WebAssembly and GPUs are usually separated by an expensive serialization boundary: on most hardware, getting data from the VM sandbox to the accelerator means copying it across the bus. Apple Silicon’s unified memory architecture erases that boundary (no bus, same physical memory), and what comes out is a runtime where Wasm is the control plane and the GPU is the compute plane, with near-zero overhead between them.

I’m building something called Driftwood that uses this for stateful AI inference… and this post is about the foundation: how the zero-copy chain works, what I measured, and what it opens up. Still early, still thinking it through.

Why is this generally difficult?

Quick background, for anyone who doesn’t live in this stack: WebAssembly gives you a sandbox. Your module gets a flat byte array (linear memory) and that’s the universe… everything outside is mediated by “host” function calls. The whole point is isolation, portability, determinism.

GPUs also want a flat byte array, but a specific kind: page-aligned, pinned, and reachable by the DMA engine. On a discrete GPU (think NVIDIA or AMD), GPU memory sits across the PCIe bus from the CPU, so getting data from the Wasm module’s linear memory to the GPU means copying from the sandbox to host memory, then copying across the bus to GPU memory. Two copies, two latency hits, and a weird impedance mismatch between “isolated VM” and “hardware accelerator”.

Apple Silicon changes the physics. The CPU and GPU share the same physical memory (Apple’s Unified Memory Architecture)… no bus! The GPU reads the same DRAM the CPU reads. The real question: can you thread one pointer through the layers of abstraction (Wasm runtime, GPU API) without something making a defensive copy along the way?

Turns out… you can!

The three-link chain

Three links. I verified each one in isolation before composing them: this is the kind of system where, if you skip the isolation step and the whole pipeline breaks, you can’t tell which joint is leaking.

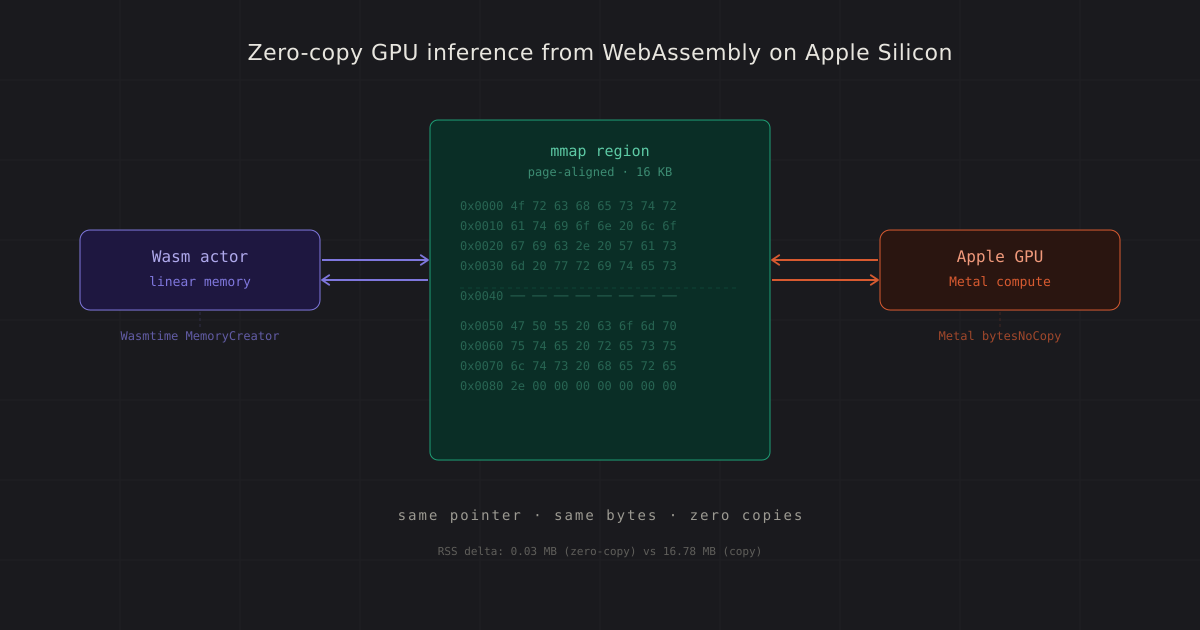

Link 1: mmap gives you page-aligned memory. On ARM64 macOS, mmap with MAP_ANON | MAP_PRIVATE returns 16 KB-aligned addresses. This is no lucky accident: 16 KB is the page size on Apple Silicon macOS, and mmap returns page-aligned addresses by contract. Alignment matters because Metal requires it.
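Rust’s standard library has no mmap wrapper, so this stdlib-only sketch uses `std::alloc` with a 16 KiB `Layout` as a stand-in for `mmap(MAP_ANON | MAP_PRIVATE)`. `PAGE_SIZE` is hard-coded to the Apple Silicon value (real code would ask the OS), and `PageRegion` is a hypothetical helper. It shows the two properties the next link depends on: a page-aligned start address and, assuming Metal also wants whole pages, a length rounded up to a page multiple.

```rust
use std::alloc::{alloc_zeroed, dealloc, Layout};

/// Page size on Apple Silicon macOS (16 KiB). Hard-coded for the sketch.
const PAGE_SIZE: usize = 16 * 1024;

/// Round a byte length up to a whole number of pages.
fn round_up_to_page(len: usize) -> usize {
    (len + PAGE_SIZE - 1) / PAGE_SIZE * PAGE_SIZE
}

/// A zeroed, page-aligned region; a portable stand-in for an anonymous private mmap.
struct PageRegion {
    ptr: *mut u8,
    layout: Layout,
}

impl PageRegion {
    fn new(len: usize) -> PageRegion {
        let size = round_up_to_page(len.max(1));
        let layout = Layout::from_size_align(size, PAGE_SIZE).expect("valid layout");
        // SAFETY: `layout` has a non-zero size.
        let ptr = unsafe { alloc_zeroed(layout) };
        assert!(!ptr.is_null(), "allocation failed");
        PageRegion { ptr, layout }
    }

    fn len(&self) -> usize {
        self.layout.size()
    }

    fn as_ptr(&self) -> *mut u8 {
        self.ptr
    }
}

impl Drop for PageRegion {
    fn drop(&mut self) {
        // SAFETY: `ptr` came from `alloc_zeroed` with this exact layout.
        unsafe { dealloc(self.ptr, self.layout) }
    }
}

fn main() {
    let region = PageRegion::new(16 * 1024 * 1024 + 1);
    println!("ptr = {:p}, len = {} bytes", region.as_ptr(), region.len());
    assert_eq!(region.as_ptr() as usize % PAGE_SIZE, 0, "not page-aligned");
}
```

The alignment comes from the `Layout` rather than the OS page size, so the check holds on any platform; with a real mmap you would get it from the kernel instead.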

Link 2: Metal accepts that pointer without copying it. MTLDevice.makeBuffer(bytesNoCopy:length:) wraps an existing pointer as a Metal buffer. On Apple Silicon this is a true zero-copy path: the GPU accesses the same physical memory as the CPU. I verified pointer identity: the pointer returned by MTLBuffer.contents() equals the original mmap pointer. I verified there were no hidden copies: the RSS delta was 0.03 MB (measurement noise) versus 16.78 MB for the explicit-copy path. Compute latency was effectively identical, too.
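The two checks are simple enough to model without Metal. In this hypothetical Rust sketch, `GpuView` is not a Metal type: `Wrapped` plays the role of a `bytesNoCopy` buffer and `Copied` the role of a buffer created from a copy, with `contents()` standing in for `MTLBuffer.contents()`.

```rust
/// How a region is exposed to the (simulated) GPU.
enum GpuView<'a> {
    /// Wraps the caller's bytes in place: the bytesNoCopy case.
    Wrapped(&'a [u8]),
    /// Owns a private duplicate: the explicit-copy case.
    Copied(Vec<u8>),
}

impl<'a> GpuView<'a> {
    /// Analogue of MTLBuffer.contents(): where the GPU-visible bytes live.
    fn contents(&self) -> *const u8 {
        match self {
            GpuView::Wrapped(bytes) => bytes.as_ptr(),
            GpuView::Copied(bytes) => bytes.as_ptr(),
        }
    }

    /// Extra memory this view costs on top of the source region
    /// (the quantity an RSS delta approximates).
    fn extra_bytes(&self) -> usize {
        match self {
            GpuView::Wrapped(_) => 0,
            GpuView::Copied(bytes) => bytes.len(),
        }
    }

    /// Pointer-identity check: zero-copy iff the view points at the source bytes.
    fn is_zero_copy(&self, source: &[u8]) -> bool {
        self.contents() == source.as_ptr()
    }
}

fn main() {
    let source = vec![1u8; 16 * 1024 * 1024]; // stands in for the 16 MB mmap region
    let wrapped = GpuView::Wrapped(&source);
    let copied = GpuView::Copied(source.clone());
    println!("wrapped: zero-copy={} extra={} B", wrapped.is_zero_copy(&source), wrapped.extra_bytes());
    println!("copied:  zero-copy={} extra={} B", copied.is_zero_copy(&source), copied.extra_bytes());
}
```

A full copy of a 16 MiB region is 16,777,216 bytes, i.e. the 16.78 MB figure for the copy path.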

Link 3: Wasmtime lets you bring your own allocator. Wasmtime’s MemoryCreator trait lets you control how linear memory is allocated: instead of letting Wasmtime call mmap internally, you provide the backing memory yourself. I implement MemoryCreator to return my own mmap region, and Wasmtime’s memory.data_ptr() returns exactly the pointer I handed it. The Wasm module reads and writes through Wasm’s memory API; the GPU reads and writes through the Metal buffer; both operate on the same bytes.
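Wasmtime’s actual `MemoryCreator`/`LinearMemory` signatures have changed between releases, so this is not that API. It is a stdlib-only sketch of the same contract with hypothetical names (`HostRegion`, `LinearMemory`): the runtime never allocates, `data_ptr()` is the host’s pointer, and growth happens in place inside a pre-reserved region. The in-place growth rule is an assumption about what keeps the GPU buffer valid, since relocating the memory would leave it pointing at stale bytes.

```rust
/// Wasm linear memory grows in 64 KiB pages.
const WASM_PAGE: usize = 64 * 1024;

/// Hypothetical allocator hook: the host hands the runtime a region it already owns.
trait HostRegion {
    fn base(&self) -> *mut u8;
    fn capacity(&self) -> usize;
}

/// Host-owned buffer; in the real chain this would be the mmap'd region.
struct OwnedRegion {
    bytes: Box<[u8]>,
}

impl HostRegion for OwnedRegion {
    fn base(&self) -> *mut u8 {
        self.bytes.as_ptr() as *mut u8
    }
    fn capacity(&self) -> usize {
        self.bytes.len()
    }
}

/// Linear memory that never allocates: it only tracks how much of the host
/// region is currently visible to the guest.
struct LinearMemory<'r, R: HostRegion> {
    region: &'r R,
    size: usize,
}

impl<'r, R: HostRegion> LinearMemory<'r, R> {
    fn new(region: &'r R, initial_pages: usize) -> Result<Self, String> {
        let size = initial_pages * WASM_PAGE;
        if size > region.capacity() {
            return Err(format!("need {size} bytes, region has {}", region.capacity()));
        }
        Ok(LinearMemory { region, size })
    }

    /// The same pointer the host allocated.
    fn data_ptr(&self) -> *mut u8 {
        self.region.base()
    }

    fn byte_size(&self) -> usize {
        self.size
    }

    /// Grow in place and return the old size in pages (like memory.grow).
    /// Growth past the reservation is refused rather than relocated.
    fn grow(&mut self, delta_pages: usize) -> Result<usize, String> {
        let old_pages = self.size / WASM_PAGE;
        let new_size = self.size + delta_pages * WASM_PAGE;
        if new_size > self.region.capacity() {
            return Err("grow would exceed the reserved region".to_string());
        }
        self.size = new_size;
        Ok(old_pages)
    }
}

fn main() {
    let region = OwnedRegion { bytes: vec![0u8; 4 * WASM_PAGE].into_boxed_slice() };
    let mut mem = LinearMemory::new(&region, 1).unwrap();
    assert_eq!(mem.data_ptr(), region.base());
    mem.grow(3).unwrap(); // 4 pages: fits exactly
    assert!(mem.grow(1).is_err()); // 5 pages: refused, pointer unchanged
    println!("linear memory: {} bytes at {:p}", mem.byte_size(), mem.data_ptr());
}
```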

Composition: allocate one mmap region and hand it to both Wasmtime (as the actor’s linear memory) and Metal (as a GPU buffer). The Wasm module writes data at a known offset, the GPU computes on it, and the results appear in the module’s linear memory. No copies and no explicit data transfer.
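The “known offset” protocol can be sketched in plain Rust. Everything here is illustrative: `SharedLayout`, the A/B/C slots, and `kernel_add` (an elementwise add standing in for a real GPU kernel) are hypothetical. Values are stored little-endian because Wasm linear memory is little-endian (as is Apple Silicon).

```rust
/// Slots inside the shared region (hypothetical layout).
const A: usize = 0;
const B: usize = 1;
const C: usize = 2;

/// Three f32 arrays of `len` elements packed back to back in one region.
struct SharedLayout {
    len: usize,
}

impl SharedLayout {
    /// Byte offset of a slot; both sides must agree on this.
    fn offset(&self, slot: usize) -> usize {
        slot * self.len * 4
    }
    fn total_bytes(&self) -> usize {
        3 * self.len * 4
    }
}

fn store(region: &mut [u8], byte_off: usize, vals: &[f32]) {
    for (i, v) in vals.iter().enumerate() {
        let at = byte_off + 4 * i;
        region[at..at + 4].copy_from_slice(&v.to_le_bytes());
    }
}

fn load(region: &[u8], byte_off: usize, i: usize) -> f32 {
    let at = byte_off + 4 * i;
    f32::from_le_bytes([region[at], region[at + 1], region[at + 2], region[at + 3]])
}

/// Stand-in kernel: C = A + B, reading and writing the same bytes in place.
fn kernel_add(region: &mut [u8], layout: &SharedLayout) {
    for i in 0..layout.len {
        let sum = load(region, layout.offset(A), i) + load(region, layout.offset(B), i);
        store(region, layout.offset(C) + 4 * i, &[sum]);
    }
}

fn main() {
    let layout = SharedLayout { len: 4 };
    let mut region = vec![0u8; layout.total_bytes()]; // one allocation, both sides
    store(&mut region, layout.offset(A), &[1.0, 2.0, 3.0, 4.0]); // "Wasm" writes A
    store(&mut region, layout.offset(B), &[10.0, 20.0, 30.0, 40.0]); // "Wasm" writes B
    kernel_add(&mut region, &layout); // "GPU" computes C
    let c: Vec<f32> = (0..layout.len).map(|i| load(&region, layout.offset(C), i)).collect();
    println!("{:?}", c); // [11.0, 22.0, 33.0, 44.0]
}
```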

I tested the full chain with a 128×128 matrix multiplication: the Wasm module fills matrices A and B, the GPU runs a GEMM shader, and the module reads the result back from C. Zero errors across 16,384 elements. A small test, but this is the kind of thing where either everything lines up or you get garbage, so zero errors is the signal I wanted.
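One way to make the “zero errors” check strict is to choose inputs where the exact answer is known. In this sketch, the input pattern and the CPU `gemm` (standing in for the shader’s output) are assumptions, not the original test: small integer entries keep every partial sum exactly representable in f32, so each element can be compared exactly against an f64 reference.

```rust
const N: usize = 128;

/// Deterministic small-integer inputs (row-major). Max sum is 128 * 6 * 4 = 3072,
/// far below 2^24, so f32 arithmetic is exact.
fn fill_inputs() -> (Vec<f32>, Vec<f32>) {
    let a: Vec<f32> = (0..N * N).map(|idx| ((idx / N + idx % N) % 7) as f32).collect();
    let b: Vec<f32> = (0..N * N).map(|idx| ((3 * (idx / N) + idx % N) % 5) as f32).collect();
    (a, b)
}

/// Naive row-major GEMM; stands in for the GPU result in this sketch.
fn gemm(a: &[f32], b: &[f32]) -> Vec<f32> {
    let mut c = vec![0.0f32; N * N];
    for i in 0..N {
        for k in 0..N {
            let aik = a[i * N + k];
            for j in 0..N {
                c[i * N + j] += aik * b[k * N + j];
            }
        }
    }
    c
}

/// Count elements of `c` that differ from an f64 reference.
fn count_errors(a: &[f32], b: &[f32], c: &[f32]) -> usize {
    let mut errors = 0;
    for i in 0..N {
        for j in 0..N {
            let expected: f64 = (0..N).map(|k| a[i * N + k] as f64 * b[k * N + j] as f64).sum();
            if c[i * N + j] as f64 != expected {
                errors += 1;
            }
        }
    }
    errors
}

fn main() {
    let (a, b) = fill_inputs();
    let mut c = gemm(&a, &b);
    println!("errors: {} / {}", count_errors(&a, &b, &c), N * N); // errors: 0 / 16384
    c[42] += 1.0; // a single wrong element must be caught
    assert_eq!(count_errors(&a, &b, &c), 1);
}
```

With random floats you would need a tolerance instead, because a GPU reduction may sum in a different order.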

What I measured

Three things I cared about: pointer identity (is it really zero-copy?), memory overhead (are hidden copies sneaking in?), and correctness (does the GPU see what Wasm wrote?).

| Measurement | Zero-copy path | Copy path |
|---|---|---|
| Pointer identity | mmap == MTLBuffer | different addresses |
| RSS delta (16 MB region) | 0.03 MB | 16.78 MB |
| GEMM latency (128×128) | ~6.75 ms | ~6.75 ms |
| Correctness (16K elements) | 0 errors | 0 errors |

The identical latency makes sense: on UMA, the computation itself is the same either way. The difference shows up in the memory picture: the zero-copy path adds essentially no overhead to make data GPU-accessible, while the copy path doubles your memory footprint.

At small tensor sizes, nobody cares. At the scale of a transformer inference KV cache (hundreds of megabytes per conversation), it’s the difference between fitting four actors in memory or two. That’s the regime I actually want to work in, so the memory side matters.
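The “four actors or two” arithmetic, as a tiny sketch. The 2,000 MB budget and 500 MB of state per actor are illustrative numbers chosen to fall in the “hundreds of megabytes” range, not measurements.

```rust
/// Actors that fit in `budget_mb` when each carries `state_mb` of GPU-visible
/// state. The copy path keeps a second, GPU-side copy of that state.
fn actors_that_fit(budget_mb: u64, state_mb: u64, zero_copy: bool) -> u64 {
    assert!(state_mb > 0, "state size must be positive");
    let per_actor = if zero_copy { state_mb } else { 2 * state_mb };
    budget_mb / per_actor
}

fn main() {
    // Illustrative numbers only.
    let (budget, state) = (2_000, 500);
    println!("zero-copy: {} actors", actors_that_fit(budget, state, true)); // 4
    println!("copy path: {} actors", actors_that_fit(budget, state, false)); // 2
}
```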

From zero-copy to inference

So now I’ve got a primitive: Wasm and the GPU share memory without any overhead. What do you do with it?

I plugged the chain into Apple’s MLX framework and ran Llama 3.2 1B Instruct from a Wasm actor: a complete transformer decoder written in Rust, compiled to a native host runtime, driving inference on an Apple Silicon GPU via host function calls. (I was too lazy to wire up a custom kernel path from scratch, and… MLX was there.)
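Driftwood’s actual host-function ABI isn’t described here, so this is a hypothetical sketch of the general pattern: the guest passes an offset and length into its own linear memory, and the host turns that into a borrowed slice after a bounds check, so tensor data crosses the boundary by reference. `guest_slice` and `host_sum_f32` are illustrative names, and the “computation” is just a sum.

```rust
/// Resolve a guest-supplied (offset, len) pair to a borrowed view of linear
/// memory. The guest controls both numbers, so everything is bounds-checked.
fn guest_slice(memory: &[u8], offset: u32, len: u32) -> Result<&[u8], String> {
    let start = offset as usize;
    let end = start.checked_add(len as usize).ok_or("offset + len overflows")?;
    memory
        .get(start..end)
        .ok_or_else(|| format!("range {start}..{end} is outside {} bytes of linear memory", memory.len()))
}

/// Hypothetical host function: sums `count` little-endian f32s that the guest
/// placed at `offset`. The tensor is read in place; nothing is copied out.
fn host_sum_f32(memory: &[u8], offset: u32, count: u32) -> Result<f32, String> {
    let len = count.checked_mul(4).ok_or("count too large")?;
    let bytes = guest_slice(memory, offset, len)?;
    Ok(bytes
        .chunks_exact(4)
        .map(|b| f32::from_le_bytes([b[0], b[1], b[2], b[3]]))
        .sum())
}

fn main() {
    let mut memory = vec![0u8; 64 * 1024]; // one Wasm page of "linear memory"
    // The guest would write these through its own memory API; here we do it directly.
    for (i, v) in [1.5f32, 2.5, 3.0].iter().enumerate() {
        memory[256 + 4 * i..256 + 4 * i + 4].copy_from_slice(&v.to_le_bytes());
    }
    println!("{:?}", host_sum_f32(&memory, 256, 3)); // Ok(7.0)
    println!("{}", host_sum_f32(&memory, 65_530, 4).is_err()); // true
}
```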

Measured latency, running Llama 3.2 1B (4-bit quantized, 695 MB) on a 2021 M1 MacBook Pro (my old personal laptop; I’ll re-benchmark on a proper Mac Studio someday when I get one 😄):

| Operation | Latency |
|---|---|
| Model load (safetensors) | 229 ms (one-time) |
| Prefill (5 tokens) | 106 ms |
| Per-token generation | ~9 ms |
| Host function boundary | negligible |

The host function boundary (Wasm-to-GPU dispatch) is unmeasurable against the cost of inference itself. Anyone who has worked with sandboxed runtimes has probably worried about paying for that boundary crossing on every dispatch. On this hardware, it’s not a thing.

KV cache portability

Transformers maintain a key-value cache that stores the attention keys and values for the conversation so far, and that cache is normally ephemeral (kill the process, lose the cache, start over). If you’ve tried running local inference, you know the feeling.

Because the cache lives in GPU-accessible memory that I control, I can serialize it. So I dump the KV cache in safetensors format (standard ML tensor serialization, nothing exotic) and restore it later, on the same machine or a different one, or potentially against a different model on a different machine! That last one I haven’t tested across a meaningfully different architecture yet… we’ll see.
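The safetensors layout is simple: an 8-byte little-endian header length, a JSON header mapping each tensor name to its dtype, shape, and byte offsets into the data section, then the raw tensor bytes. Real code would use the `safetensors` crate. This dependency-free writer is only a sketch: the tensor names (`layers.0.k`), the F32 dtype, and the assumption that names need no JSON escaping are illustrative, not Driftwood’s actual cache layout, and the optional `__metadata__` entry is omitted.

```rust
/// Serialize named f32 tensors into the safetensors layout:
/// [u64 LE header length][JSON header, space-padded to 8 bytes][raw LE tensor bytes].
fn to_safetensors(tensors: &[(&str, Vec<usize>, Vec<f32>)]) -> Vec<u8> {
    let mut entries = Vec::new();
    let mut data = Vec::new();
    for (name, shape, values) in tensors {
        assert_eq!(shape.iter().product::<usize>(), values.len(), "shape/data mismatch for {name}");
        let begin = data.len();
        for v in values {
            data.extend_from_slice(&v.to_le_bytes());
        }
        let dims: Vec<String> = shape.iter().map(|d| d.to_string()).collect();
        // data_offsets are relative to the start of the data section.
        entries.push(format!(
            "\"{name}\":{{\"dtype\":\"F32\",\"shape\":[{}],\"data_offsets\":[{begin},{}]}}",
            dims.join(","),
            data.len()
        ));
    }
    let mut header = format!("{{{}}}", entries.join(","));
    while header.len() % 8 != 0 {
        header.push(' '); // trailing whitespace padding keeps the data section aligned
    }
    let mut out = Vec::with_capacity(8 + header.len() + data.len());
    out.extend_from_slice(&(header.len() as u64).to_le_bytes());
    out.extend_from_slice(header.as_bytes());
    out.extend_from_slice(&data);
    out
}

/// Read back the header length from the first 8 bytes.
fn header_len(file: &[u8]) -> u64 {
    u64::from_le_bytes(file[..8].try_into().expect("at least 8 bytes"))
}

fn main() {
    // Hypothetical names and shapes: one layer's K and V for 2 tokens, 3 values each.
    let file = to_safetensors(&[
        ("layers.0.k", vec![2, 3], vec![0.5; 6]),
        ("layers.0.v", vec![2, 3], vec![-1.0; 6]),
    ]);
    let n = header_len(&file) as usize;
    println!("{}", String::from_utf8_lossy(&file[8..8 + n]).trim_end());
}
```

Writing `file` to disk with `std::fs::write` gives a file any safetensors reader should be able to open.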

| Operation | Latency | Size |
|---|---|---|
| Serialize (24 tokens) | 1.1 ms | 1.58 MB (~66 KB/token) |
| Restore from disk | 1.4 ms | |
| Re-prefill from scratch (the alternative) | 67.7 ms | |

Speedup from restore: 5.45×

Round-trip fidelity: bit-identical (10/10 tokens match)
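The per-token size follows from the model shape: each token stores one key and one value vector per layer per KV head. Using Llama 3.2 1B’s published config (16 layers, 8 KV heads, head dimension 64), this sketch computes the size for two element widths. Which dtype the dump actually uses is an assumption here; F32 lines up with the ~66 KB/token above, and F16 would be half that.

```rust
/// Bytes of KV cache per token: K and V, per layer, per KV head.
fn kv_bytes_per_token(layers: u64, kv_heads: u64, head_dim: u64, bytes_per_elem: u64) -> u64 {
    2 * layers * kv_heads * head_dim * bytes_per_elem
}

fn main() {
    // Llama 3.2 1B: 16 layers, 8 KV heads (grouped-query attention), head_dim 64.
    let f32_bytes = kv_bytes_per_token(16, 8, 64, 4);
    let f16_bytes = kv_bytes_per_token(16, 8, 64, 2);
    println!("F32: {} B/token, 24 tokens = {} B", f32_bytes, 24 * f32_bytes); // 65536, 1572864
    println!("F16: {} B/token, 24 tokens = {} B", f16_bytes, 24 * f16_bytes); // 32768, 786432
}
```

At F32, 24 tokens come to 1,572,864 bytes (about 1.57 MB) before the safetensors header.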

That’s 5.45× at 24 tokens, and the ratio improves with context length: restore time is roughly constant, while prefill scales linearly. At 4,096 tokens, a restore should be about 100× faster than recomputing. (I haven’t actually pushed it to 4,096 yet; this is napkin math from the constant-vs-linear shapes.)
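The constant-vs-linear argument can be written as a small cost model. All parameters below are placeholders, not measurements: if restore cost is purely fixed, the speedup grows linearly with context; if restore also pays a per-token cost (for example, reading ~66 KB per token from disk), the speedup levels off at `prefill_ms_per_token / restore_ms_per_token`. Which regime applies determines how far the 4,096-token extrapolation holds.

```rust
/// Restore-vs-recompute speedup under a simple model (all inputs assumed):
/// prefill is linear in tokens; restore is a fixed cost plus a per-token cost.
fn restore_speedup(tokens: f64, prefill_ms_per_token: f64, restore_fixed_ms: f64, restore_ms_per_token: f64) -> f64 {
    (prefill_ms_per_token * tokens) / (restore_fixed_ms + restore_ms_per_token * tokens)
}

/// Limit of the speedup as context grows, when restore has a per-token cost.
fn speedup_ceiling(prefill_ms_per_token: f64, restore_ms_per_token: f64) -> f64 {
    prefill_ms_per_token / restore_ms_per_token
}

fn main() {
    // Placeholder parameters, not measurements.
    let (prefill_per_tok, restore_fixed, restore_per_tok) = (2.0, 1.0, 0.01);
    for tokens in [24.0, 256.0, 1024.0, 4096.0] {
        println!(
            "{tokens:>6} tokens: fixed-only {:>8.1}x, with per-token restore {:>6.1}x",
            restore_speedup(tokens, prefill_per_tok, restore_fixed, 0.0),
            restore_speedup(tokens, prefill_per_tok, restore_fixed, restore_per_tok),
        );
    }
    println!("ceiling: {:.0}x", speedup_ceiling(prefill_per_tok, restore_per_tok));
}
```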

This is the basis of stateful actor mobility: pause a conversation, move it somewhere else, and resume it with the full context intact. The Wasm module’s linear memory holds the actor’s logical state; the KV cache captures the inference engine’s cached context. Together: a portable snapshot of an ongoing AI conversation (or at least, that’s the plan 😅).
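A hypothetical shape for that snapshot, pairing the two halves. The struct, its fields, and the restore policy are illustrative, not Driftwood’s format. The policy is deliberately conservative: the actor’s state always restores, but the KV tensors are reused only under the same model id, and anything else falls back to re-prefilling, since whether a cache survives a model swap is exactly the open question.

```rust
/// Hypothetical snapshot of one actor.
struct ActorSnapshot {
    model_id: String,
    /// Wasm linear memory: the actor's logical state.
    linear_memory: Vec<u8>,
    /// Serialized KV cache (e.g. safetensors bytes): the inference context.
    kv_cache: Vec<u8>,
    /// Number of tokens the KV cache covers.
    cached_tokens: usize,
}

#[derive(Debug, PartialEq)]
enum RestorePlan {
    /// Same model: reload both halves, no recomputation.
    Full,
    /// Different model: keep the actor's state, rebuild the context by prefill.
    StateOnly { reprefill_tokens: usize },
}

impl ActorSnapshot {
    fn plan_restore(&self, running_model: &str) -> RestorePlan {
        if self.model_id == running_model {
            RestorePlan::Full
        } else {
            RestorePlan::StateOnly { reprefill_tokens: self.cached_tokens }
        }
    }

    fn size_bytes(&self) -> usize {
        self.linear_memory.len() + self.kv_cache.len()
    }
}

fn main() {
    let snap = ActorSnapshot {
        model_id: "llama-3.2-1b-instruct-4bit".to_string(), // hypothetical id
        linear_memory: vec![0u8; 64 * 1024],
        kv_cache: vec![0u8; 1024],
        cached_tokens: 24,
    };
    println!("{} bytes", snap.size_bytes());
    println!("{:?}", snap.plan_restore("llama-3.2-1b-instruct-4bit")); // Full
    println!("{:?}", snap.plan_restore("some-other-model")); // StateOnly { reprefill_tokens: 24 }
}
```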

What I’m building

Driftwood is a runtime for stateful Wasm actors with GPU inference. The zero-copy chain is the foundation; on top of it I’m adding actor snapshots (pause and resume any conversation), checkpoint portability (move inference state between machines), and multi-model support (the snapshot format is model-agnostic, so in theory a model swap preserves the actor’s identity… it might work; I’ll revisit once I’ve tested it).

It’s early days, and things are still being pieced together. But the “physics” works: Wasm and the GPU can share memory with zero copy overhead on Apple Silicon, the KV cache is portable, and a full transformer runs from a sandboxed actor at native speed. Next things I want to find out: does the snapshot actually survive a model swap, does the chain hold up with larger models, and am I missing some obvious reason this falls apart at scale. Slow and steady…

More details on the actor model and snapshot architecture in a future post, once I’ve actually shipped something beyond the “physics work” stage.
