The vector database category is changing in response to the needs of agentic AI.

The retrieval-augmented generation (RAG)-to-vector database pipeline doesn’t cut it anymore; agentic AI requires a different approach that includes context. VentureBeat’s Q1 2026 Pulse survey outlines this trend: each standalone vector database is losing adoption share, while hybrid retrieval intent has tripled to 33.3%, the fastest growing strategic position in the dataset.

Vector database pioneer Pinecone recognizes this and is working to meet the specific needs of agentic AI.

The company today announced Nexus, which it positions as a knowledge engine rather than an improvement in retrieval. Nexus offers a context compiler that converts raw enterprise data into persistent, task-specific knowledge artifacts before they are queried by agents, and a composable retriever that serves those artifacts with field-level citations and deterministic conflict resolution.

With Nexus, Pinecone is releasing KnowQL, a declarative query language that gives agents a vocabulary for specifying output size, confidence requirements, and latency budgets. In Pinecone’s own internal benchmarks, a financial analysis task that previously consumed 2.8 million tokens was completed by Nexus with only 4,000. This represents a 98% reduction, although the company has not yet validated this in a customer production deployment. Nexus is in early access starting today.

"RAG was built for human users," Pinecone CEO Ash Ashutosh told VentureBeat. "Nexus was built for agentic users, because their language is very different. The reactions they expect vary greatly. The work that is assigned to an agent is very different from that done by a chatbot."

Why RAG was never designed for what agents actually do

RAG involves a question, a response, and a person in the loop to interpret the results. But agents work differently. They are assigned tasks, not questions – and completing these requires gathering context from multiple sources, resolving conflicts, keeping track of what has already been retrieved, and deciding what to ask next.

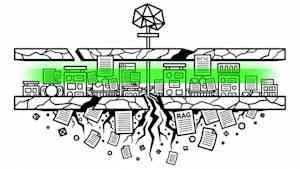

The difference matters. A RAG pipeline retrieves documents and hands them to a model at inference time. Each agent session starts cold, with no compiled understanding of the enterprise data estate – which tables belong to whom, which sources are authoritative for which queries, and which formats a downstream agent will actually be able to consume. That understanding has to be rebuilt in every session.

"At the root of all this was a very simple problem," Ashutosh said. "You’re asking agents – machines – to work on systems and data that were designed for humans."

Pinecone estimates that 85% of agent computation effort goes into re-discovery cycles rather than task completion. The downstream effects are compounded: unpredictable latency, uncontrolled token costs, and non-deterministic outcomes. Run the same task twice against the same data, and an agent may produce different answers without any record of which source produced the result. For enterprises where auditability is a compliance requirement, this is a structural inefficiency, not a tuning problem.

What is Nexus and how does it work

Nexus moves the reasoning work from inference time to compile time. In a traditional RAG pipeline, the reasoning required to interpret, contextualize, and structure knowledge occurs at the time an agent makes a query – every session, every time, burning tokens on work that could have already been done. Nexus does that reasoning only once, during a compilation phase that runs before any agent queries, then stores the results as a reusable knowledge artifact. Agents receive structured, action-ready context instead of raw documents they have to interpret on the fly.
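The compile-once, reuse-many pattern described above can be illustrated with a minimal Python sketch. This is not Pinecone's implementation or API: `ArtifactStore`, `Artifact`, and the `key: value` parsing are hypothetical stand-ins, chosen only to show the expensive step running once while several agent sessions read the stored result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """Hypothetical compiled knowledge artifact for one task."""
    task: str
    fields: dict


class ArtifactStore:
    """Hypothetical store: compile ahead of time, serve many sessions."""

    def __init__(self):
        self._artifacts = {}
        self.compile_calls = 0  # counts how often the expensive step runs

    def compile(self, task, raw_docs):
        """Stand-in for the expensive reasoning step, run before any query."""
        self.compile_calls += 1
        fields = {}
        for doc in raw_docs:
            for line in doc.splitlines():
                # Toy "interpretation": pull out simple key: value pairs.
                if ":" in line:
                    key, value = line.split(":", 1)
                    fields[key.strip()] = value.strip()
        artifact = Artifact(task=task, fields=fields)
        self._artifacts[task] = artifact
        return artifact

    def get(self, task):
        """Cheap lookup at query time; no re-interpretation of raw data."""
        return self._artifacts[task]


store = ArtifactStore()
store.compile(
    "revenue_context",
    ["customer: Acme\nterm: 12 months", "billing: monthly"],
)

# Three separate agent sessions reuse the same compiled artifact.
for _session in range(3):
    context = store.get("revenue_context")
```

In this toy version the sessions pay only for a dictionary lookup; the interpretation cost is incurred once, at compile time.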

The architecture Pinecone is shipping consists of three distinct components, each of which addresses a different layer of the agent retrieval problem.

- Context compiler. Nexus takes raw source data and a task specification and builds specialized knowledge artifacts – structured, task-optimized representations that agents consume directly without interpretation overhead. The same underlying data estate produces different artifacts for different agents: a sales agent gets a deal context synthesized from CRM and call records, while a finance agent gets a revenue context linking contracts with billing schedules. Artifacts are persistent and reused across agent sessions, not regenerated at inference time.

- Composable retriever. Compiled artifacts are served at query time with typed fields, per-field citations with confidence levels, and deterministic conflict resolution. The output is shaped to match the agent’s specified format rather than returned as raw text for the agent to re-parse.

- KnowQL. Pinecone describes it as the first declarative query language designed for agents rather than humans. Six primitives – intent, filter, provenance, output size, confidence, and budget – allow agents to specify structured responses, source grounding, and latency envelopes in a single interface. Ashutosh compared the structural difference that KnowQL makes to what SQL did for relational databases: before a standard interface existed, each application created its own data access layer from scratch.
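The article does not show KnowQL syntax, so the following Python sketch only illustrates the idea behind the components above. `KnowledgeRequest`, `Candidate`, `resolve`, and the source-priority rule are all assumptions made for illustration, not Pinecone's design. The request carries the six primitives named in the list, and conflicts between sources are settled by a fixed rule so that the same inputs always give the same answer, with a citation per field.

```python
from dataclasses import dataclass


@dataclass
class KnowledgeRequest:
    """Hypothetical request carrying the six primitives named above."""
    intent: str
    filter: dict        # carried on the request, not enforced in this sketch
    provenance: bool    # whether each field should cite its source
    output_size: int    # maximum number of fields to return
    confidence: float   # minimum confidence a value needs to be returned
    budget_ms: int      # latency budget, not enforced in this sketch


@dataclass
class Candidate:
    """One source's claim about the value of one field."""
    field_name: str
    value: str
    source: str
    confidence: float


def resolve(candidates, request, source_priority):
    """Pick one value per field using a fixed, repeatable rule:
    prefer the higher-priority source, then the higher confidence."""
    best = {}
    for c in candidates:
        if c.confidence < request.confidence:
            continue  # below the requested confidence floor
        rank = (source_priority.index(c.source), -c.confidence)
        if c.field_name not in best or rank < best[c.field_name][0]:
            best[c.field_name] = (rank, c)
    result = {}
    for name in sorted(best)[: request.output_size]:
        c = best[name][1]
        entry = {"value": c.value, "confidence": c.confidence}
        if request.provenance:
            entry["source"] = c.source  # per-field citation
        result[name] = entry
    return result


candidates = [
    Candidate("contract_value", "120000", "crm", 0.80),
    Candidate("contract_value", "125000", "billing", 0.95),
    Candidate("renewal_date", "2026-09-01", "crm", 0.90),
    Candidate("owner", "unknown", "email", 0.30),
]
request = KnowledgeRequest(
    intent="summarize revenue context",
    filter={"account": "Acme"},
    provenance=True,
    output_size=5,
    confidence=0.5,
    budget_ms=200,
)
answer = resolve(candidates, request, source_priority=["billing", "crm", "email"])
```

Here the conflicting `contract_value` entries resolve to the billing system's figure because that source is ranked first, and the low-confidence `owner` entry is dropped. Reordering the candidate list does not change the result, which is the property auditability depends on.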

The relationship between Nexus and Pinecone’s underlying vector database is additive. The context compiler produces knowledge artifacts that are indexed and stored in the vector database; the compilation layer shapes and serves knowledge; the vector layer handles storage, retrieval speed, and scale.

"The vectors are still stored and managed by the Pinecone vector database," Ashutosh said.

What do analysts say about architectural claims?

Moving reasoning from the inference phase to the compilation phase is not a new concept – ontologies, data catalogs, and semantic layers have pursued versions of it for years. What has changed is the ability to do this at scale without dedicated engineering teams for each domain. That’s the specific argument Nexus is making, and it’s where analysts see real progress.

Stephanie Walter, practice leader for the AI stack at Hyperframe Research, told VentureBeat that Nexus is demonstrably important because it moves knowledge work from runtime chaos to pre-compiled structure. However, she stressed that this is an evolution of the RAG architecture, not a complete reinvention.

"Real innovation is not the idea itself, but the productization of knowledge compilation as a layer of first-class infrastructure," Walter said. "If Pinecone can implement this reliably, it becomes meaningful infrastructure, not just another RAG tuning trick."

The technical mechanism behind that claim is what Gartner’s distinguished VP analyst Arun Chandrasekaran has called a meaningful architectural distinction.

"Unlike traditional RAG, which relies on pure semantic search at runtime, architectural compilation embeds structural logic in the metadata layer, which can boost response time and provide better logic," Chandrasekaran told VentureBeat. "This is a significant leap from simple retrieval to advanced reasoning, allowing agents to navigate the enterprise schema and have better memory for relevance."

Competitive landscape

Many vendors accept that vector databases and traditional RAG are not sufficient for agentic AI.

Microsoft has expanded its FabricIQ technology, providing semantic context for agentic AI. Google recently announced its agentic data cloud as an approach to help resolve similar issues. There are also standalone episodic memory technologies, such as massa, which provide another option for users.

But analysts are less focused on feature comparisons than on what buyers should actually evaluate.

"The agentic AI stack is splitting into dozens of features, but enterprise buyers shouldn’t chase features," Walter said. "They must pursue controls: cost control, governance control and security control."

She argued that most enterprise failures in agentic AI will not be technical. They will be operational – linked to cost overruns, governance gaps and lapses in security discipline.

The capability bar extends beyond retrieval speed.

"The true differentiator is deterministic grounding," Chandrasekaran said, pointing to technologies like knowledge graphs that ensure agents understand structural relationships within enterprise data rather than returning surface-level matches. Interoperability is a related consideration: standards such as Model Context Protocol (MCP) make sense for connecting agents to legacy data sources without creating new dependencies.

What does this mean for enterprises

RAG and standalone vector databases were created for a different era. The workload of agents is exposing the limitations of both.

The retrieval cost problem is architectural

Teams running complex agentic workloads on traditional RAG pipelines are burning tokens at inference time on work that could already be done – interpreting, contextualizing, and structuring knowledge from scratch, in every session. This is a design problem. Tuning the retrieval layer won’t fix it. The question for data engineering teams is whether their current stack is structurally capable of pre-compiling knowledge for specific agent tasks, or whether it was built for a human user who never needed that capability.

Governance is what separates a pilot from a production deployment

The capabilities that determine whether agentic AI gets approved for enterprise use are not performance metrics.

"The real enterprise value proposition is not just fast retrieval, but controlled knowledge pipelines," Walter said. "Those capabilities are what transform agentic AI from an experiment into something that finance and risk teams will actually approve of."

The budget has changed

VentureBeat’s Q1 Pulse data shows that retrieval optimization investment increased to 28.9% in March, outpacing evaluation spending for the first time in the quarter. Enterprises have finished measuring their retrieval problems. They are now spending money to fix them.

"The future of agentic AI will not be determined by who has the longest context window," Walter said. "This will be determined by who can operationalize trusted knowledge at scale without increasing costs or governance."
