Enterprise data stacks were built for humans running scheduled queries. As AI agents begin acting autonomously on behalf of businesses around the clock, that architecture is breaking down, and vendors are racing to rebuild it. Google’s answer, announced at Cloud Next on Wednesday, is the Agentic Data Cloud.

The architecture rests on three pillars:

- Knowledge Catalog. Automates semantic metadata curation by inferring business logic from query logs, without manual intervention by data stewards.
- Cross-Cloud Lakehouse. Lets BigQuery query Iceberg tables on AWS S3 over a private network with no egress fees.
- Data Agent Kit. Puts MCP tools into VS Code, Cloud Code, and Gemini CLI so data engineers describe results instead of writing pipelines.

"Data architecture will now have to change," Andi Gutmans, VP and GM of Data Cloud at Google Cloud, told VentureBeat. "We are moving from human scale to agent scale."

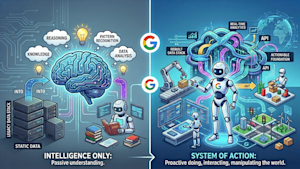

From system of intelligence to system of action

The main premise behind the Agentic Data Cloud is that enterprises are moving from human-scale to agent-scale operations.

Historically, data platforms have been optimized for reporting, dashboarding, and some forecasting – what Google describes as “reactive intelligence.” In that model, humans interpret the data and decide what to do.

Now that AI agents are increasingly expected to take action directly on behalf of businesses, Gutmans argued that data platforms must evolve into systems of action.

"We need to ensure that all enterprise data can be activated with AI, including both structured and unstructured data," Gutmans said. "We need to ensure that there is the right level of trust, which also means that it is not just about getting access to the data, but about actually understanding the data."

The Knowledge Catalog is Google’s answer to that problem. It is an evolution of Dataplex, Google’s existing data governance product, with a different underlying architecture. Where traditional data catalogs require data stewards to manually label tables, define business terms, and build glossaries, the Knowledge Catalog automates that process using agents.

The practical implication for data engineering teams is that the Knowledge Catalog covers the full data estate, not just a curated subset that a small team of data stewards can maintain by hand. The catalog natively covers BigQuery, Spanner, AlloyDB, and Cloud SQL, and federates with third-party catalogs including Collibra, Atlan, and DataHub. Zero-copy federation extends semantic context from SaaS applications including SAP, Salesforce Data360, ServiceNow, and Workday without the need for data movement.
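To make "inferring business logic from query logs" concrete, here is a deliberately small sketch of the general idea: counting how often two columns are joined together and treating frequent pairs as candidate relationships. It is not Google's implementation, which the article does not describe. The log contents, the `infer_relationships` name, and the `min_support` threshold are all assumptions made for illustration.

```python
import re
from collections import Counter

# Hypothetical query log; a real system would read warehouse audit logs.
QUERY_LOG = [
    "SELECT o.id FROM orders o JOIN customers c ON o.customer_id = c.id",
    "SELECT c.name, SUM(o.total) FROM orders o JOIN customers c "
    "ON o.customer_id = c.id GROUP BY c.name",
    "SELECT * FROM orders o JOIN shipments s ON o.id = s.order_id",
]

# Naive patterns: "FROM/JOIN <table> <alias>" and "ON a.col = b.col".
# They only handle simple aliased queries like the ones above.
ALIAS_RE = re.compile(r"\b(?:FROM|JOIN)\s+(\w+)\s+(\w+)", re.IGNORECASE)
JOIN_RE = re.compile(r"\bON\s+(\w+)\.(\w+)\s*=\s*(\w+)\.(\w+)", re.IGNORECASE)


def infer_relationships(queries, min_support=2):
    """Return column pairs joined at least `min_support` times in the log."""
    counts = Counter()
    for sql in queries:
        # Map each alias back to its table name for this query.
        aliases = {alias: table for table, alias in ALIAS_RE.findall(sql)}
        for left_alias, left_col, right_alias, right_col in JOIN_RE.findall(sql):
            left = f"{aliases.get(left_alias, left_alias)}.{left_col}"
            right = f"{aliases.get(right_alias, right_alias)}.{right_col}"
            # Sort so that a = b and b = a count as the same relationship.
            counts[tuple(sorted((left, right)))] += 1
    return {pair: n for pair, n in counts.items() if n >= min_support}


if __name__ == "__main__":
    for pair, n in infer_relationships(QUERY_LOG).items():
        print(pair, n)
```

A production catalog would need a real SQL parser and far richer signals, but the sketch shows why query logs are a useful source: relationships that nobody documented still show up in how people actually join tables.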

Google’s lakehouse goes cross-cloud

Google has had a data lakehouse, BigLake, since 2022. Initially it was limited to Google data only, but in recent years it has added some limited federation capabilities, enabling enterprises to query data stored in other locations.

Gutmans explained that previous federated queries worked through APIs, which limited the features and optimizations BigQuery could bring to external data. The new approach is storage-based sharing through the open Apache Iceberg format. That means, he argued, it no longer matters whether the data sits in Amazon S3 or in Google Cloud.

"What this really means is that we can bring all the goodness and all the AI capabilities to those third-party data sets," he said.

The practical result is that BigQuery can query Iceberg tables sitting on Amazon S3 through Google’s cross-cloud interconnect, a dedicated private networking layer, with no egress fees and price-performance that Google says is comparable to native AWS warehouses. All BigQuery AI functions run against that cross-cloud data without any modifications. Bidirectional federation, now in preview, extends to Databricks Unity Catalog on S3, Snowflake Polaris, and AWS Glue Data Catalog using the open Iceberg REST Catalog standard.

From writing the pipeline to describing the results

The Knowledge Catalog and the cross-cloud lakehouse address the data access and context problems. The third pillar addresses what happens when a data engineer actually sits down to build something with all this.

The Data Agent Kit is a portable set of skills, MCP tools, and IDE extensions that plug into VS Code, Cloud Code, Gemini CLI, and Codex. It does not introduce a new interface.

The architectural change it enables is a shift from what Gutmans called an "instructional copilot experience" to intent-driven engineering. Instead of writing a Spark pipeline to move data from source A to destination B, a data engineer describes the result (a cleaned dataset ready for model training, or a transformation that applies a governance rule) and the agent chooses whether to use BigQuery, the Lightning Engine for Apache Spark, or Spanner to execute it, then generates production-ready code.
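The difference between instructing a tool and declaring an intent can be illustrated with a toy sketch. The engineer supplies only a description of the outcome plus a few properties, and a selector picks the engine. Everything here is hypothetical: the `Intent` fields, the `choose_engine` function, and the selection rules are invented for illustration and say nothing about how Google's agent actually decides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    """A declared outcome, not a pipeline. All fields are illustrative."""
    description: str
    needs_transactions: bool = False  # row-level, low-latency reads and writes
    needs_custom_code: bool = False   # e.g. arbitrary Python feature logic


def choose_engine(intent: Intent) -> str:
    """Pick an execution engine using made-up rules of thumb."""
    if intent.needs_transactions:
        return "spanner"
    if intent.needs_custom_code:
        return "spark"
    # Default assumption: plain SQL transformations go to the warehouse.
    return "bigquery"


if __name__ == "__main__":
    training_set = Intent(
        description="cleaned dataset ready for model training",
        needs_custom_code=True,
    )
    governance = Intent(description="transformation that applies a governance rule")
    print(choose_engine(training_set))
    print(choose_engine(governance))
```

The point of the sketch is the shape of the input: the caller never names an engine or writes a job, which is what leaves room for an agent to make that choice and generate the code.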

"Customers are worried about building their own pipelines," Gutmans said. "They are more in a review type mode than actually writing code."

Where Google and its rivals differ

The premise that agents need semantic context, not just data access, is shared across the market.

Databricks has Unity Catalog, which provides governance and a semantic layer across its lakehouse. Snowflake offers Cortex, its AI and semantic layer. Microsoft Fabric includes a semantic model layer built for business intelligence and, increasingly, agent grounding.

The dispute is not over whether semantics matter; everyone agrees that they do. It is over who builds and maintains them.

"Our goal is just to get all the semantics you can," Gutmans explained, noting that Google will federate with third-party semantic models rather than requiring customers to start over.

Google is also positioning openness as a differentiator, with bidirectional federation with Databricks Unity Catalog and Snowflake Polaris through the open Iceberg REST Catalog standard.

What this means for enterprises

Google’s argument, echoed across the data infrastructure market, is that enterprises are lagging behind on three fronts:

Semantic context is becoming infrastructure. If your data catalog is still manually curated, it won’t scale to agent workloads, and Gutmans argues that the gap will only widen as agent query volumes grow.

Cross-cloud egress costs are a hidden tax on agentic AI. Storage-based federation through open Iceberg standards is emerging as the architectural answer across Google, Databricks, and Snowflake. Enterprises locked into a proprietary federation approach should stress-test those costs against agent-scale query volumes.

The pipeline-writing era is coming to an end, Gutmans argues. Data engineers who move toward results-based orchestration now will get a significant head start.
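The stress test suggested in the second point is simple arithmetic, sketched below. All inputs are placeholders, not quoted prices or measured workloads: the per-gigabyte rate, the query counts, and the data transferred per query are assumptions that each team would replace with its own contract terms and telemetry.

```python
def monthly_egress_cost(queries_per_day, gb_per_query, usd_per_gb, days=30):
    """Estimate monthly cross-cloud transfer cost in USD.

    Assumes every query moves `gb_per_query` gigabytes across clouds and that
    transfer is billed at a flat `usd_per_gb` rate (a simplification).
    """
    return queries_per_day * gb_per_query * usd_per_gb * days


if __name__ == "__main__":
    RATE = 0.09          # hypothetical USD per GB, for illustration only
    GB_PER_QUERY = 0.5   # hypothetical average transfer per query

    human_scale = monthly_egress_cost(200, GB_PER_QUERY, RATE)
    agent_scale = monthly_egress_cost(50_000, GB_PER_QUERY, RATE)

    print(f"human scale: ${human_scale:,.0f} per month")
    print(f"agent scale: ${agent_scale:,.0f} per month")
    print(f"multiplier:  {agent_scale / human_scale:,.0f}x")
```

Because the cost is linear in query volume, a workload that grows by a factor of 250 grows the bill by the same factor. That is the reason to run the numbers before agents, rather than analysts, are issuing most of the queries.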
