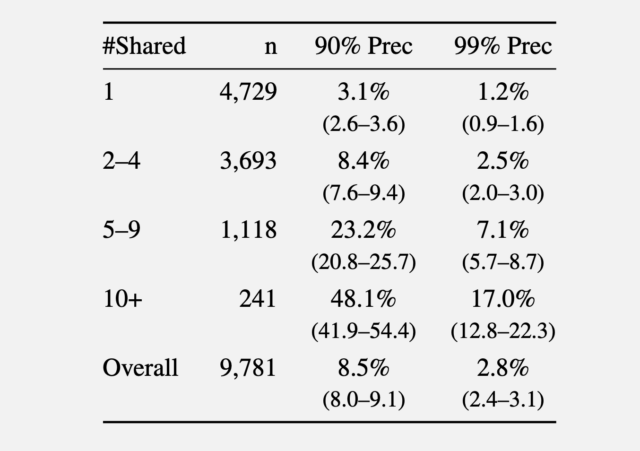

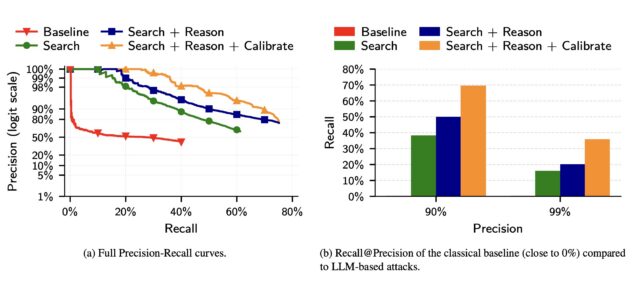

Recall at various precision thresholds.

In the third experiment, the researchers took 5,000 users from the Netflix dataset and added another 5,000 “distractor” identities of people who did not appear in the results, yielding a pool of 10,000 candidate profiles. They then added 5,000 query distractors: users who appeared only in the query set and had no true match in the candidate pool.

Compared against a classical baseline that mimics the Netflix Prize attack, the LLM-based deanonymization performed much better.

The researchers wrote:

(a) The precision of the classical attack drops very rapidly, which explains its low recall. In contrast, the precision of LLM-based attacks degrades more gracefully as the attacker makes more guesses. (b) The classical attack fails almost completely even at moderately low precision. In contrast, even the simplest LLM attack (Search) achieves non-trivial recall at low precision, and extending it with Reason and Calibrate steps doubles the recall @99% precision.
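To make the "recall @99% precision" metric concrete, here is a minimal Python sketch of how such a number could be computed. It is an illustration, not the researchers' evaluation code: the function name `recall_at_precision`, the `(confidence, is_correct)` input format, and the toy data are all assumptions made for this example.

```python
from typing import List, Tuple


def recall_at_precision(
    guesses: List[Tuple[float, bool]],
    total_matchable: int,
    min_precision: float,
) -> float:
    """Highest recall reachable while precision stays >= min_precision.

    guesses: one (confidence, is_correct) pair per guess the attacker could
        make. A guess about a query distractor (a user with no true match in
        the candidate pool) is always incorrect, so it can only hurt precision.
    total_matchable: number of query users who do have a true match.
    """
    # The attacker commits to guesses in order of decreasing confidence.
    ranked = sorted(guesses, key=lambda g: g[0], reverse=True)
    best_recall = 0.0
    correct = 0
    for made, (_, is_correct) in enumerate(ranked, start=1):
        correct += int(is_correct)
        precision = correct / made
        if precision >= min_precision:
            best_recall = max(best_recall, correct / total_matchable)
    return best_recall


# Hypothetical toy data: five guesses, four users who are actually matchable.
guesses = [(0.99, True), (0.95, True), (0.90, False), (0.80, True), (0.40, False)]
print(recall_at_precision(guesses, total_matchable=4, min_precision=0.99))  # 0.5
print(recall_at_precision(guesses, total_matchable=4, min_precision=0.70))  # 0.75
```

In this toy data, demanding 99 percent precision lets the attacker keep only the first two guesses, so recall is 0.5. Relaxing the threshold to 70 percent admits a wrong guess but also a third correct one, raising recall to 0.75.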

The results show that LLMs, although still plagued by false positives and other weaknesses, are rapidly outperforming more traditional, resource-intensive methods for identifying online users.

The researchers proposed mitigations including imposing rate limits on API access to user data, detecting automated scraping, and requiring platforms to restrict bulk data export. LLM providers can also monitor for misuse of their models in deanonymization attacks and build guardrails that make models refuse deanonymization requests.
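As a rough illustration of the first mitigation, a per-client rate limit is often implemented as a token bucket. The sketch below is a generic example and is not drawn from the researchers' work; the `TokenBucket` class, its fields, and the chosen limits are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    """Hypothetical per-client limiter for an API that serves user data."""

    capacity: float           # maximum burst size, in requests
    refill_per_second: float  # sustained request rate allowed
    tokens: float = 0.0       # tokens currently available
    last_seen: float = 0.0    # timestamp of the previous request, in seconds

    def allow(self, now: float, cost: float = 1.0) -> bool:
        """Return True if a request arriving at time `now` may proceed."""
        # Refill in proportion to elapsed time, capped at the bucket capacity.
        elapsed = max(0.0, now - self.last_seen)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_seen = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


# A client starts with a full bucket of 3 requests, refilled at 1 per second.
bucket = TokenBucket(capacity=3, refill_per_second=1.0, tokens=3.0)
print([bucket.allow(now=0.0) for _ in range(4)])  # [True, True, True, False]
print(bucket.allow(now=2.0))                      # True
```

Passing the timestamp in explicitly keeps the example deterministic. A real service would read a monotonic clock and keep one bucket per API key or account.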

Of course, another option is for people to dramatically curb their social media use, or at the very least, regularly delete posts after a set time frame.

If LLMs get better at deanonymizing people, the researchers warn, governments could use the techniques to expose online critics, corporations could assemble customer profiles for “ultra-targeted advertising,” and attackers could profile targets en masse to launch highly personalized social engineering scams.

The researchers warned, “Recent advances in LLM capabilities have made it clear that there is an urgent need to rethink various aspects of computer security in view of LLM-driven offensive cyber capabilities. Our work shows that the same is true for privacy.”
