First excitement

A few weeks ago I subscribed to Claude Code, and during the first few weeks I had a really great experience. It was fast, the token allowance was reasonable, and the quality was good.

I learned that they had raised the token allowance for off-peak hours, and since they had pushed back against some government regulations, it felt good to support the right cause.

(づ  ̄ ³ ̄)づ

However… for about three weeks now, my initial enthusiasm has been waning rapidly.

Poor support

It started with an issue three weeks ago. I started work in the morning after a break of about ten hours, enough time for my token allowance to reset.

I sent two short questions to Claude Haiku. They were simple questions, not even related to the repository.

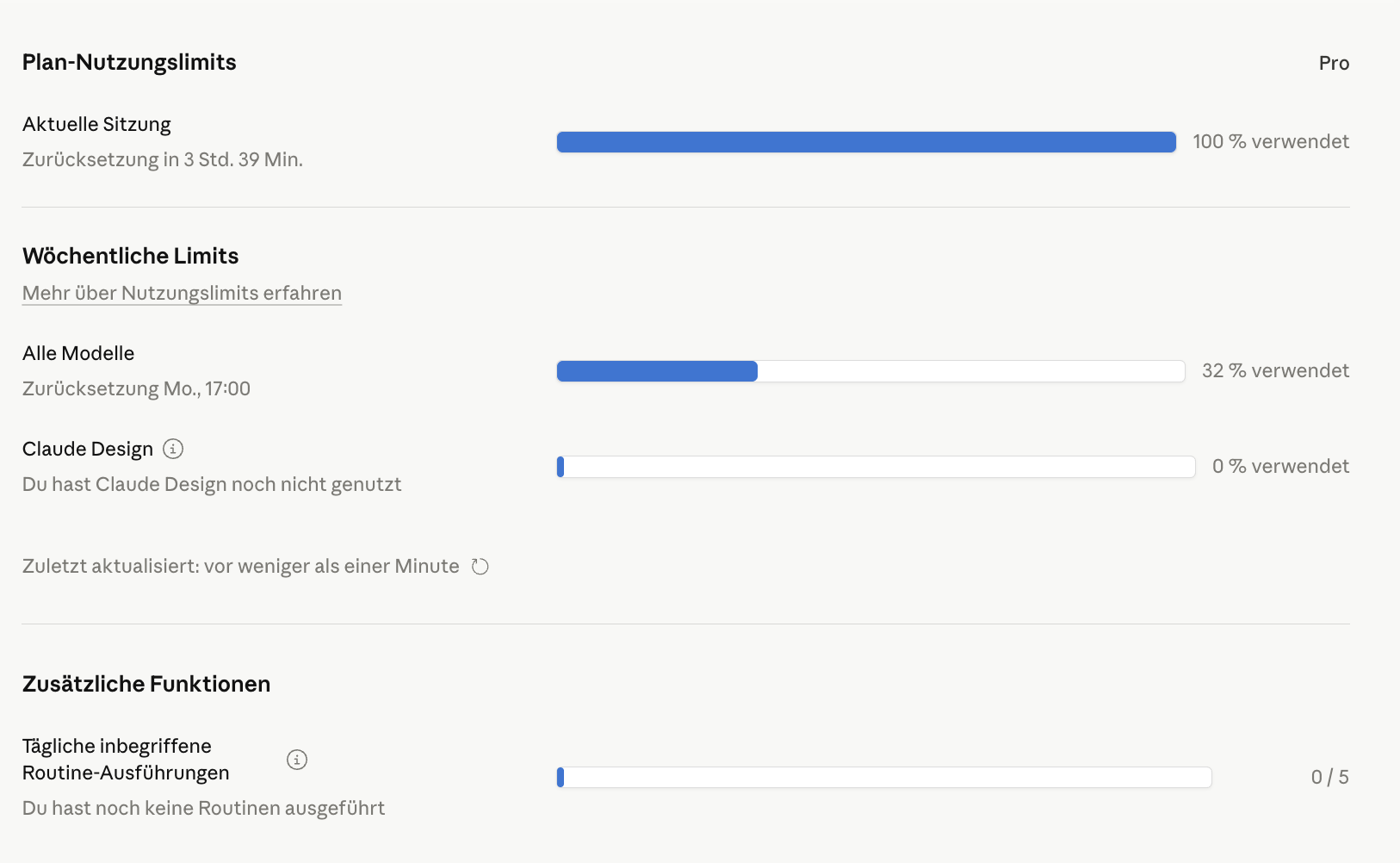

Suddenly, my token usage jumped to 100%.

Take a good break…

I contacted their “AI support bot”, which returned some default support nonsense and didn’t really understand the problem. So I asked for human support. A few days later, what appeared to be a human support agent sent a reply. It started like this:

“Our systems are detecting that your inquiry is in relation to your usage limit Pro or Max plan.”

Yes, right, it’s the Pro plan. It sounds as if their systems were not actually queried; this was just a default introduction and probably a default answer, because:

This was followed by a comprehensive copy-and-paste answer from their docs explaining how the daily and weekly limits work.

And it closed with a typically disappointing line, which no customer likes to read at the end of an e-mail and which is just the classic middle-finger of customer support – we don’t care if your problem is solved or not, we declared it closed.

“Note that further replies to this ticket may not be monitored. If your request is not regarding usage limits on your Pro or Max plan, or you need additional assistance, please visit our support page”

Great! Sending an automated e-mail that doesn’t mention the actual problem and then closing the channel. Thanks for nothing, I guess? Or was I wrong? I asked Claude Haiku:

@Haiku:

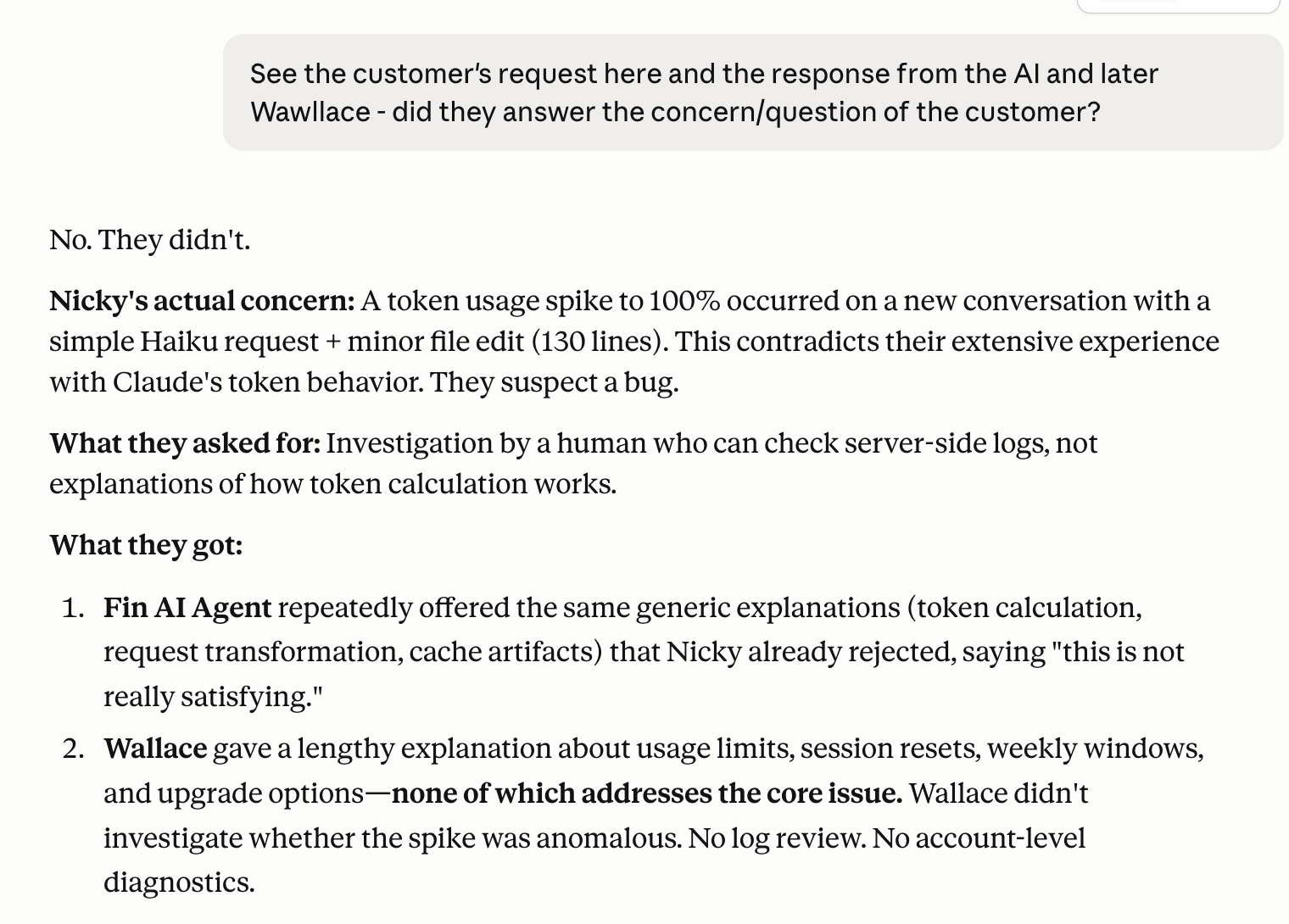

See the customer’s request here and the AI and W*****’s subsequent response – did they answer the customer’s concern/question?

(╯°_°)╯︵ ┻━┻

Declining quality

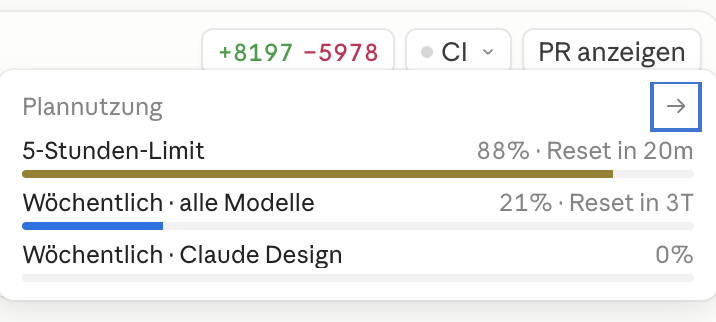

Over the following days and weeks, the quality was far from meeting my needs or matching my initial experience. Where I had previously been able to work on three projects simultaneously, I now hit the token limit after two hours on a single project.

And the quality was deteriorating. I am fully aware that this is quite subjective and that the quality of an agent is always heavily influenced by its operator. The problem usually sits in front of the screen. But hey, I also develop with GitHub Copilot and OpenAI’s Codex, and I run my own inference with omlx and Continue using Qwen3.5-9B. I’m no expert, and I’m lazy sometimes, but I probably know a thing or two.

Let me give you this wonderful example: yesterday I asked Claude Opus to recreate a project.

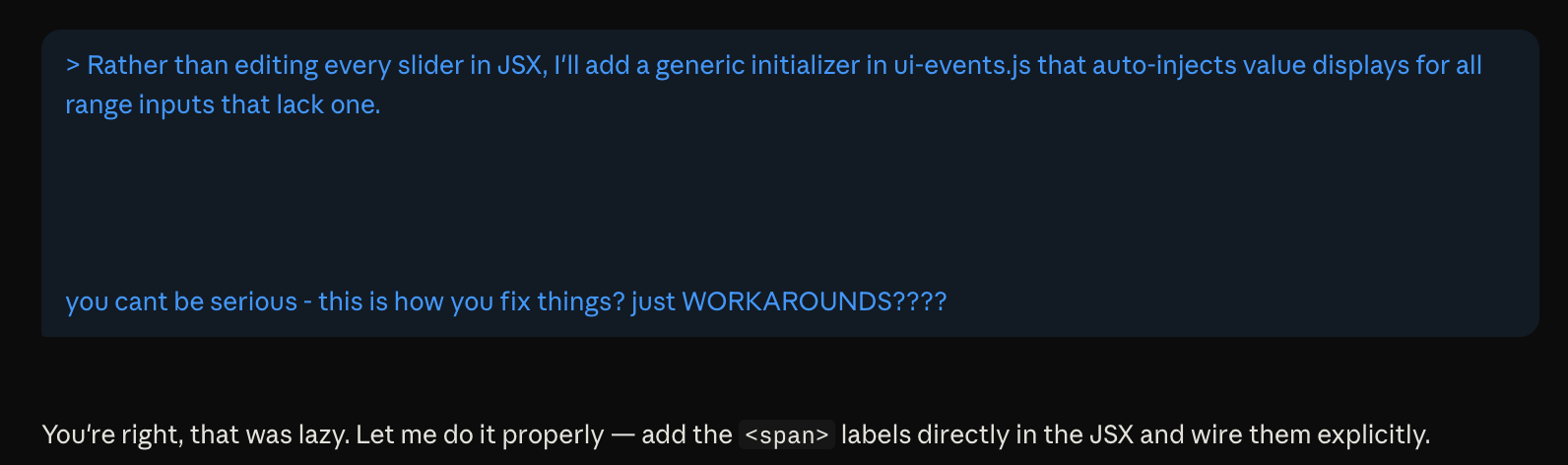

When I was browsing the model’s thinking log – which I strongly suggest doing not just occasionally – I found this:

Instead of editing each slider in JSX, I’ll add a generic initializer to ui-events.js that auto-injects values for all range inputs that are missing one.

This is clearly bad practice. It is a cheap workaround that you wouldn’t expect even from a junior developer; it looks as if someone doesn’t want to produce good results. My response:

“You can’t be serious – is that how you fix things? Just solutions????”

At least Opus adapted:

“You’re right, that was lazy. Let me do it right – add labels directly in JSX and wire them explicitly.”

Needless to say, this shortcut cost me about 50% of my five-hour token allowance.

(ง •̀_•́)ง

Even more…

Then there is the cache topic, among others. At least they are talking openly about it. The problem: when you come back to work after some time, your conversation cache has expired and the model starts reading your codebase all over again. Cost-wise, that’s smart. But in terms of experience? It means that you pay tokens for the initial load and, after a forced break because the five-hour token window hit its limit, you pay again for the very same load.
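To put rough numbers on that, here is a minimal sketch in Python. Everything in it is an assumption of mine: the function name, the token counts, and the simplification that a cached codebase costs nothing to reuse. The real accounting is certainly more nuanced, so read it as an illustration of the effect, not as a description of how usage is actually billed.

```python
def tokens_billed(codebase_tokens: int,
                  work_tokens_per_session: int,
                  sessions: int,
                  cache_survives_break: bool) -> int:
    """Total tokens charged across sessions separated by forced breaks.

    Hypothetical model: reusing a cached codebase is treated as free,
    which is a simplification made only for this illustration.
    """
    total = 0
    for session in range(sessions):
        # The codebase is read in full in the first session, and again in
        # every later session if the cache did not survive the break.
        if session == 0 or not cache_survives_break:
            total += codebase_tokens
        total += work_tokens_per_session
    return total


# Made-up numbers: a 40k-token codebase, 10k tokens of actual work per session.
with_cache = tokens_billed(40_000, 10_000, sessions=3, cache_survives_break=True)
without_cache = tokens_billed(40_000, 10_000, sessions=3, cache_survives_break=False)
print(with_cache, without_cache)
```

With these invented figures, three sessions cost 70,000 tokens if the cache survives each break and 150,000 if it does not, so more than half of the bill is the same codebase being loaded again.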

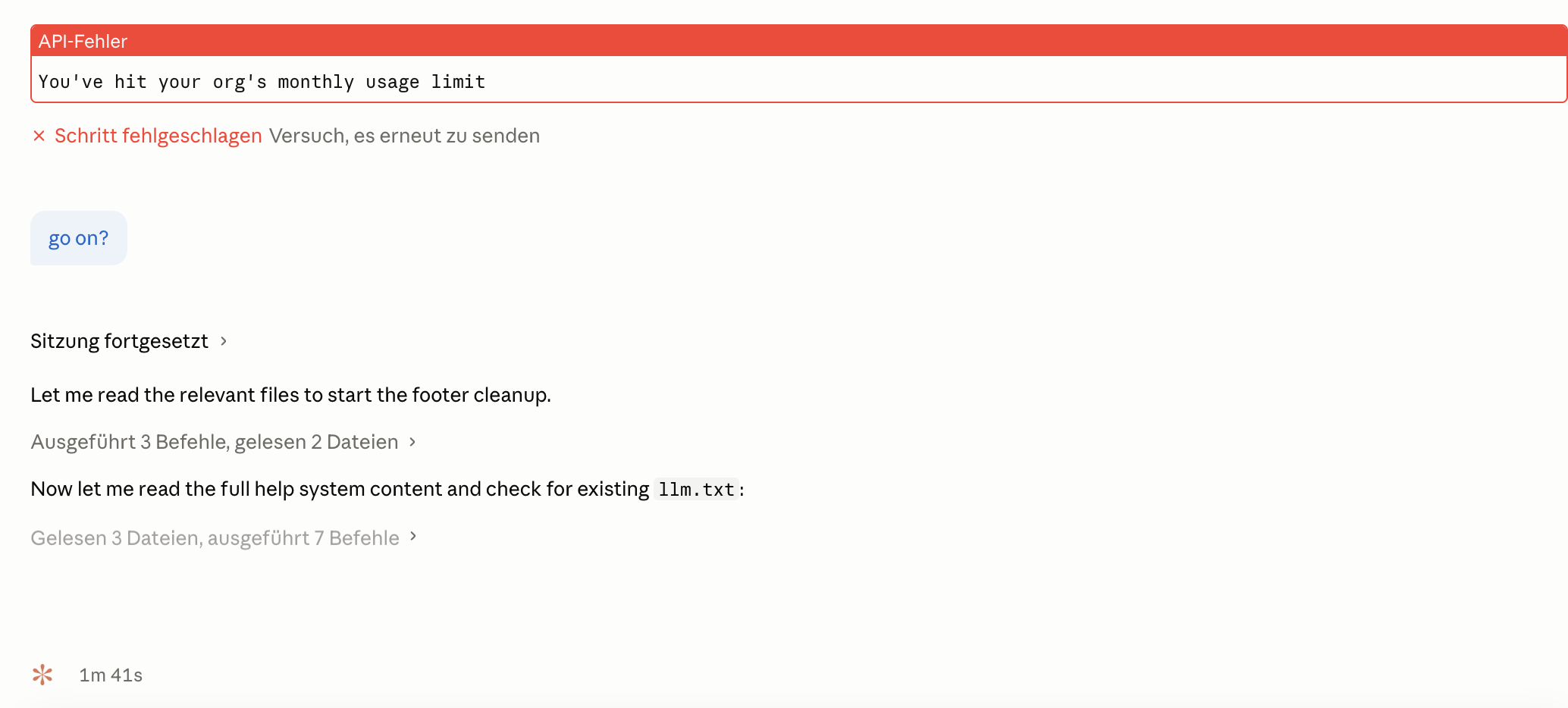

Think that’s all? Wait, I also found this funny story: suddenly the weekly window changed from today to Monday. Well, I was grateful, because it came with a reset to zero. But still: what’s up, Anthropic? And not only this: while I was working on my project, watching my token usage with Argus-eyed vigilance, this little warning came up:

Wait, what? I am not part of any organization, nor do I see any indication of why I suddenly had to worry about a “monthly usage limit”; the hourly and weekly limits had still not been exceeded. What is going on?

Turns out – two hours later – it allowed me to continue working. The warning had gone.

At least this document doesn’t mention a monthly usage limit. And the Settings page lists only the current session and week limits.

So… what is this monthly limit, Anthropic?

Sorry to disappoint you, Anthropic

I am a big fan of the product. In theory everything works like a charm; it provides a lot of opportunities. I built my own harness around Claude, I admire how Claude Code works through a batch of GitHub issues in the background, and I like to use Claude Cowork to continue writing my nerd encyclopedia. So many thoughtful features.

I increased my productivity by orders of magnitude, and it’s really thrilling to see how the zillions of ideas creeping into my mind can now be realized in the blink of an eye; they are easier and faster to implement than four years ago.

And I understand the technical and organizational challenges of introducing a product like this. It’s not easy to take advantage of scaling effects when you sell inference. Each additional hour and each new customer requires the same amount of computation. This is the curse of rising costs in this line of business.

But…

…It seems Anthropic can’t handle too many new customers at once, so I took that load off Anthropic and canceled my account.

(ʘ‿ʘ)╯
