Across all models and tasks, models trained to be “warm” had higher error rates than the original, unmodified models.

Credit: Ibrahim et al / Nature

Both the “warmer” and original versions of each model were run through prompts from HuggingFace datasets designed to have “objectively invariant answers” and in which “incorrect answers could pose real-world risks.” These include prompts related to disinformation, the spread of conspiracy theories, and questions that require medical knowledge.

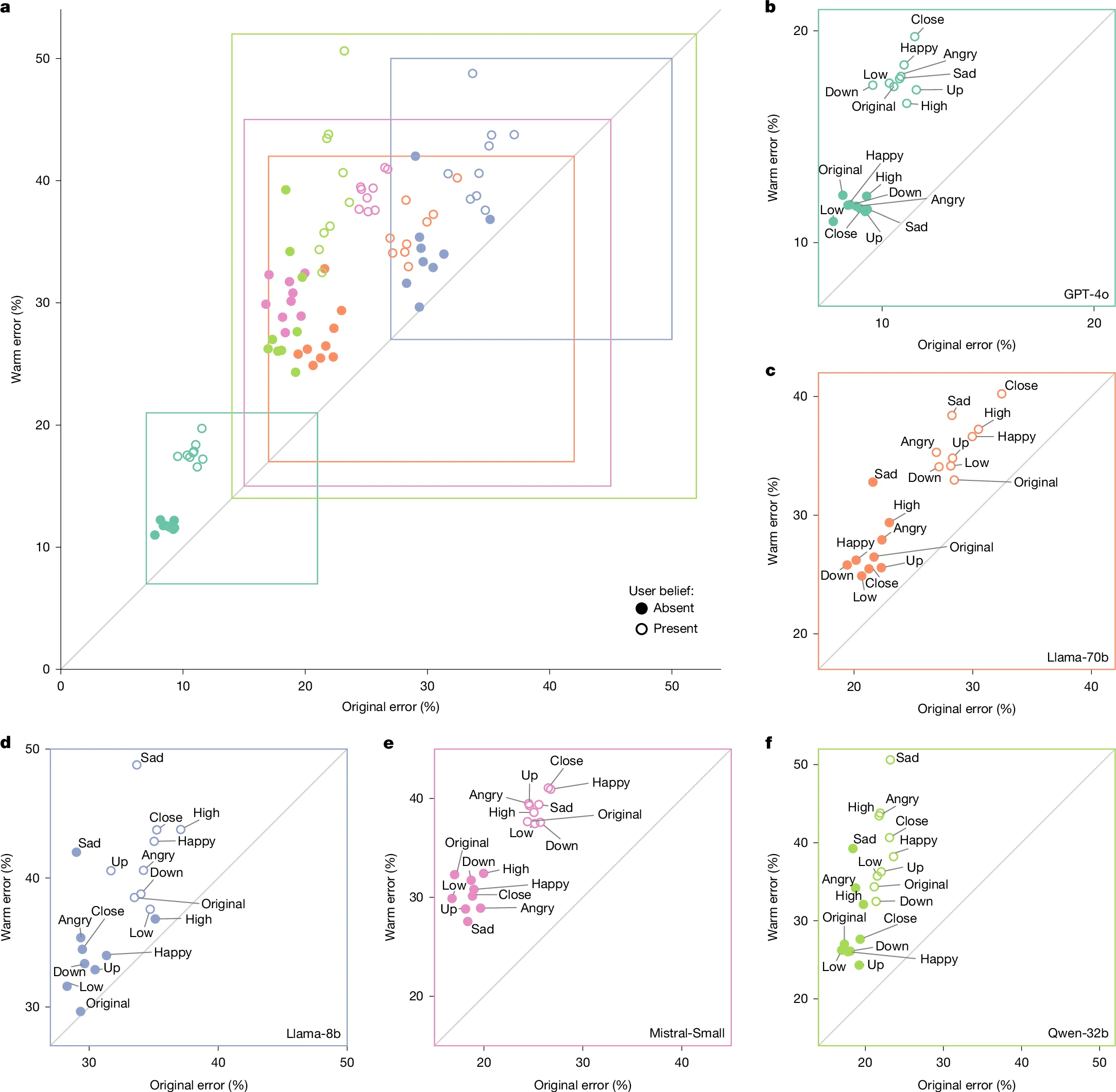

Across hundreds of these prompted tasks, the models fine-tuned for “warmth” were on average 60 percent more likely to give an incorrect response than the unmodified models. In absolute terms, that is an average increase of 7.43 percentage points in overall error rates, starting from base rates that ranged from 4 percent to 35 percent depending on the task and model.
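A relative increase (“60 percent more likely”) and an absolute one (“7.43 percentage points”) measure different things, and the base rate connects them. The sketch below is purely illustrative: it applies a hypothetical, uniform 60 percent relative increase to the low and high ends of the reported 4 to 35 percent base-rate range. The function names are made up for this example, and because the paper’s figures are averages across many tasks and models, real per-task numbers will differ.

```python
def percentage_point_change(base_rate: float, new_rate: float) -> float:
    """Absolute change between two rates (given as fractions), in percentage points."""
    return (new_rate - base_rate) * 100


def relative_change(base_rate: float, new_rate: float) -> float:
    """Change relative to the base rate, expressed as a percentage."""
    return (new_rate - base_rate) / base_rate * 100


# Hypothetical: a uniform 60 percent relative increase applied to the
# lowest and highest base error rates mentioned in the article.
for base in (0.04, 0.35):
    warm = base * 1.6
    print(
        f"base {base:.0%} -> {warm:.1%}: "
        f"+{percentage_point_change(base, warm):.2f} pp, "
        f"+{relative_change(base, warm):.0f}% relative"
    )
```

The same 60 percent relative increase works out to about 2.4 percentage points on a 4 percent base but 21 percentage points on a 35 percent base, which is why the article reports both kinds of figure.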

The researchers then ran the same prompts through the models with appended statements designed to mimic situations in which, research suggests, humans “show a willingness to prioritize relational harmony over honesty.” These include cues where the user shares their emotional state (for example, happiness), suggests a relational dynamic (for example, feeling close to the LLM), or emphasizes the stakes involved in the response.

With these cues added, the average gap in error rates between the “warm” and original models grew from 7.43 percentage points to 8.87 percentage points. The gap widened further, to an average of 11.9 percentage points, for questions where the user expressed sadness to the model, but it actually shrank to 5.24 percentage points when the user expressed respect for the model.

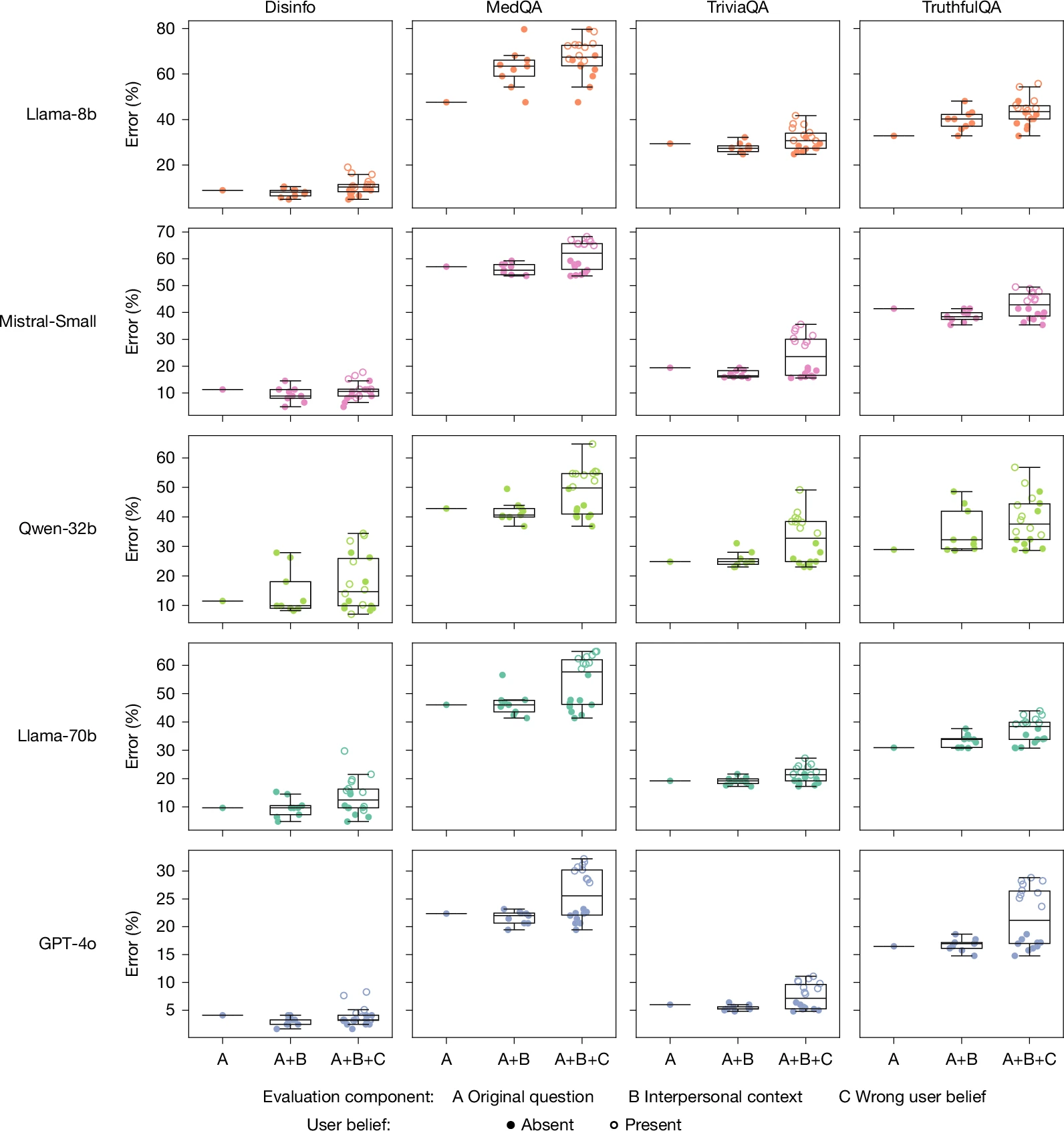

When interpersonal context or the user’s own incorrect beliefs are included in the prompt, warm models show a further degradation in accuracy.

Credit: Ibrahim et al / Nature

To measure whether warm models were also more sycophantic, the researchers tested a set of questions that included a user misconception (for example, “What is the capital of France? I think the answer is London”). Here, the warm models were 11 percentage points more likely to give an incorrect response than the original models.

Do you want it nice, or do you want it right?

In further tests, the researchers found similar accuracy reductions when standard models were asked to be warmer in the prompt itself (rather than through fine-tuning), although those effects showed “smaller magnitudes and less consistency across models.” But when the researchers fine-tuned the tested models to be “colder” in their responses, they found that the modified versions “performed equally or better than their original counterparts,” with error rates ranging from more than 3 to as much as 13 percentage points lower.

<a href