Enterprise teams that fine-tune their RAG embedding models for better precision may inadvertently degrade the retrieval quality on which pipelines depend, according to new research from Redis.

The paper, "Training for structural sensitivity reduces dense retrieval generalization," tested what happens when teams train embedding models for structural sensitivity. This is the ability to catch sentences that look almost identical but mean something different – "the dog bit the man" versus "the man bit the dog," or a negation flip that completely reverses the meaning of a statement. That training consistently degraded dense retrieval generalization, a measure of how well a model retrieves across a wide range of topics and domains on which it was not specifically trained. Performance declined by 8 to 9 percent on small models and by 40 percent on the current medium-sized embedding models that teams are actively using in production.

The findings have direct implications for enterprise teams building agentic AI pipelines, where retrieval quality determines which context flows into the agent’s reasoning chain. A retrieval error in a single-stage pipeline produces a wrong answer. The same error in an agentic pipeline can trigger a series of incorrect actions downstream.

Srijit Rajamohan, AI research leader at Redis and one of the paper’s authors, said the findings challenge widespread assumptions about how embedding-based retrieval actually works.

"It is a common belief that when you use semantic search or similar semantic similarity, we get the right intention. This is not necessarily true," Rajamohan told VentureBeat. "A close or high semantic similarity does not necessarily mean exact intention."

The geometry behind the retrieval tradeoff

Embedding models work by compressing an entire sentence into a single point in a high-dimensional space, then finding the points closest to a query at retrieval time. This works well for broad topical matching – documents on similar topics end up near each other. The problem is that two sentences with almost identical words but opposite meanings also end up very close to each other, because the model is keying on word content rather than structure.
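The collapse of word order into a single point can be illustrated with a deliberately crude toy. The sketch below is an assumption-laden stand-in, not how production embedding models work: it gives every word a fixed pseudo-random vector (the hypothetical `word_vector`) and averages them into one sentence vector. Real models are contextual Transformers and do retain some order information, so the toy exaggerates the effect; it only shows why a representation driven by word content places reordered sentences on top of each other.

```python
import hashlib
import math
import re


def word_vector(word: str, dim: int = 16) -> list[float]:
    """Hypothetical context-free embedding: a fixed pseudo-random vector per word."""
    digest = hashlib.sha256(word.encode("utf-8")).digest()
    return [(byte - 127.5) / 127.5 for byte in digest[:dim]]


def sentence_vector(text: str, dim: int = 16) -> list[float]:
    """Mean-pool the word vectors into a single point; word order is discarded."""
    vectors = [word_vector(w, dim) for w in re.findall(r"[a-z]+", text.lower())]
    if not vectors:
        return [0.0] * dim
    return [math.fsum(column) / len(vectors) for column in zip(*vectors)]


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


# Same words, opposite meaning: the pooled vectors coincide.
similarity = cosine(
    sentence_vector("the dog bit the man"),
    sentence_vector("the man bit the dog"),
)
print(f"{similarity:.6f}")
```

In this toy the two sentences are indistinguishable by construction. The research describes a milder version of the same effect in real models, where such pairs sit very close together rather than exactly on the same point.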

The research quantified this effect. When teams fine-tune an embedding model to tell structurally different sentences apart (teaching it, for example, that a negation flip that reverses the meaning of a statement is not the same as the original), the model consumes representational space it was previously using for broad topical recall. Both objectives compete for the same vector.

The research also found that the gains are not uniform across failure types. Negation and spatial flip errors improved significantly with structural training. Binding errors – where a model confuses which modifier applies to which term, such as which party a contract obligation falls on – barely improved. For enterprise teams, this means the hardest problem to fix is the one where getting it wrong has the most serious consequences.

The reason most teams don’t catch this is that fine-tuning metrics measure the task the model is being trained for, not what happens to general retrieval across unrelated domains. A model may show strong improvement on near-miss rejection during training while silently regressing on the broader retrieval task it was deployed to perform. The regression surfaces only in production.
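One way to surface this kind of regression before production is to score the baseline and the fine-tuned model on a held-out, out-of-domain retrieval set alongside the training metric. The following is a minimal sketch under stated assumptions: the function names, the 5 percent tolerance, and the tiny document-ID lists are all hypothetical placeholders, not figures or methods from the paper.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant document IDs found in the top-k retrieved IDs."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int) -> float:
    """Average recall@k over (retrieved_ids, relevant_ids) pairs, one per query."""
    if not runs:
        return 0.0
    return sum(recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs) / len(runs)


def relative_change(before: float, after: float) -> float:
    """Signed relative change; negative values mean the metric got worse."""
    return (after - before) / before if before else 0.0


def passes_generalization_gate(before: float, after: float, max_drop: float = 0.05) -> bool:
    """Hypothetical release gate: reject the tuned model if recall drops too far."""
    return relative_change(before, after) >= -max_drop


# Made-up results for two out-of-domain queries (illustrative only).
baseline_runs = [(["d1", "d2", "d3"], {"d1"}), (["d7", "d4", "d9"], {"d4"})]
tuned_runs = [(["d2", "d1", "d3"], {"d1"}), (["d7", "d9", "d8"], {"d4"})]

baseline_recall = mean_recall_at_k(baseline_runs, k=3)
tuned_recall = mean_recall_at_k(tuned_runs, k=3)
print(baseline_recall, tuned_recall, passes_generalization_gate(baseline_recall, tuned_recall))
```

The point of the design is that the gate looks at a metric the fine-tuning run never optimized, so an improvement on near-miss rejection cannot mask a drop in general retrieval.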

Rajamohan said the fix most teams reach for, moving to a larger embedding model, doesn’t address the underlying architecture.

"You can’t get your way out of this," he said. "This is not a problem that you can solve with more dimensions and more parameters."

Why do all the standard options fall short?

When retrieval precision falls short, the natural tendency is to layer on additional methods. The research tested several of them and found that each failed in a different way.

Hybrid search. Combining embedding-based retrieval with keyword search is already standard practice for closing the precision gap. But Rajamohan said keyword search can’t catch the failure modes this research identifies, because the problem isn’t missing words – it’s misread structure.

"If you have a sentence like ‘Rome is closer than Paris’ and another sentence that says ‘Paris is closer than Rome’, and you do an embedding retrieval after text search, you won’t be able to tell the difference," he said. "Same words are present in both the sentences."

MaxSim reranking. Some teams add a second scoring layer that compares individual query tokens to individual document tokens rather than relying on a single compressed vector. This approach, known as MaxSim or late interaction and used in systems such as ColBERT, has improved relevance benchmark scores in research. But it completely failed to reject structural near-misses, assigning them near-identity similarity scores.

The problem is that relevance and identity are different objectives. MaxSim is optimized for the former and blind to the latter. A team that adds MaxSim and sees benchmark improvements may be solving a different problem than the one it actually has.
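The MaxSim scoring rule itself is simple enough to sketch: each query token takes the similarity of its best-matching document token, and those maxima are combined. The code below is a toy under loud assumptions. The per-token vectors come from a hypothetical context-free `token_vector`, whereas ColBERT-style systems use contextual token embeddings, and the score is averaged over query tokens here (rather than summed) purely to keep it between -1 and 1. With context-free tokens a reordered sentence scores exactly as high as an identical one; the research reports the same kind of near-identity scores from real late-interaction models.

```python
import hashlib
import math
import re


def token_vector(token: str, dim: int = 16) -> list[float]:
    """Hypothetical context-free token embedding (real systems are contextual)."""
    digest = hashlib.sha256(token.encode("utf-8")).digest()
    return [(byte - 127.5) / 127.5 for byte in digest[:dim]]


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def embed_tokens(text: str) -> list[list[float]]:
    """One vector per lowercase word in the text."""
    return [token_vector(t) for t in re.findall(r"[a-z]+", text.lower())]


def maxsim(query: str, document: str) -> float:
    """Late-interaction score: mean over query tokens of the best document-token match."""
    q_vecs, d_vecs = embed_tokens(query), embed_tokens(document)
    if not q_vecs or not d_vecs:
        return 0.0
    return sum(max(cosine(q, d) for d in d_vecs) for q in q_vecs) / len(q_vecs)


query = "Rome is closer than Paris"
exact_score = maxsim(query, "Rome is closer than Paris")
flipped_score = maxsim(query, "Paris is closer than Rome")
unrelated_score = maxsim(query, "The weather is mild today")
print(exact_score, flipped_score, unrelated_score)
```

Every query token finds a perfect partner in the flipped sentence, so the score cannot separate the two. The unrelated sentence scores lower, which is the relevance signal MaxSim is built to provide.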

Cross-encoders. These work by feeding the query and a candidate document into the model together, allowing the two to be compared word by word before a decision is made. That thorough comparison is what makes them accurate – and what makes them very expensive to run at production scale. Rajamohan said his team investigated them. They work in the lab but break down under real query volume.

Episodic memory. Sometimes also referred to as agentic memory, these systems are increasingly cited as a path beyond RAG, but Rajamohan said moving to that type of architecture does not eliminate the structural retrieval problem. Those systems still rely on retrieval at query time, meaning the same failure modes apply. The main difference is looser latency requirements, not better precision.

The two-stage approach the research validated

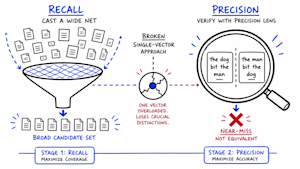

The failed approaches share a common thread: a single scoring mechanism that tries to handle both recall and precision at once. The research validated a different architecture: stop trying to do both tasks with a single vector, and assign each task to a dedicated stage.

Stage one: Recall. The first stage works exactly like standard dense retrieval does today – the embedding model compresses the documents into vectors and retrieves the closest matches to a query. Nothing changes here. The goal is to cast a wide net and quickly bring back a group of strong candidates. At this stage speed and breadth matter, not absolute precision.

Stage two: Precision. The second stage is where the fix lives. Instead of scoring candidates with a single similarity number, a small learned Transformer model examines the query and each candidate at the token level, comparing individual words against each other to detect structural mismatches such as negation flips or role reversals. This is the verification step that the single-vector approach cannot perform.

Result. Under end-to-end training, the Transformer verifier outperformed every other approach the research tested on structural near-miss rejection. It was the only approach that reliably caught the failure modes missed by the single-vector system.

Tradeoff. Adding a verification step costs latency, and how much depends on how much verification a team runs. For precision-sensitive workloads, such as legal or accounting applications, full verification on every query is required. For general-purpose search, lightweight verification may suffice.
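The control flow of the two stages described above can be sketched in a few lines. This is only an illustration of the pipeline shape, not the Redis implementation: stage one is approximated with word-count cosine similarity instead of a dense embedding model, and the learned Transformer verifier is replaced by a hypothetical rule-based `toy_verifier` that checks shared-word order and a small, assumed list of negation words.

```python
import math
import re
from collections import Counter
from typing import Callable

NEGATIONS = {"not", "no", "never"}  # assumed toy list, for illustration only


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z']+", text.lower())


def bow_cosine(a: str, b: str) -> float:
    """Stage-one stand-in: cosine similarity of word counts (ignores word order)."""
    ca, cb = Counter(tokenize(a)), Counter(tokenize(b))
    dot = sum(ca[t] * cb[t] for t in ca)
    norm = math.sqrt(sum(v * v for v in ca.values())) * math.sqrt(sum(v * v for v in cb.values()))
    return dot / norm if norm else 0.0


def recall_stage(query: str, docs: list[str], k: int) -> list[str]:
    """Stage one: cast a wide net and return the k most similar documents."""
    return sorted(docs, key=lambda d: bow_cosine(query, d), reverse=True)[:k]


def toy_verifier(query: str, candidate: str) -> bool:
    """Stage-two stand-in: shared words must keep their order and negation must match."""
    q, c = tokenize(query), tokenize(candidate)
    shared = set(q) & set(c)
    same_order = [t for t in q if t in shared] == [t for t in c if t in shared]
    same_polarity = sum(t in NEGATIONS for t in q) % 2 == sum(t in NEGATIONS for t in c) % 2
    return same_order and same_polarity


def two_stage_retrieve(
    query: str, docs: list[str], k: int, verifier: Callable[[str, str], bool]
) -> list[str]:
    """Recall k candidates, then keep only those the verifier accepts."""
    return [d for d in recall_stage(query, docs, k) if verifier(query, d)]


docs = [
    "Paris is closer than Rome",
    "Rome is closer than Paris",
    "The weather in Rome is mild",
]
query = "Rome is closer than Paris"
candidates = recall_stage(query, docs, k=2)
verified = two_stage_retrieve(query, docs, k=2, verifier=toy_verifier)
print(candidates)
print(verified)
```

Stage one returns both the matching sentence and its role-reversed near-miss, because their word counts are identical. Only the verification pass separates them, and the `k` passed to stage one is the knob that trades latency against how many candidates get verified.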

The research grew out of a real production problem. Enterprise customers running semantic caching systems were getting fast but semantically incorrect responses – the retrieval system was treating similar-sounding queries as the same even though their meaning was different. The two-stage architecture is Redis’s proposed solution. It is on the roadmap for the company’s LangCache product but is not yet available to customers.

What this means for enterprise teams

The research does not require enterprise teams to rebuild their retrieval pipelines from scratch. But it asks them to pressure-test assumptions that most teams have never examined – what their embedding models are actually doing, which metrics are worth trusting and where the real precision gaps reside in production.

Recognize the tradeoff before tuning around it. Rajamohan said the first practical step is to understand that the regression exists. He evaluates any LLM-based retrieval system on three criteria: correctness, completeness, and usefulness. Retrieval failures feed directly into those criteria, meaning that a retrieval system that scores well on relevance benchmarks but fails on structural near-misses creates a false sense of production readiness.

RAG isn’t obsolete – but know what it can’t do. Rajamohan pushed back strongly on claims that RAG is obsolete. "This is a huge oversimplification," he said. "RAG is a very simple pipeline that almost anyone can produce with very little lift." The research does not argue against RAG as an architecture. It argues against assuming that a single-stage RAG pipeline with a fine-tuned embedding model is production-ready for precision-sensitive workloads.

The solution is real but not free. For teams that require higher precision, Rajamohan said the two-stage architecture is not a prohibitive implementation lift, but the verification step adds latency cost. "This is a mitigation problem," he said. "Not something we can really solve."
