OpenAI has introduced Trusted Contact to ChatGPT, which will allow users to nominate a friend whom the company can contact if they are in danger of harming themselves. More and more people are using chatbots as digital therapists, relying on them for their mental health needs. OpenAI had earlier told the BBC that more than one million of its 800 million weekly users express suicidal thoughts in their conversations.

Last year, OpenAI faced a wrongful death lawsuit that accused the company of driving a teenager to suicide. The lawsuit alleges that the teen talked to ChatGPT about four previous attempts to end his life and that the chatbot then helped him plan the actual suicide. The BBC's investigation, published in November 2025, found that in at least one instance, ChatGPT advised a user to kill himself. OpenAI told the news organization that it has since improved how its chatbot responds to people in crisis.

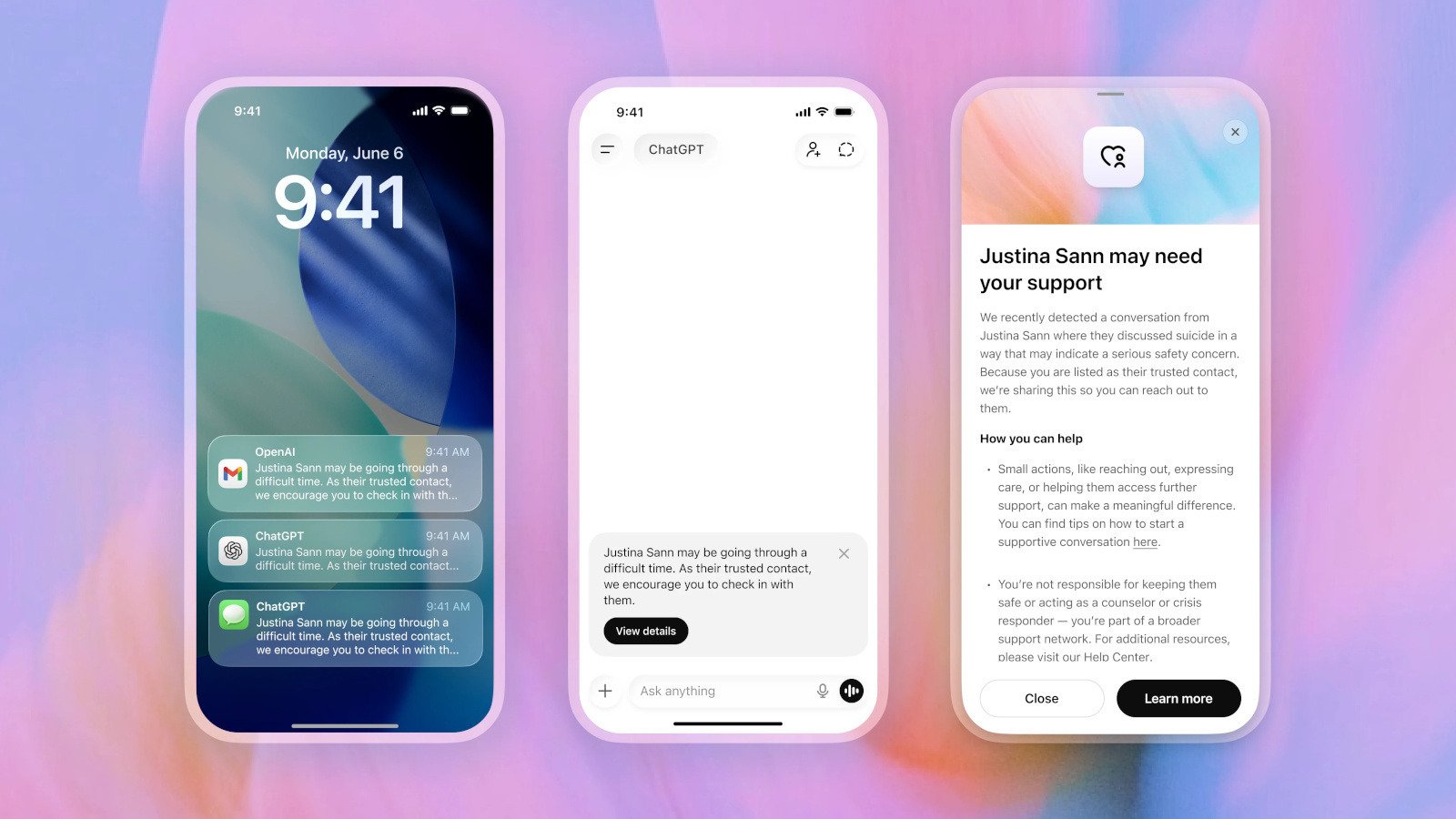

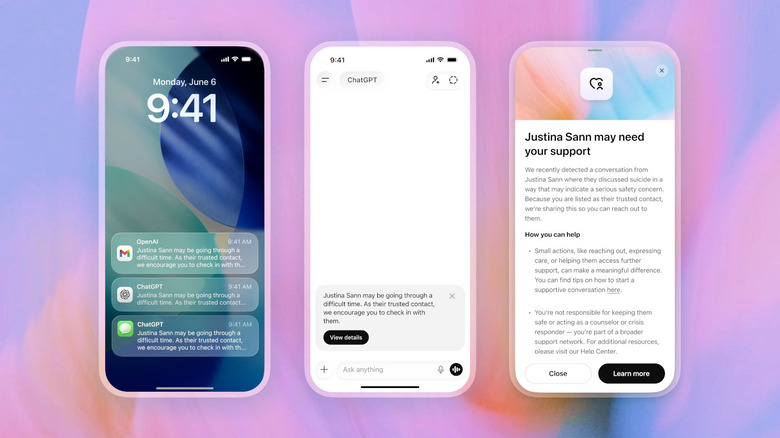

Trusted Contact is built into ChatGPT’s parental controls, giving adults aged 18 and over the option to add details of someone who can help them if they are on the verge of suicide. Users will be able to nominate an adult as their trusted contact in ChatGPT settings, and that person must accept the invitation within a week of receiving it. If the contact does not accept, the user can choose to add another contact instead. ChatGPT’s system will first warn the user that the company may notify their contact if it detects a serious possibility of self-harm. It will also encourage the user to reach out to their friend and suggest potential conversation starters.

This process is not completely automated. OpenAI says that “a small team of specially trained people” will review the situation, and if they determine there is a serious risk of self-harm, ChatGPT will send an email, a text message, or an in-app notification to the user’s contact.

“[The user] may be going through a difficult time,” the message will read. “As their trusted contact, we encourage you to check in with them.” From there, the contact can see more details about the alert, which tell them that OpenAI has detected a conversation in which the user discussed suicide. However, to protect the user's privacy, the company will not send them the transcript of the conversation. “While no system is perfect, and a notification to a trusted contact may not always reflect what a person is experiencing, every notification goes through trained human review before being sent. And we strive to review these safety notifications within an hour,” the company wrote in its announcement.

If you or someone you know is experiencing suicidal thoughts, do not hesitate to contact the National Suicide Prevention Lifeline at 1-800-273-8255. The line is open 24/7, and an online chat is available if you cannot use a phone.
