When I started at AWS in 2008, we ran the EC2 control plane on a tree of MySQL databases: a primary to handle writes, a secondary to take over from the primary, a handful of read replicas to scale reads, and a few extra replicas for latency-insensitive reporting stuff. Everything was tied together with MySQL’s statement-based replication. It worked pretty well day to day, but two major areas of pain have stuck with me ever since: operations were costly, and eventual consistency made things weird.

Since then, managed databases like Aurora MySQL have made relational database operations much easier. Which is great. But eventual consistency is still a feature of most database architectures that try to scale reads. Today, I want to talk about why eventual consistency is a pain, and why we’ve invested heavily in making all reads strongly consistent in Aurora DSQL.

Eventual consistency is a pain for customers

Consider the following piece of code running against an API exposed by a database-backed service:

    id = create_resource(...)
    get_resource_state(id, ...)

In a world of read replicas, the latter statement can do something a little baffling: reply ‘id does not exist’. The reason is simple: get_resource_state is a read-only call, probably routed to a read replica, and it is racing the write from create_resource. If replication wins, this code works as expected. If the client wins, it has to deal with the weird sensation of time moving backwards.
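To make the race concrete, here is a minimal sketch of it using a toy primary and a single lagging replica. The Primary and Replica classes, the replication_log field, and the resource name are hypothetical stand-ins for illustration, not part of any real database API.

```python
class Primary:
    """Toy primary: applies writes immediately and queues them for replication."""

    def __init__(self):
        self.rows = {}
        self.replication_log = []  # writes not yet shipped to the replica

    def write(self, key, value):
        self.rows[key] = value
        self.replication_log.append((key, value))


class Replica:
    """Toy read replica: only sees a write once replication delivers it."""

    def __init__(self):
        self.rows = {}

    def apply(self, log):
        for key, value in log:
            self.rows[key] = value
        log.clear()

    def read(self, key):
        if key not in self.rows:
            raise KeyError(f"{key} does not exist")
        return self.rows[key]


primary, replica = Primary(), Replica()

primary.write("res-1", "CREATING")      # create_resource: routed to the primary

try:
    replica.read("res-1")               # get_resource_state: routed to the replica
    client_won_race = False
except KeyError:
    client_won_race = True              # the read arrived before replication did

replica.apply(primary.replication_log)  # replication catches up
state_after_catch_up = replica.read("res-1")
```

In this sketch the client always wins the race because replication is only applied afterwards; in a real system the outcome depends on timing, which is exactly what makes the failure intermittent.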

Application programmers don’t really have a principled way to work around this, so they end up writing code like this:

    id = create_resource(...)
    while True:
        try:
            get_resource_state(id, ...)
            break
        except ResourceDoesNotExist:
            sleep(100)

Which fixes the problem. Kinda. Other times, especially if ResourceDoesNotExist can also be thrown when id has been deleted, it causes an infinite loop. It also creates more work for the client and the server, adds latency, and requires the programmer to choose a magic number for the sleep that balances between the two. Ugly.

But that’s not all. Marc Bowes pointed out that the problem is even more insidious:

    def wait_for_resource(id):
        while True:
            try:
                get_resource_state(id, ...)
                return
            except ResourceDoesNotExist:
                sleep(100)

    id = create_resource(...)
    wait_for_resource(id)
    get_resource_state(id)

This can still fail, because the second get_resource_state call may go to an entirely different read replica that has not heard the news yet [3].
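A small simulation shows how the wait can succeed and the very next read can still fail. It is only a sketch: the two Replica objects, the alternating router, and the max_attempts bound are assumptions made for illustration, and the sleep is omitted so the example runs instantly.

```python
import itertools


class ResourceDoesNotExist(Exception):
    pass


class Replica:
    """Toy read replica holding whatever rows replication has delivered so far."""

    def __init__(self):
        self.rows = {}

    def read(self, key):
        if key not in self.rows:
            raise ResourceDoesNotExist(key)
        return self.rows[key]


fast, slow = Replica(), Replica()
# Hypothetical load balancer: alternates reads between the two replicas.
router = itertools.cycle([slow, fast])


def get_resource_state(key):
    return next(router).read(key)


def wait_for_resource(key, max_attempts=10):
    for _ in range(max_attempts):
        try:
            get_resource_state(key)
            return True
        except ResourceDoesNotExist:
            pass  # a real client would sleep here
    return False


# The write has replicated to the fast replica only.
fast.rows["res-1"] = "ACTIVE"

waited_ok = wait_for_resource("res-1")  # 1st read hits slow (fails), 2nd hits fast (succeeds)
try:
    get_resource_state("res-1")         # 3rd read hits slow again
    follow_up_ok = True
except ResourceDoesNotExist:
    follow_up_ok = False
```

The wait returns successfully, yet the follow-up read lands on the replica that is still behind, so the client sees the resource appear and then vanish.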

Strong consistency avoids this whole problem [1], ensuring that the first code snippet works as expected.

Eventual consistency is a pain for application builders

People building services behind that API face exactly the same problems. To get the benefits of read replicas, application builders need to route as much read traffic as possible to those read replicas. But consider the following code:

    block_attachment_changes(id, ...)
    for attachment in get_attachments_to_thing(id):
        remove_attachment(id, attachment)
    assert_is_empty(get_attachments_to_thing(id))

This is a fairly common code pattern inside microservices: a little workflow that cleans something up. But in the wild world of eventual consistency, it has at least three potential bugs:

- The assert may trigger because the second get_attachments_to_thing hasn’t heard about all of the remove_attachment calls.
- remove_attachment may fail because it hasn’t heard of one of the attachments listed by get_attachments_to_thing.
- The first get_attachments_to_thing may return an incomplete list because it read stale data, leaving the cleanup incomplete.

And there are a few more. The application builder has to avoid these problems by making sure that every read used to trigger a later write is sent to the primary. This requires more routing logic (a simple “this API is read-only” is not enough), and it reduces the effectiveness of scaling by reducing the traffic that can be sent to the replicas.
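As a rough sketch of why a read-only flag is not enough for routing, consider the two policies below. The ReadRequest type, its fields, and both routing functions are hypothetical names introduced only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReadRequest:
    api_name: str
    read_only_api: bool      # what a naive router looks at
    feeds_later_write: bool  # what the application actually has to know


def naive_route(req: ReadRequest) -> str:
    """Route purely on whether the API call itself is read-only."""
    return "replica" if req.read_only_api else "primary"


def safe_route(req: ReadRequest) -> str:
    """Send any read whose result drives a later write to the primary."""
    if req.feeds_later_write or not req.read_only_api:
        return "primary"
    return "replica"


# The same read-only API, called from two different contexts.
cleanup_list = ReadRequest("get_attachments_to_thing", read_only_api=True, feeds_later_write=True)
dashboard = ReadRequest("get_attachments_to_thing", read_only_api=True, feeds_later_write=False)
```

The same API call needs different routing depending on what the caller does with the result, and every read that moves to the primary is traffic the replicas no longer absorb.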

Eventual consistency makes scaling harder

Which brings us to our third point: read-modify-write is the canonical transactional workload. That applies to explicit transactions (anything that does an UPDATE, or a SELECT followed by a write in the same transaction), but also to things that do implicit transactions (like the example above). Eventual consistency makes read replicas less effective, because the reads used for read-modify-write can’t, in general, be served from a replica without weird effects.

Consider the following code:

    UPDATE dogs SET goodness = goodness + 1 WHERE name = 'sophie'

If the read for that read-modify-write is served from a read replica, then the value of goodness may not change in the way you expect. Now, the database could internally do something like this:

    SELECT goodness AS g, version AS v FROM dogs WHERE name = 'sophie'; -- To read replica
    UPDATE dogs SET goodness = g + 1, version = v + 1 WHERE name = 'sophie' AND version = v; -- To primary

and then check that it actually updated a row [2]. But this adds a lot of work.
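The version-check approach can be sketched end to end with Python’s built-in sqlite3 module. This is an illustration under loud assumptions: a single in-memory SQLite database stands in for both the replica and the primary, and the helper names (read_snapshot, conditional_increment, increment_goodness) and the retry bound are made up for the example.

```python
import sqlite3

# Assumption: one in-memory SQLite database plays both the replica and the primary.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE dogs (name TEXT PRIMARY KEY, goodness INTEGER, version INTEGER)")
db.execute("INSERT INTO dogs VALUES ('sophie', 10, 1)")
db.commit()


def read_snapshot(conn, name):
    """Stands in for the read sent to a replica: returns (goodness, version)."""
    return conn.execute(
        "SELECT goodness, version FROM dogs WHERE name = ?", (name,)
    ).fetchone()


def conditional_increment(conn, name, g, v):
    """Stands in for the write sent to the primary; True if a row was updated."""
    cur = conn.execute(
        "UPDATE dogs SET goodness = ?, version = ? WHERE name = ? AND version = ?",
        (g + 1, v + 1, name, v),
    )
    conn.commit()
    return cur.rowcount == 1


def increment_goodness(conn, name, max_attempts=5):
    """Retry the read and conditional write until the version check passes."""
    for _ in range(max_attempts):
        g, v = read_snapshot(conn, name)
        if conditional_increment(conn, name, g, v):
            return True
    return False


# A stale read: another writer updates the row between our read and our write.
stale_g, stale_v = read_snapshot(db, "sophie")
increment_goodness(db, "sophie")  # the concurrent writer wins
stale_write_applied = conditional_increment(db, "sophie", stale_g, stale_v)

final_goodness, final_version = read_snapshot(db, "sophie")
```

The stale write matches no rows because the version has moved on, so the caller has to re-read and retry. That extra round trip and retry loop is the added work.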

The nice thing about making scale-out reads strongly consistent is that the query processor can read from any replica, even in a read-write transaction. Choosing a replica also doesn’t require knowing up front whether the transaction is read-write or read-only.

How Aurora DSQL does consistent reads with read scaling

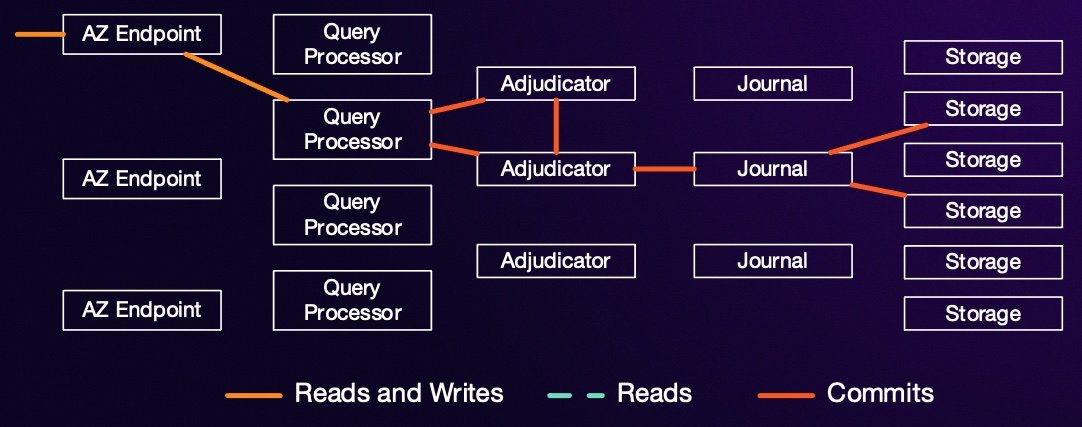

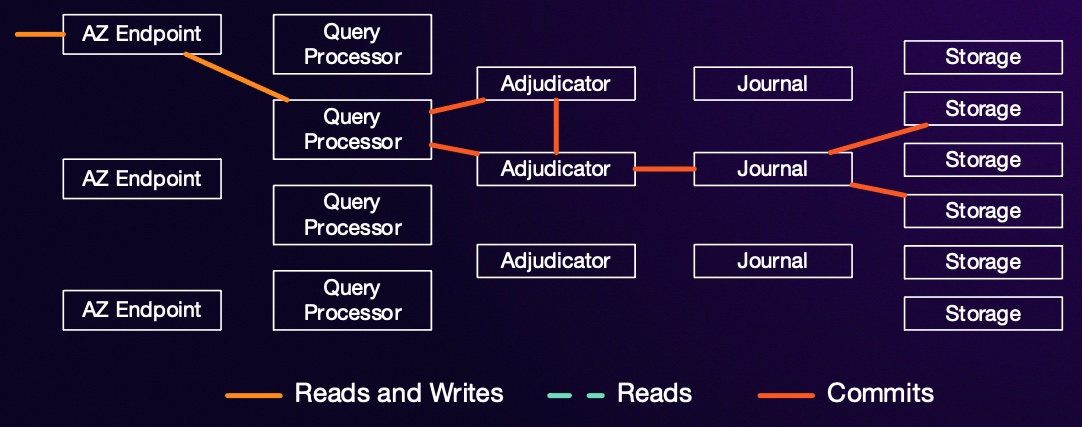

As I said above, all reads in Aurora DSQL are strongly consistent. DSQL can also scale out reads by adding additional replicas of any hot shards. So how does it ensure that all reads are strongly consistent? Let’s remind ourselves of the basics of the DSQL architecture.

Each storage replica receives updates from one or more journals. The writes on each journal are strictly monotonic, so once a storage node has seen an update for time $\tau$ from a journal, it knows that it has seen all of that journal’s updates for times $t \leq \tau$. Once it has seen $t \geq \tau$ from all the journals to which it is subscribed, it knows that it can return data for time $\tau$ without missing any updates. When a query processor starts a transaction, it picks a timestamp $\tau_{start}$, and every time it reads from a replica it says to the replica “give me the data as of $\tau_{start}$”. If the replica has seen timestamps at or beyond $\tau_{start}$ from all of its journals, it is good to go. If not, it blocks the read until the write streams catch up.
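The rule described above can be sketched in a few lines. This is a toy model and not DSQL’s implementation: the StorageReplica class, the multi-version layout, the timestamp-only entries (key=None), and raising NotCaughtUp instead of blocking are all assumptions made to keep the example small and runnable.

```python
import bisect


class NotCaughtUp(Exception):
    """Raised where a real replica would block until the journals catch up."""


class StorageReplica:
    def __init__(self, journal_ids):
        # Highest timestamp seen so far from each subscribed journal.
        self.seen = {j: 0 for j in journal_ids}
        # key -> (sorted timestamps, values at those timestamps)
        self.versions = {}

    def apply(self, journal_id, ts, key=None, value=None):
        """Apply one journal entry; entries with key=None only advance time."""
        assert ts > self.seen[journal_id], "journal timestamps must increase"
        self.seen[journal_id] = ts
        if key is not None:
            stamps, values = self.versions.setdefault(key, ([], []))
            i = bisect.bisect_right(stamps, ts)
            stamps.insert(i, ts)
            values.insert(i, value)

    def read(self, key, tau_start):
        # Safe only once every journal has reported a timestamp >= tau_start.
        if min(self.seen.values()) < tau_start:
            raise NotCaughtUp(tau_start)
        stamps, values = self.versions.get(key, ([], []))
        i = bisect.bisect_right(stamps, tau_start)
        return values[i - 1] if i else None  # newest version at or before tau_start


replica = StorageReplica(["journal-a", "journal-b"])
replica.apply("journal-a", 5, "sophie", "good")
replica.apply("journal-a", 12, "sophie", "very good")

try:
    replica.read("sophie", tau_start=10)  # journal-b has not reached 10 yet
    blocked = False
except NotCaughtUp:
    blocked = True

replica.apply("journal-b", 11)            # journal-b moves past tau_start
value_at_10 = replica.read("sophie", tau_start=10)
```

The read at $\tau_{start} = 10$ is refused while any journal is behind, and once every journal has passed 10 it returns the version written at time 5, ignoring the later write at time 12.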

I explain in detail here how $\tau_{start}$ is chosen:

Conclusion

Strong consistency sounds like a complex topic for distributed systems nerds, but it is a real thing that applications built on traditional database replication architectures have to start dealing with at modest scale, or even at very small scale if they are trying to offer high availability. DSQL goes to some internal lengths to make all reads consistent, with the aim of saving application builders and end users from having to deal with this complexity.

I don’t mean to say that eventual consistency is always bad. Latency and connectivity trade-offs do exist (although the choose-two framing of CAP is bunk), and eventual consistency has its place. However, that place is probably not in your services or API.

Footnotes

1. You might point out that this particular problem can be fixed with a weaker set of guarantees, such as the read-your-writes behavior provided by client stickiness. However, this falls apart pretty quickly with more complex data models, and in cases like IaC where ‘your writes’ is less well defined.

2. Yes, I know there are other ways to do this.

3. If we want to get technical, it’s because the typical database read replica pattern doesn’t offer monotonic reads, where the set of writes a reader sees only grows over time. Instead, writes at the tip can appear to come and go arbitrarily as requests are routed to different replicas. See Doug Terry’s Replicated Data Consistency Explained Through Baseball for an easy introduction to these terms.
