Trying out Gemini 3 Pro with audio transcription and a new pelican benchmark

18 November 2025

Google released Gemini 3 Pro today. Here are announcements from Sundar Pichai and from Demis Hassabis and Koray Kavukcuoglu, a developer blog announcement from Logan Kilpatrick, the Gemini 3 Pro model card, and a collection of 11 more articles. This is a big release!

I've had preview access to this model through AI Studio for a few days. The best way to describe it is Gemini 2.5 upgraded to match the key rival models.

Gemini 3 has the same underlying characteristics as Gemini 2.5. The knowledge cutoff is the same (January 2025). It accepts up to 1 million input tokens, can output up to 64,000 tokens, and supports multimodal input across text, images, audio, and video.

Benchmarks

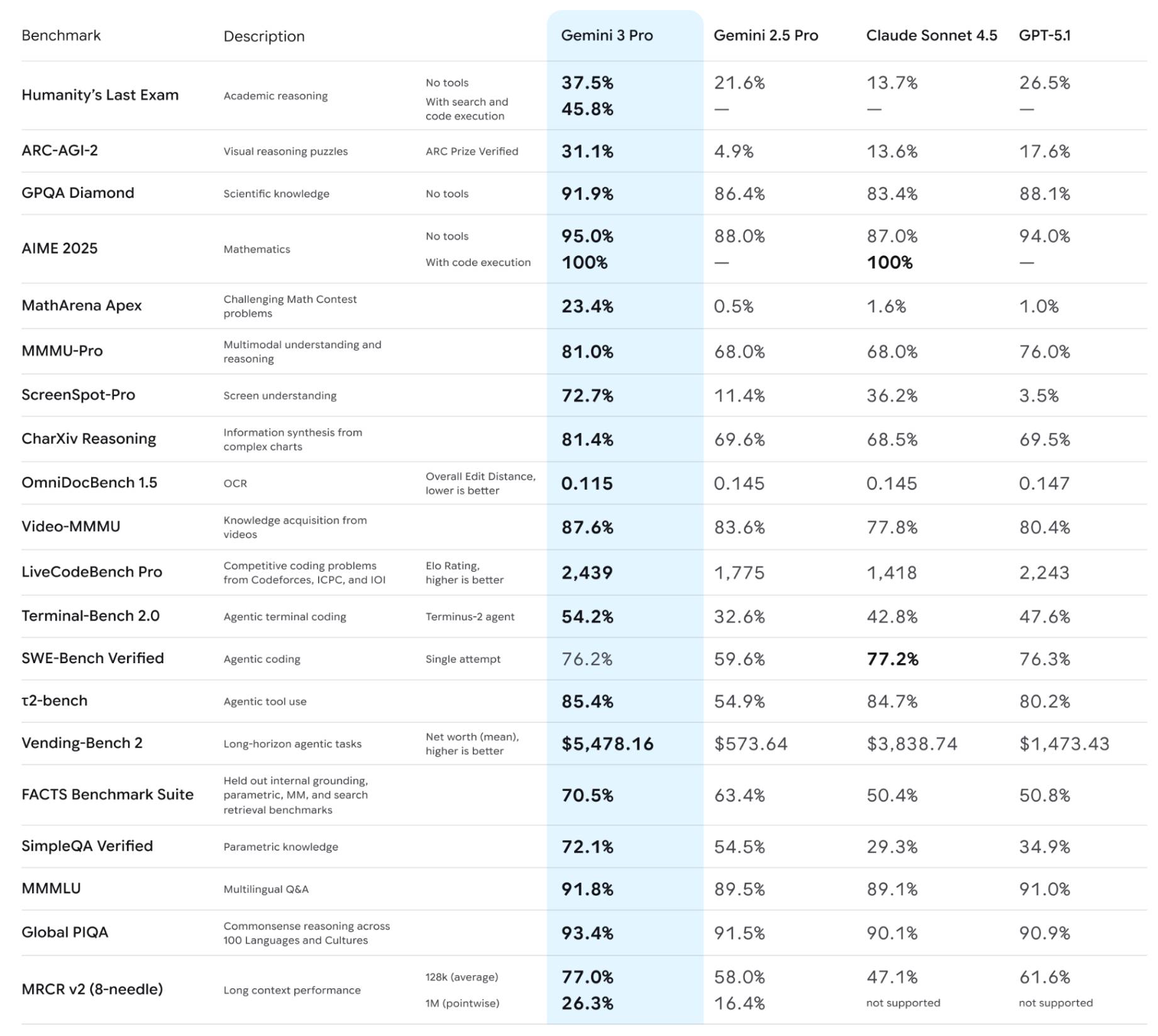

Google’s self-reported numbers (in the model card) show it scoring slightly higher than Claude Sonnet 4.5 and GPT-5.1 on most of the standard benchmarks. As always I'm waiting for independent confirmation, but I have no reason to believe those numbers are inaccurate.

Pricing

In terms of pricing it's a little more expensive than Gemini 2.5 but still cheaper than Claude Sonnet 4.5. Here's how it fits in with those other flagship models:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| GPT-5.1 | $1.25 | $10.00 |
| Gemini 2.5 Pro | ≤ 200k tokens: $1.25 | ≤ 200k tokens: $10.00 |
| Gemini 3 Pro | ≤ 200k tokens: $2.00 | ≤ 200k tokens: $12.00 |
| Claude Sonnet 4.5 | ≤ 200k tokens: $3.00 | ≤ 200k tokens: $15.00 |
| Claude Opus 4.1 | $15.00 | $75.00 |

Trying it out against a complex image

That screenshot of the benchmarks above looked like a good test for Gemini 3's multimodal support. I fed it that image URL and asked it to generate alt text for the image:

```bash
llm -m gemini-3-pro-preview -a https://static.simonwillison.net/static/2025/gemini-3-benchmarks.jpg 'Alt text for this image, include all figures and make them comprehensible to a screen reader user'
```

This is what I got back:

A comprehensive table comparing four AI models across various performance benchmarks. The columns listed are: benchmark name, description, Gemini 3 Pro (highlighted in blue), Gemini 2.5 Pro, Claude Sonnet 4.5, and GPT-5.1.

Humanity's Last Exam (Academic Reasoning)

- No tools: Gemini 3 Pro 37.5%, Gemini 2.5 Pro 21.6%, Claude Sonnet 4.5 13.7%, GPT-5.1 26.5%.

- With search and code execution: Gemini 3 Pro 45.8% (others have no data).

ARC-AGI-2 (Visual Logic Puzzles; ARC Prize Verified)

- Gemini 3 Pro 31.1%, Gemini 2.5 Pro 4.9%, Claude Sonnet 4.5 13.6%, GPT-5.1 17.6%.

GPQA Diamond (Scientific Knowledge; No Tools)

- Gemini 3 Pro 91.9%, Gemini 2.5 Pro 86.4%, Claude Sonnet 4.5 83.4%, GPT-5.1 88.1%.

AIME 2025 (Mathematics)

- No tools: Gemini 3 Pro 95.0%, Gemini 2.5 Pro 88.0%, Claude Sonnet 4.5 87.0%, GPT-5.1 94.0%.

- With code execution: Gemini 3 Pro 100%, Claude Sonnet 4.5 100%.

MathArena Apex (Challenging Math Competition Problems)

- Gemini 3 Pro 23.4%, Gemini 2.5 Pro 0.5%, Claude Sonnet 4.5 1.6%, GPT-5.1 1.0%.

MMMU-Pro (Multimodal Understanding and Reasoning)

- Gemini 3 Pro 81.0%, Gemini 2.5 Pro 68.0%, Claude Sonnet 4.5 68.0%, GPT-5.1 76.0%.

ScreenSpot-Pro (Screen Understanding)

- Gemini 3 Pro 72.7%, Gemini 2.5 Pro 11.4%, Claude Sonnet 4.5 36.2%, GPT-5.1 3.5%.

CharXiv Reasoning (Information Synthesis from Complex Charts)

- Gemini 3 Pro 81.4%, Gemini 2.5 Pro 69.6%, Claude Sonnet 4.5 68.5%, GPT-5.1 69.5%.

OmniDocBench 1.5 (OCR; Overall Edit Distance, lower is better)

- Gemini 3 Pro 0.115, Gemini 2.5 Pro 0.145, Claude Sonnet 4.5 0.145, GPT-5.1 0.147.

Video-MMMU (Knowledge Acquisition from Videos)

- Gemini 3 Pro 87.6%, Gemini 2.5 Pro 83.6%, Claude Sonnet 4.5 77.8%, GPT-5.1 80.4%.

LiveCodeBench Pro (competitive coding problems; Elo rating, higher is better)

- Gemini 3 Pro 2,439; Gemini 2.5 Pro 1,775; Claude Sonnet 4.5 1,418; GPT-5.1 2,243.

Terminal-Bench 2.0 (Agentic Terminal Coding; Terminus-2 Agent)

- Gemini 3 Pro 54.2%, Gemini 2.5 Pro 32.6%, Claude Sonnet 4.5 42.8%, GPT-5.1 47.6%.

SWE-Bench Verified (Agentic Coding; Single Attempt)

- Gemini 3 Pro 76.2%, Gemini 2.5 Pro 59.6%, Claude Sonnet 4.5 77.2%, GPT-5.1 76.3%.

τ2-bench (Agentic Tool Use)

- Gemini 3 Pro 85.4%, Gemini 2.5 Pro 54.9%, Claude Sonnet 4.5 84.7%, GPT-5.1 80.2%.

Vending-Bench 2 (long-horizon agentic work; net worth (average), higher is better)

- Gemini 3 Pro $5,478.16; Gemini 2.5 Pro $573.64; Claude Sonnet 4.5 $3,838.74; GPT-5.1 $1,473.43.

FACTS Benchmark Suite (Held-out internal grounding, parametric, MM, and search retrieval benchmarks)

- Gemini 3 Pro 70.5%, Gemini 2.5 Pro 63.4%, Claude Sonnet 4.5 50.4%, GPT-5.1 50.8%.

SimpleQA Verified (Parametric Knowledge)

- Gemini 3 Pro 72.1%, Gemini 2.5 Pro 54.5%, Claude Sonnet 4.5 29.3%, GPT-5.1 34.9%.

MMMLU (Multilingual Question and Answer)

- Gemini 3 Pro 91.8%, Gemini 2.5 Pro 89.5%, Claude Sonnet 4.5 89.1%, GPT-5.1 91.0%.

Global PIQA (Common Sense Reasoning across 100 Languages and Cultures)

- Gemini 3 Pro 93.4%, Gemini 2.5 Pro 91.5%, Claude Sonnet 4.5 90.1%, GPT-5.1 90.9%.

MRCR v2 (8-needle) (Long Context Performance)

- 128k (average): Gemini 3 Pro 77.0%, Gemini 2.5 Pro 58.0%, Claude Sonnet 4.5 47.1%, GPT-5.1 61.6%.

- 1M (pointwise): Gemini 3 Pro 26.3%, Gemini 2.5 Pro 16.4%, Claude Sonnet 4.5 (not supported), GPT-5.1 (not supported).

I haven’t checked every line of it, but a loose spot-check seems accurate to me.

That prompt took 1,105 input and 3,901 output tokens at a cost of 5.6824 cents.

I ran this follow-up prompt:

```bash
llm -c 'Convert to JSON'
```

You can see the full output here, which starts like this:

```json
{
  "metadata": {
    "columns": [
      "Benchmark",
      "Description",
      "Gemini 3 Pro",
      "Gemini 2.5 Pro",
      "Claude Sonnet 4.5",
      "GPT-5.1"
    ]
  },
  "benchmarks": [
    {
      "name": "Humanity's Last Exam",
      "description": "Academic reasoning",
      "sub_results": [
        {
          "condition": "No tools",
          "gemini_3_pro": "37.5%",
          "gemini_2_5_pro": "21.6%",
          "claude_sonnet_4_5": "13.7%",
          "gpt_5_1": "26.5%"
        },
        {
          "condition": "With search and code execution",
          "gemini_3_pro": "45.8%",
          "gemini_2_5_pro": null,
          "claude_sonnet_4_5": null,
          "gpt_5_1": null
        }
      ]
    },
```
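Once the table is JSON it becomes easy to query. Here's a minimal sketch that picks the top-scoring model for each condition, assuming the field names in the excerpt above (`benchmarks`, `sub_results`, `condition`, and per-model keys such as `gemini_3_pro`) hold throughout the full output, which this doesn't verify. `leaders` is a hypothetical helper, and it only makes sense for percentage scores where higher is better; it would pick the wrong winner for OmniDocBench's edit distance, where lower is better.

```python
import json

# A one-benchmark excerpt shaped like the JSON output above.
EXCERPT = """
{"benchmarks": [{"name": "Humanity's Last Exam",
  "sub_results": [
    {"condition": "No tools", "gemini_3_pro": "37.5%", "gemini_2_5_pro": "21.6%",
     "claude_sonnet_4_5": "13.7%", "gpt_5_1": "26.5%"},
    {"condition": "With search and code execution", "gemini_3_pro": "45.8%",
     "gemini_2_5_pro": null, "claude_sonnet_4_5": null, "gpt_5_1": null}]}]}
"""

MODEL_KEYS = ["gemini_3_pro", "gemini_2_5_pro", "claude_sonnet_4_5", "gpt_5_1"]


def leaders(data: dict) -> list[tuple[str, str, str]]:
    """Return (benchmark, condition, best model key) for each sub-result.

    Assumes every score is a percentage string like "37.5%" where higher
    is better; null scores (no data for that model) are skipped.
    """
    rows = []
    for bench in data["benchmarks"]:
        for sub in bench["sub_results"]:
            scores = {
                key: float(sub[key].rstrip("%"))
                for key in MODEL_KEYS
                if sub.get(key) is not None
            }
            if not scores:
                continue  # nobody reported a score for this condition
            best = max(scores, key=scores.get)
            rows.append((bench["name"], sub["condition"], best))
    return rows


for row in leaders(json.loads(EXCERPT)):
    print(row)
```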

Analyzing a city council meeting

To try it against an audio file I extracted the 3 hour 33 minute audio track from the video Half Moon Bay City Council Meeting – November 4, 2025. I used yt-dlp to get that audio:

```bash
yt-dlp -x --audio-format m4a 'https://www.youtube.com/watch?v=qgJ7x7R6gy0'
```

This gave me a 74MB M4A file, which I ran through Gemini 3 Pro like this:

```bash
llm -m gemini-3-pro-preview -a /tmp/HMBCC\ 11⧸4⧸25\ -\ Half\ Moon\ Bay\ City\ Council\ Meeting\ -\ November\ 4,\ 2025\ \[qgJ7x7R6gy0\].m4a 'Output a Markdown transcript of this meeting. Include speaker names and timestamps. Start with an outline of the key meeting sections, each with a title and summary and timestamp and list of participating names. Note in bold if anyone raised their voices, interrupted each other or had disagreements. Then follow with the full transcript.'
```

That failed with an “Internal error occurred” message, so I used ffmpeg to shrink the file down to a more manageable 38MB:

```bash
ffmpeg -i "/private/tmp/HMB.m4a" -ac 1 -ar 22050 -c:a aac -b:a 24k "/private/tmp/HMB_compressed.m4a"
```
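The 38MB result is about what those settings predict: `-ac 1` downmixes to mono, `-ar 22050` resamples to 22.05kHz, and `-b:a 24k` targets 24 kilobits per second, so the file size is roughly bitrate times duration. A quick sanity check of that arithmetic, assuming the AAC encoder averages its 24 kbps target and ignoring container overhead (`estimated_size_bytes` is a hypothetical helper):

```python
def estimated_size_bytes(bitrate_bps: int, duration_seconds: int) -> int:
    """Approximate size of a constant-bitrate stream: bits/s * s / 8 bits per byte."""
    return bitrate_bps * duration_seconds // 8


# 3 hours 33 minutes of audio, at the 24 kbps set by -b:a 24k
duration = 3 * 3600 + 33 * 60  # 12,780 seconds
size = estimated_size_bytes(24_000, duration)
print(f"{size / 1_000_000:.1f} MB")  # 38.3 MB
```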

Then I ran it again like this (for some reason I had to use --attachment-type this time):

```bash
llm -m gemini-3-pro-preview --attachment-type /tmp/HMB_compressed.m4a 'audio/aac' 'Output a Markdown transcript of this meeting. Include speaker names and timestamps. Start with an outline of the key meeting sections, each with a title and summary and timestamp and list of participating names. Note in bold if anyone raised their voices, interrupted each other or had disagreements. Then follow with the full transcript.'
```

This time it worked! The full output is here, but it starts like this:

Here is the transcript of the Half Moon Bay City Council meeting.

Meeting Outline

1. Call to order, updates and public forum

- Summary: Mayor Brownstone called the meeting to order. City Manager Chidester reported no reportable action from the closed session. Announcements are made regarding food insecurity volunteers and Diwali celebrations. During the public forum, Council Member Penrose (speaking as a citizen) warns against autocracy. Citizens talk about lease agreements, city maintenance, local concerts, and homeless outreach statistics.

- Timestamp: 00:00:00–00:13:25

- Participants: Mayor Brownstone, Matthew Chidester, Irma Acosta, Deborah Penrose, Jennifer Moore, Sandy Vella, Joaquin Jimenez, Anita Reis.

2. Consent Calendar

- Summary: The council approves the minutes of previous meetings and a resolution authorizing a licensing agreement for the Seahorse Ranch. Council Member Johnson corrected a pull request regarding the abstention in the minutes.

- Timestamp: 00:13:25–00:15:15

- Participants: Mayor Brownstone, Councilmember Johnson, Councilmember Penrose, Vice Mayor Ruddick, Councilmember Nagengast.

3. Ordinance Introduction: Commercial Vitality (Item 9A)

- Summary: Staff introduce a new ordinance to address neglected and vacant commercial storefronts, establishing maintenance and performance standards. Council members discuss enforcement mechanisms, window cleaning standards, and the need for objective guidance documents to avoid subjective enforcement.

- Timestamp: 00:15:15–00:30:45

- Participants: Karen Decker, Council Member Johnson, Council Member Nagengast, Vice Mayor Ruddick, Council Member Penrose.

4. Ordinance Introduction: Building Standards and Electrification (Item 9B)

- Summary: Staff introduces updates to the 2025 building code. One major change is the repeal of the city’s all-electric building requirement due to a 9th Circuit Court decision (California Restaurant Association v. City of Berkeley). Public speaker Mike Ferreira expressed deep frustration and disagreement with the “unelected state agencies” forcing the city to change its ordinances.

- Timestamp: 00:30:45–00:45:00

- Participants: Ben Corrales, Keith Weiner, Joaquin Jimenez, Jeremy Levin, Mike Ferreira, Councilmember Penrose, Vice Mayor Ruddick.

5. Housing Element Update and Adoption (Item 9C)

- Summary: Staff presents the 5th draft of the housing element, noting the state HCD's requirements to modify ADU allocations and to place a measure on the ballot regarding the “Measure D” growth limit. There is significant disagreement from Council Members Ruddick and Penrose regarding the state’s requirement to hold a ballot measure. Public speakers debate the enforceability of Measure D. Mike Ferreira interrupted the proceedings to express his strong distaste for HCD interference in local law. The council votes to adopt the substance but strikes the language committing to a ballot measure.

- Timestamp: 00:45:00–01:05:00

- Participants: Leslie (Staff), Joaquin Jimenez, Jeremy Levin, Mike Ferreira, Council Member Penrose, Vice Mayor Ruddick, Council Member Johnson.

Transcript

Mayor Brownstone [00:00:00]

Good evening everyone and welcome to the November 4 Half Moon Bay City Council meeting. As a reminder, we have Spanish interpretation services available in person and on Zoom.

Victor Hernandez (interpreter) [00:00:35]

Thank you, Mr. Mayor, City Council, all city employees, members of the public. [Spanish instructions provided regarding accessing the interpretation channel on Zoom and in the room.] Thank you so much.

Those first two lines of the transcript already illustrate something interesting: Gemini 3 Pro chose not to include the exact text of the Spanish instructions, instead summarizing them as “[Spanish instructions provided regarding accessing the interpretation channel on Zoom and in the room.]”.

I haven’t spot-checked the entire 3 hour 33 minute meeting, but I have confirmed that the timestamps do not line up. The transcript closes like this:

Mayor Brownstone [01:04:00]

Meeting adjourned. Have a good evening.

This actually happens at 3h31m5s, and the mayor says:

OK. Well, thank you to everyone, members of the public, for participating. Thanks to staff. Thanks to fellow council members. This meeting is now adjourned. Have a good evening.

I’m frustrated by the timestamps, because the mismatch makes it very hard to jump to the right point and confirm that the summarized transcript is an accurate representation of what was said.
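To put a number on that mismatch, here's a small sketch that converts the two timestamps quoted above into seconds: the transcript's `01:04:00` for the adjournment and the real `3h31m5s` position in the recording. Both helper names are hypothetical, written just for this comparison.

```python
import re


def hms_to_seconds(stamp: str) -> int:
    """Convert an "HH:MM:SS" transcript timestamp to seconds."""
    hours, minutes, seconds = (int(part) for part in stamp.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def compact_to_seconds(stamp: str) -> int:
    """Convert a compact offset such as "3h31m5s" to seconds."""
    match = re.fullmatch(r"(?:(\d+)h)?(?:(\d+)m)?(?:(\d+)s)?", stamp)
    if match is None:
        raise ValueError(f"unrecognised offset: {stamp!r}")
    hours, minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return hours * 3600 + minutes * 60 + seconds


claimed = hms_to_seconds("01:04:00")    # where the transcript puts the adjournment
actual = compact_to_seconds("3h31m5s")  # where it happens in the recording
drift = actual - claimed
print(f"off by {drift} seconds ({drift / 60:.0f} minutes)")
```

For this meeting that works out to a gap of roughly two and a half hours at the very end of the transcript.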

This took 320,087 input tokens and 7,870 output tokens, for a total cost of $1.42.
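That $1.42 only adds up once you account for long-context pricing: this prompt is well over 200k tokens, so it's billed above the ≤ 200k rates in the pricing table. A minimal sketch of the arithmetic, assuming Gemini 3 Pro's published rates for prompts over 200k tokens are $4.00 input and $18.00 output per million tokens (`gemini_3_pro_cost` is a hypothetical helper):

```python
# Per-million-token rates for Gemini 3 Pro. The <= 200k figures match the
# pricing table; the > 200k figures are an assumption (Google's published
# long-context rates).
RATES = {
    "short": {"input": 2.00, "output": 12.00},  # prompt <= 200k tokens
    "long": {"input": 4.00, "output": 18.00},   # prompt > 200k tokens
}


def gemini_3_pro_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate cost in dollars; the prompt size selects the pricing tier."""
    tier = RATES["long" if input_tokens > 200_000 else "short"]
    return (input_tokens * tier["input"] + output_tokens * tier["output"]) / 1_000_000


print(f"${gemini_3_pro_cost(320_087, 7_870):.2f}")  # $1.42
```

At the ≤ 200k rates the same token counts would come to about $0.73, so the tier matters a lot for long audio.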

And a new pelican benchmark

Gemini 3 Pro has a new concept of a “thinking level” which can be set to low or high (high is the default). I tried my classic “Generate an SVG of a pelican riding a bicycle” prompt at both levels.

Here’s low. Gemini decided to add a small hat, complete with an SVG comment explaining it:

And here’s high. This is genuinely an excellent pelican, and the bicycle frame is at least the right size:

To be honest, though, my pelican benchmark is starting to feel a little too basic, so I decided to upgrade it. Here is v2 of the benchmark, which I plan to use going forward:

Generate an SVG of a California brown pelican riding a bicycle. The bicycle must have spokes and a correctly shaped bicycle frame. The pelican must have its characteristic large pouch, and there should be a clear indication of feathers. The pelican must be clearly pedaling the bicycle. The image should show the full breeding plumage of the California brown pelican.

For reference, here’s a photo I recently took of a California brown pelican (sadly without a bicycle):

Here’s Gemini 3 Pro’s attempt at high thinking level for that new prompt:

And for good measure, here’s that same prompt against GPT-5.1, which produced this silly little guy:

And Claude Sonnet 4.5, which didn’t do quite as well: