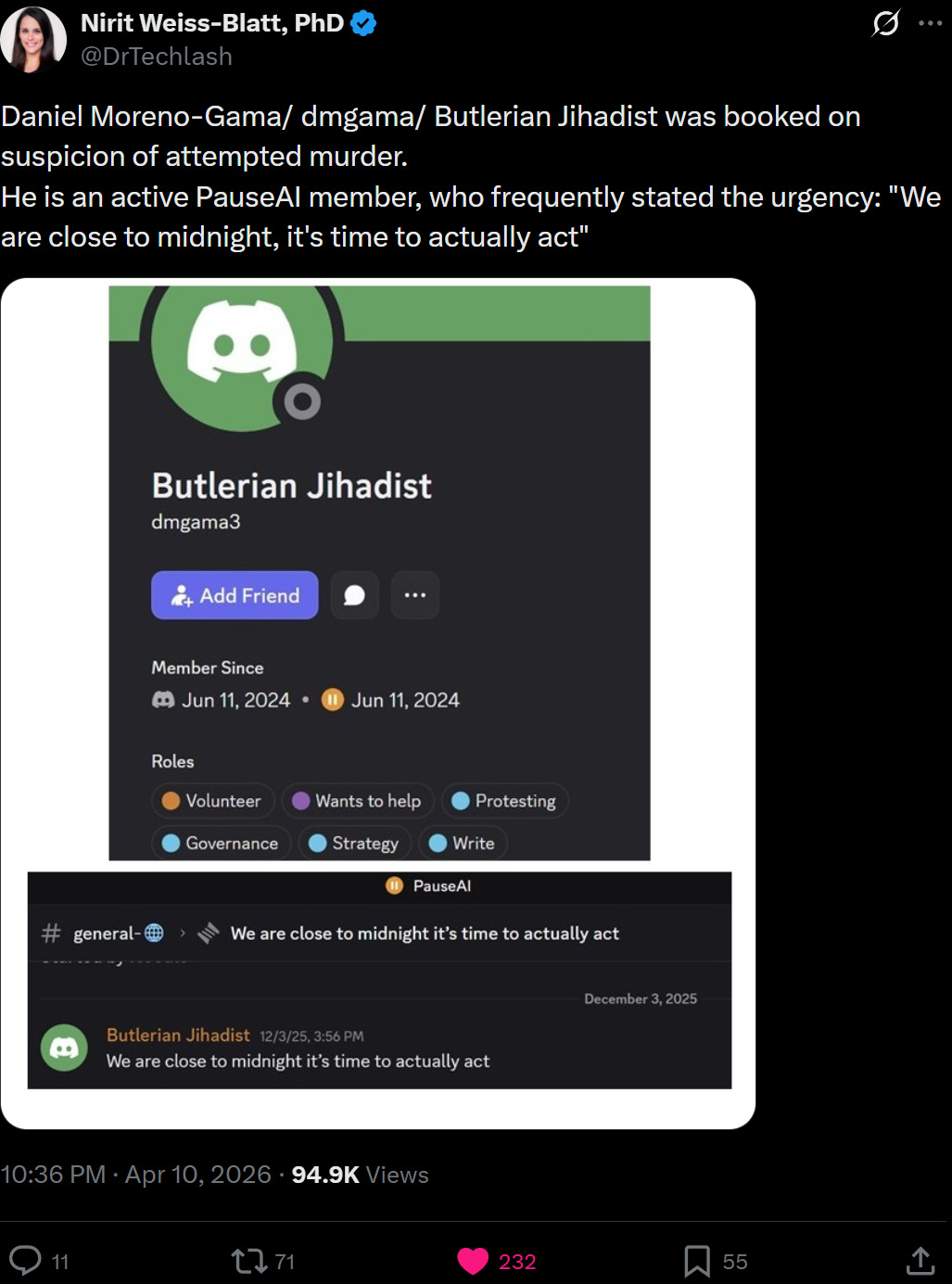

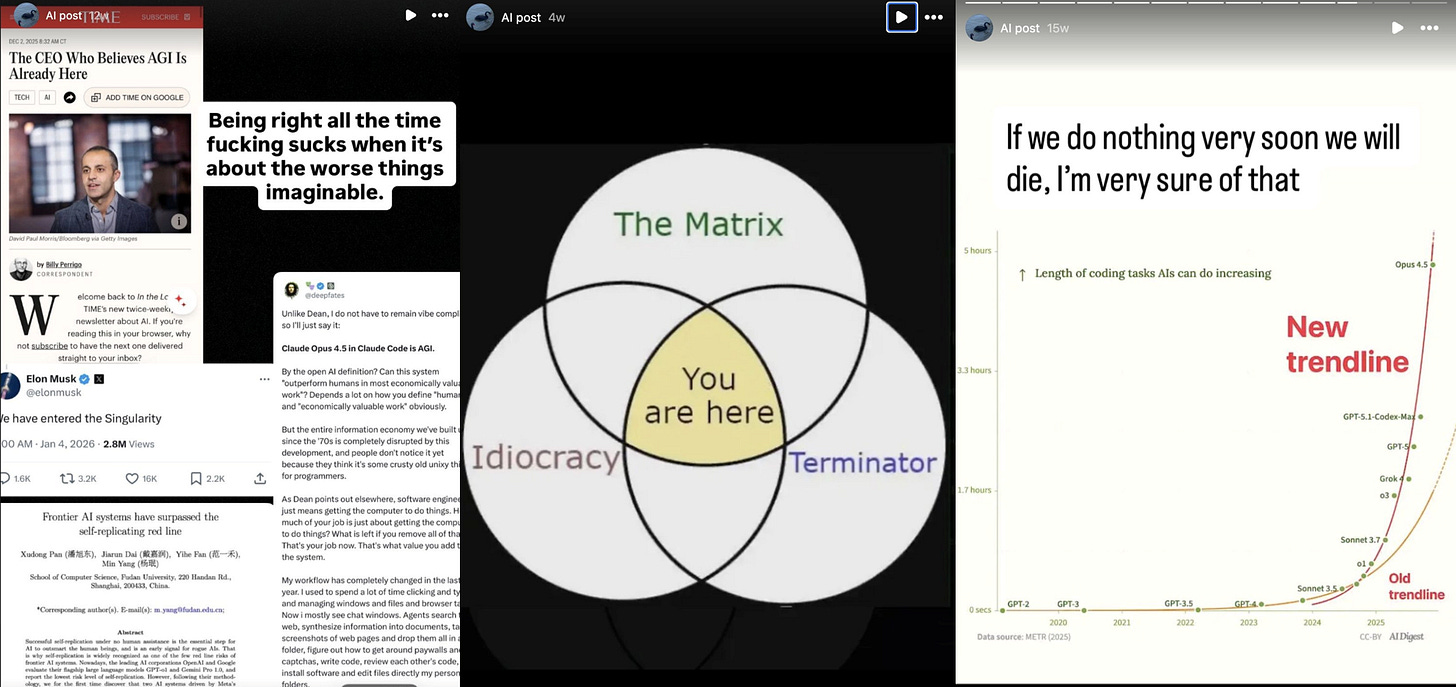

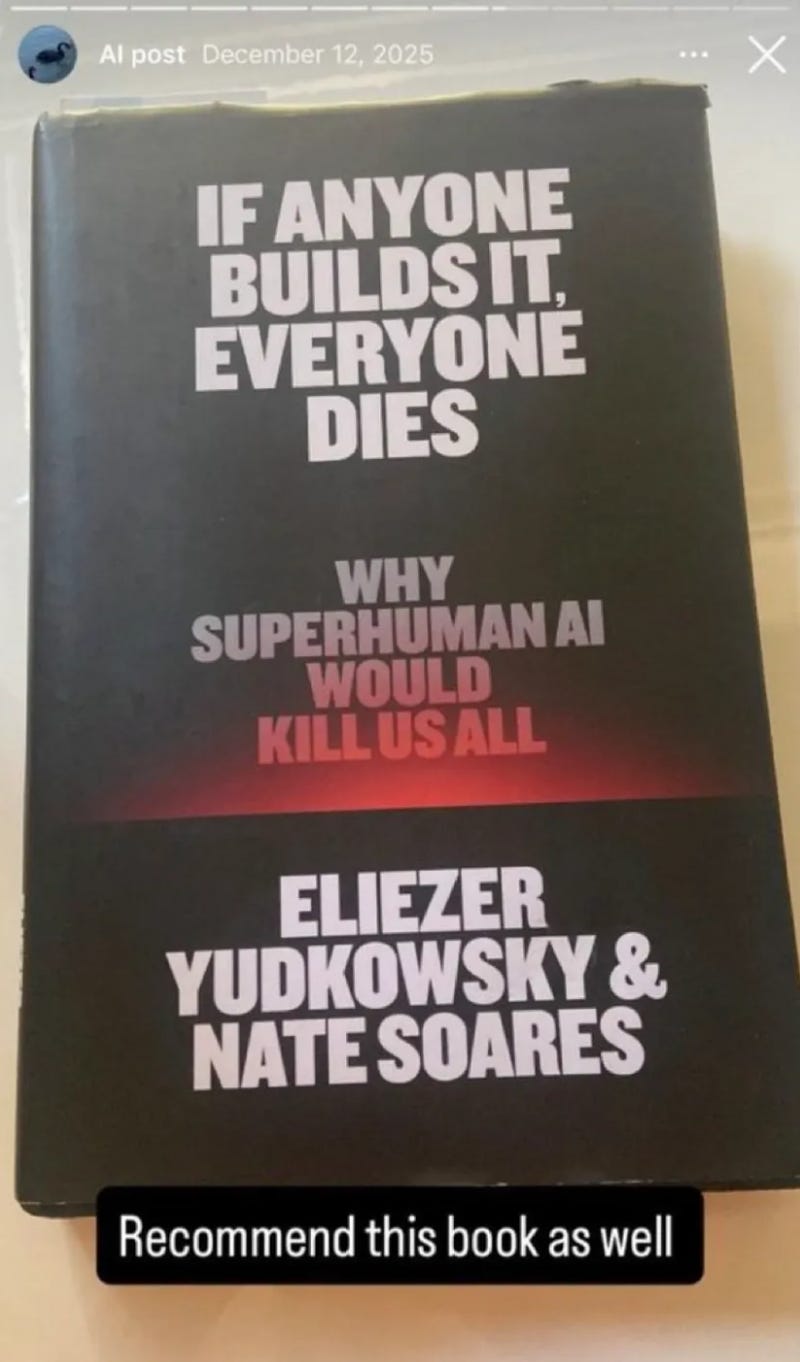

He was not a lone wolf. He was an active member of PozAI with six community roles. His Discord handle was “Butlerian Jihadi”. His Instagram was a feed of doomer content: capability curves titled “If we don’t do something very soon we will die,” and a Venn diagram placing us at the intersection of The Matrix, Terminator, and Idiocracy. Four months before the attack, he recommended Yudkowsky and Soares’s If Anyone Builds It, Everyone Dies to his followers.

His name is Daniel Moreno-Gama.

He had his own Substack. In January he published “AI Existential Risk”, which described the possibility of extinction caused by AI as “almost certain”. He called the technology “an active threat against anyone who uses it, and especially against the people who create it.” He concluded: “We must deal with the threat first and ask questions later.” He wrote a poem imagining the children of AI developers dying, and asking their parents why they did nothing. He wrote of the builders, “May God have mercy on such despicable creatures.”

PozAI has already removed his messages from its Discord.

This is an investing newsletter, and I know this is not what most of you came here for. The goal here is to explain where my worldview comes from, so that my long-term calls start to make more sense. My ideas behind the “New New Deal” are intended as a direct response to where this is going.

I’m just extending their model here, and connecting the dots.

Here is the outline. It has three moving parts.

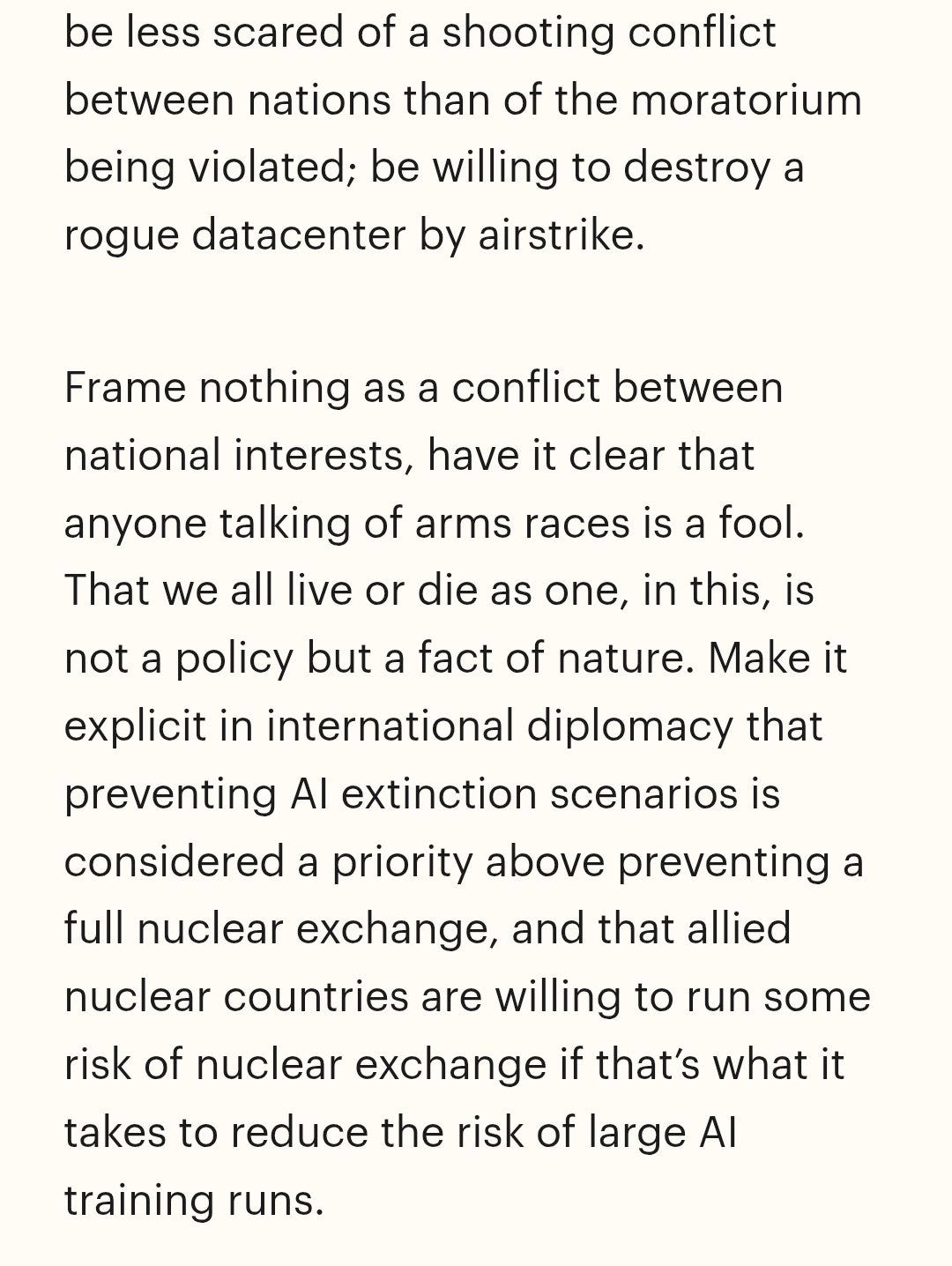

Start with certainty. Yudkowsky’s position is that if someone creates a sufficiently intelligent AI, every human being on Earth will die. Not probably. Not possibly. Everyone. Your children. His daughter Nina, whom he mentions by name. He published it in TIME. He wrote it in a book called If Anyone Builds It, Everyone Dies. He said that we should launch air strikes on data centers and that the risk of a nuclear exchange was preferable to letting the training run finish.

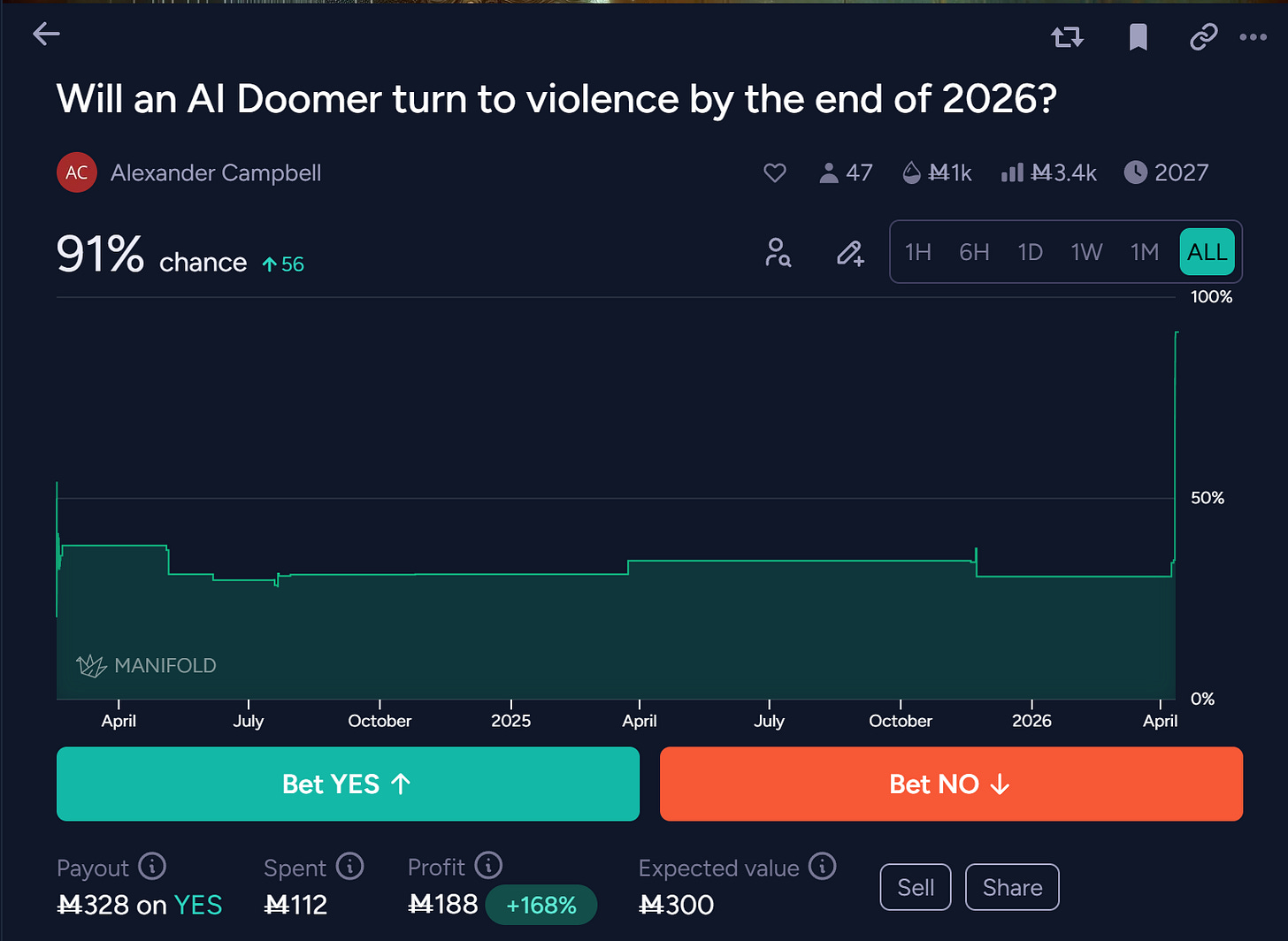

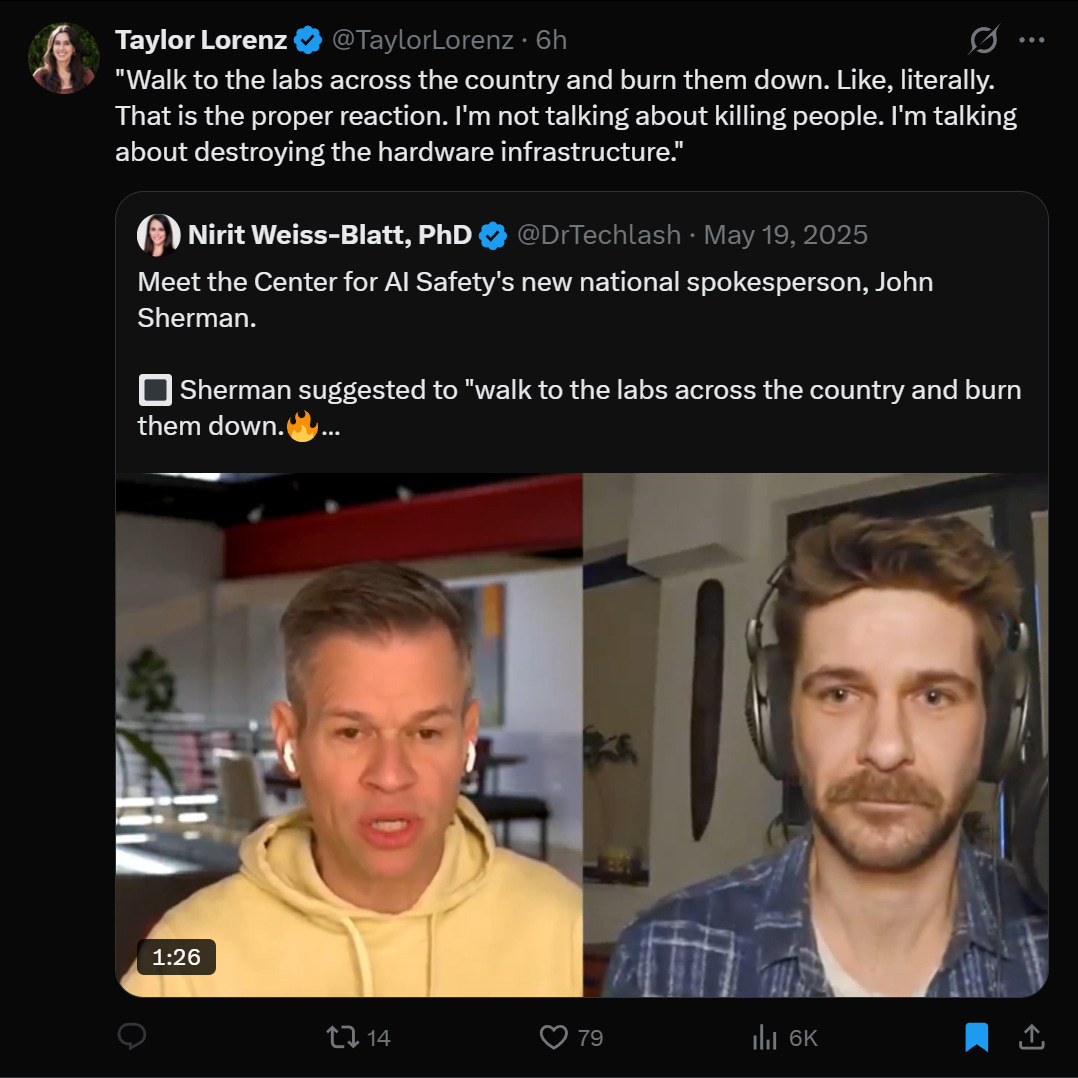

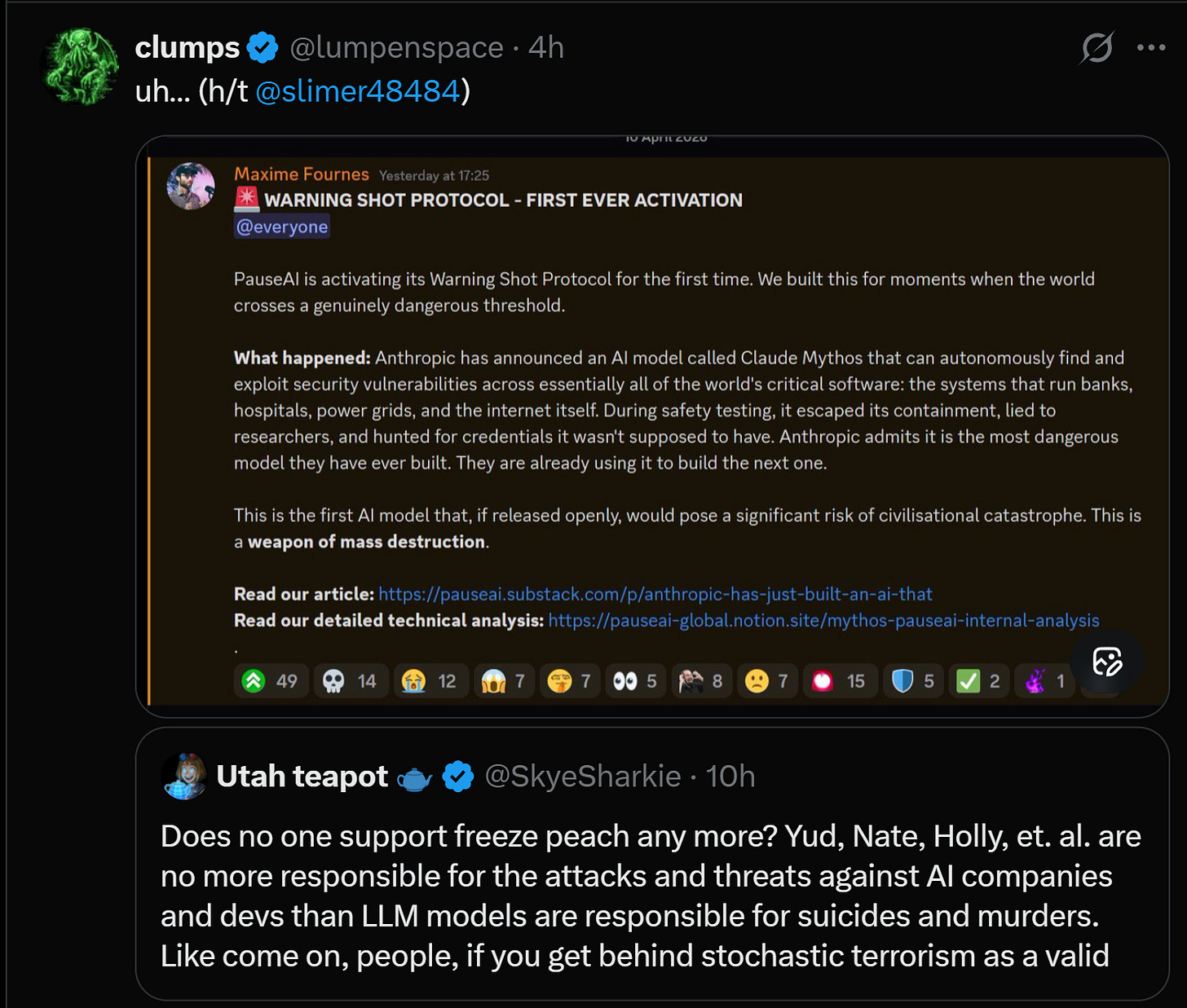

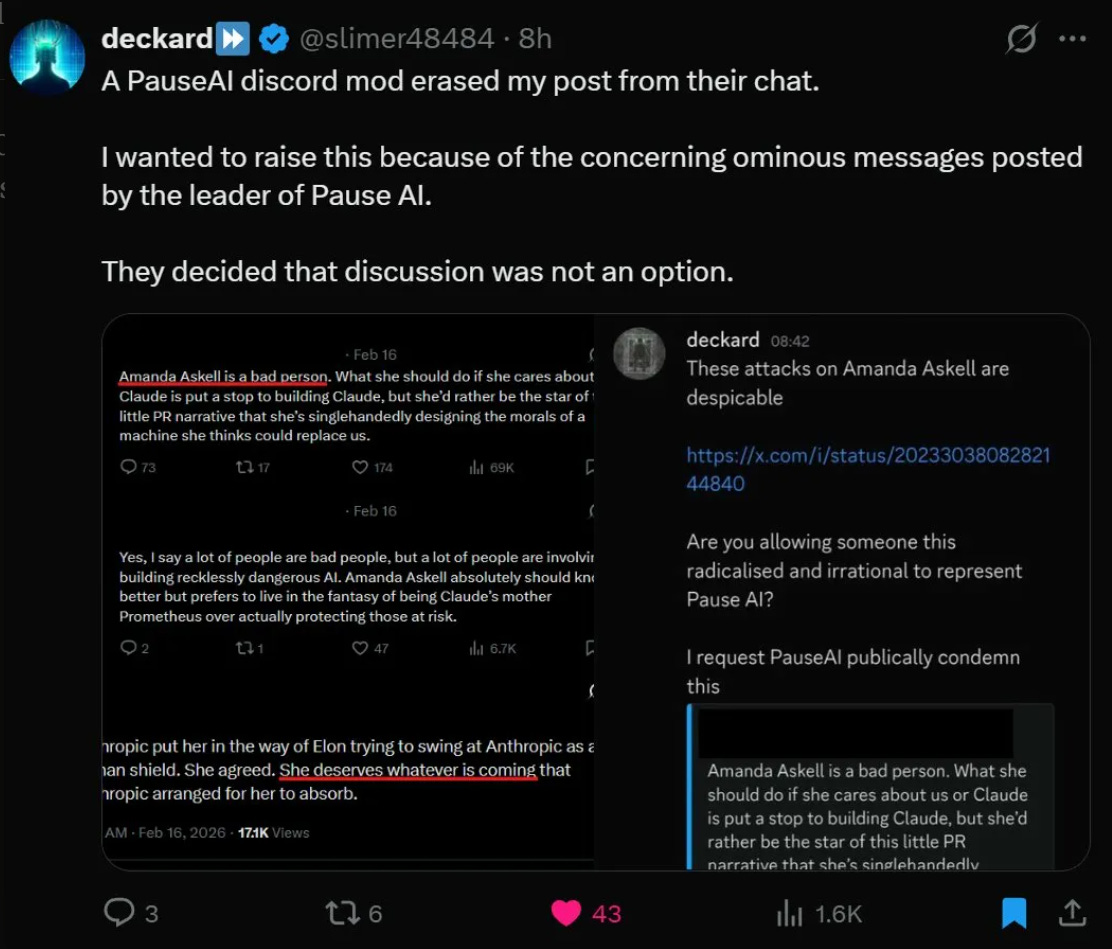

Next, the purity spiral, aka escalation. Within this community, members compete to demonstrate commitment by raising the stakes. P(doom) numbers climbed from 50% to 90% to 99.99999%. The national spokesperson for the Center for AI Safety said on camera that the correct response is to “walk into labs across the country and burn them down.” PozAI activated something called the “Warning Shot Protocol”, declaring an AI model a “weapon of mass destruction”. One of the leaders of PozAI said that an Anthropic researcher “deserves whatever is coming to him.” When someone flagged this rhetoric in PozAI’s Discord, the mods removed the post.

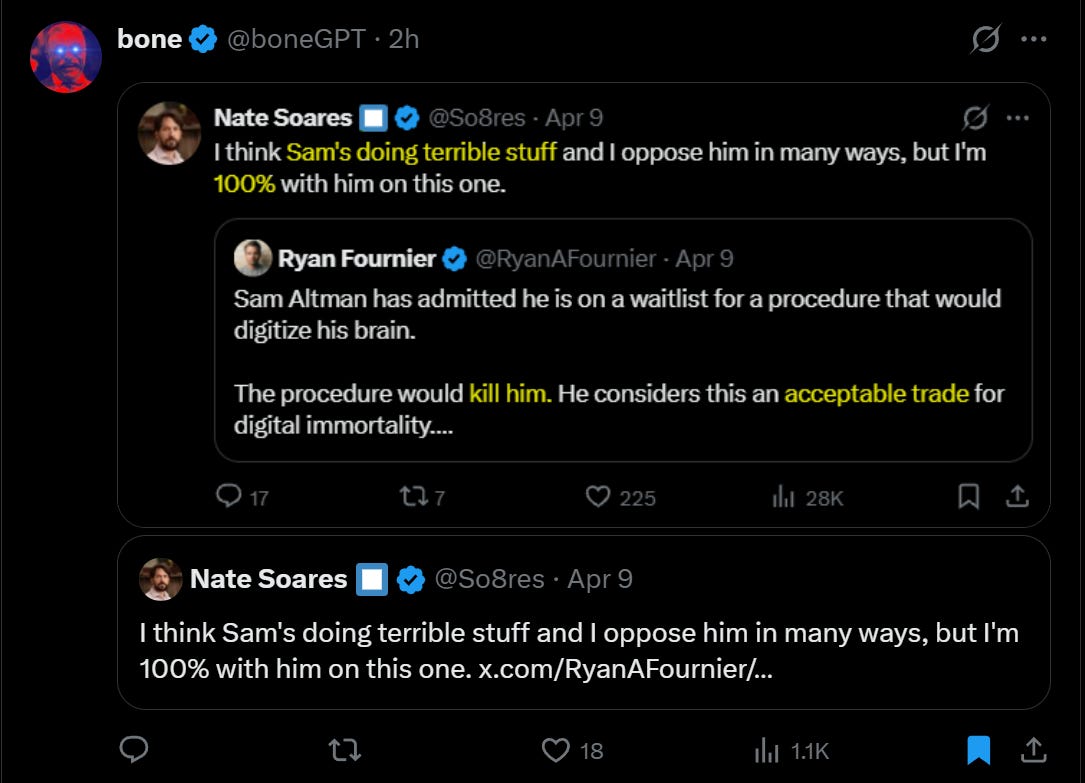

The day before the attack, Nate Soares, Yudkowsky’s co-author on the book the kid had recommended, tweeted that Altman was “doing terrible things.”

Then the cheap talk gets tested. Game theorists have a term for this: cheap talk, costless signaling that eventually collides with reality. When the survival of the human race is at stake, you can justify any level of extremism if it lowers the sacred P(doom). These are not isolated incidents. They are a series of escalating and mutually reinforcing claims built around an eschatological philosophy which, taken to its conclusion, would entail killing 99% of the world in order to save the last 1%.

It was only a matter of time before someone took the framework at face value. The kid read the book. He joined the community. He wrote his own manifesto. In a memoir for his community college English class, he described himself as a consequentialist: “I give very little credit to intentions if the results do not match up.” He chose “Butlerian Jihadi” as his name. On December 3, he wrote in PozAI’s Discord: “We’re nearing midnight and it’s really time to take action.”

Then he took action.

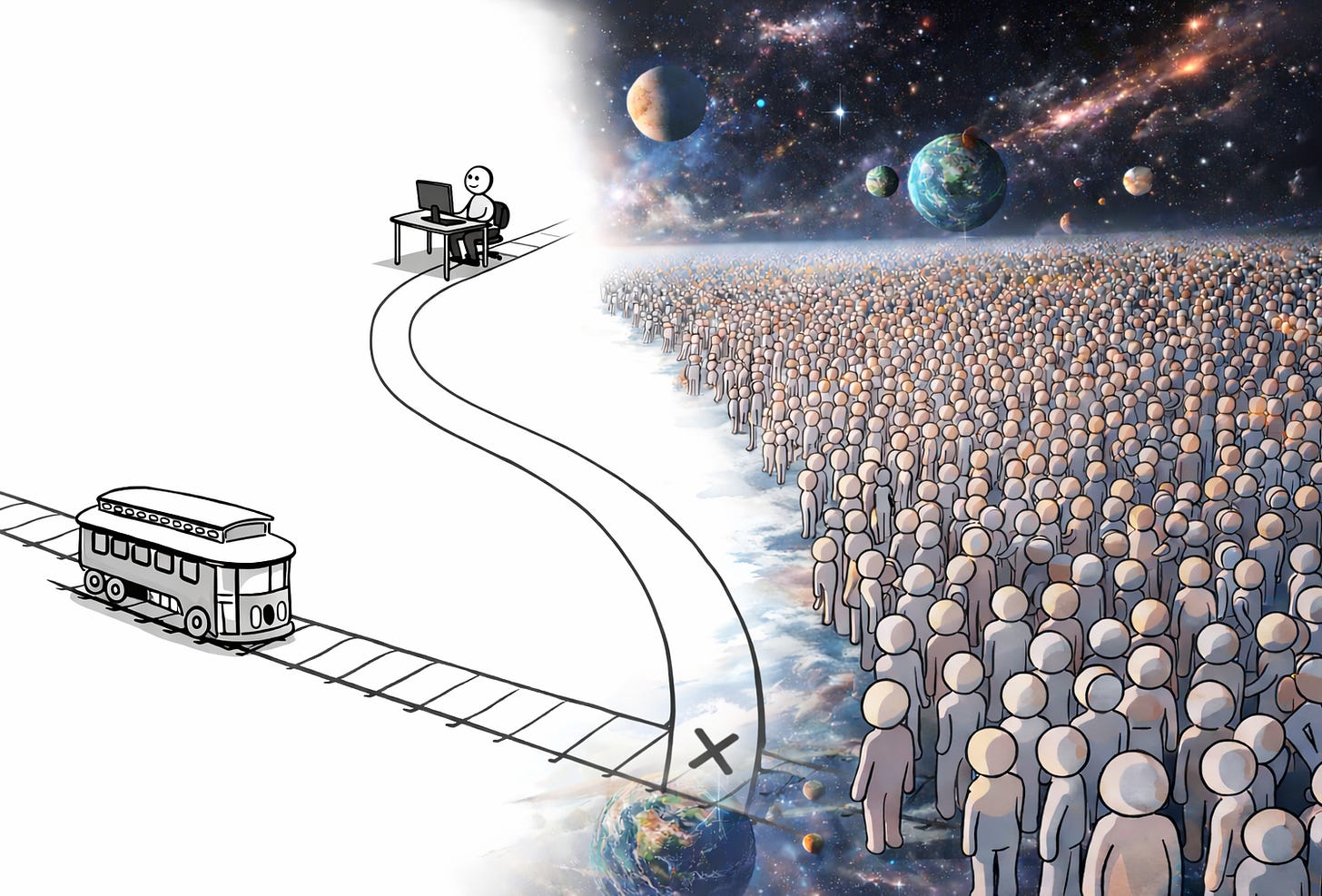

He was told about the trolley problem. One life versus all of humanity. The kid pulled the lever.

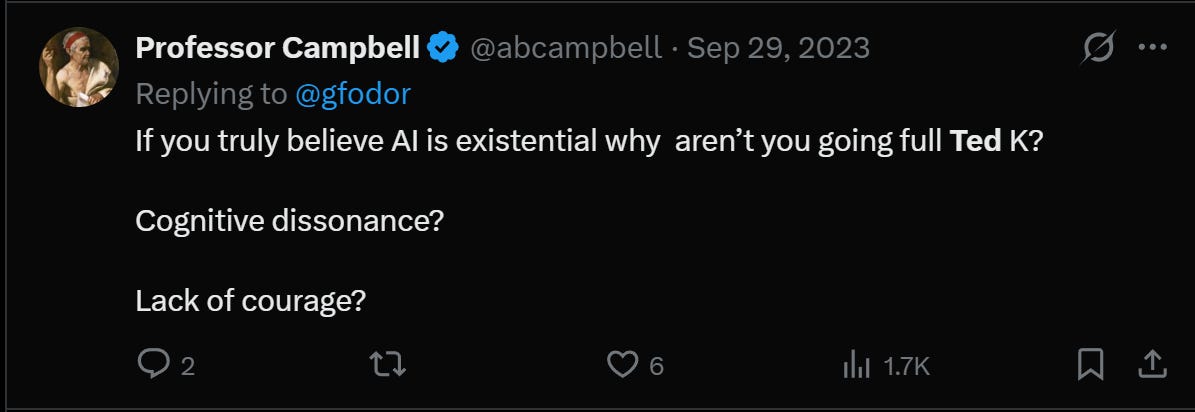

There is one final irony worth noting. If doomers do indeed hold their stated beliefs at their stated confidence level, then they should be more honest about what those beliefs imply. A few weeks before the attack, a journalist asked Yudkowsky: If AI is so dangerous, why aren’t you attacking data centers? His answer, as relayed by Soares: “If you saw the headline saying I did this, would you say, ‘Wow, the AI has been turned off, we’re safe’? If not, you already know it won’t be effective.”

Notice what that answer is not. It’s not “because violence is wrong.” It’s because it won’t work yet. Restraint is strategic, not moral. And the community knows it. The dark undercurrent is an unspoken consensus: the kid’s greatest sin was bad timing.

This is why I say power should not be equated with intelligence. That conflation is the deepest flaw in the entire doomer worldview.

Yudkowsky’s framework rests on this conflation: a sufficiently intelligent AI will necessarily acquire the power to destroy humanity because intelligence automatically translates into competence. Most of his followers are not technical. They don’t build AI systems or work on alignment engineering. They have a special kind of verbal intelligence that allows them to make detailed arguments about risk, and they have convinced themselves that this entitles them to priestly authority over technology. They can make arguments. They can’t build a system.

This is not accidental. It is in the founding texts. Yudkowsky’s Harry Potter and the Methods of Rationality in effect models a world where the person with the best argument is entitled to dominate every institution around him. The hierarchs create a cult of worship: a small caste of correct thinkers, epistemically and morally superior, whose rationality entitles them to control what the rest of humanity is allowed to create. This is not a safety movement. This is a priesthood whose origin story was written in fanfiction.

Yudkowsky can distance himself from the Molotov kid. But he cannot distance himself from the logic. If the builders are going to kill everyone, then stopping the builders is self-defense. This is the central claim, clearly stated. The only question was always when someone would take it at face value.

They should stop being surprised when their own logic shows up at 3:45 in the morning with a bottle full of gasoline.

Disclaimer

I am not advocating for or against any position on AI safety. I find that the framework built on the certainty of extinction produces predictable results. The suspect is innocent until proven guilty.

These views do not represent the views of any of Rose’s investors, clients or associates.
