Here at CES 2026, AMD showed off its upcoming Venice series of server CPUs and its upcoming MI400 series of datacenter accelerators. AMD talked about the specifications of both Venice and the MI400 series at its Advancing AI event back in June 2025, but this is the first time AMD has shown off the silicon for both product lines.

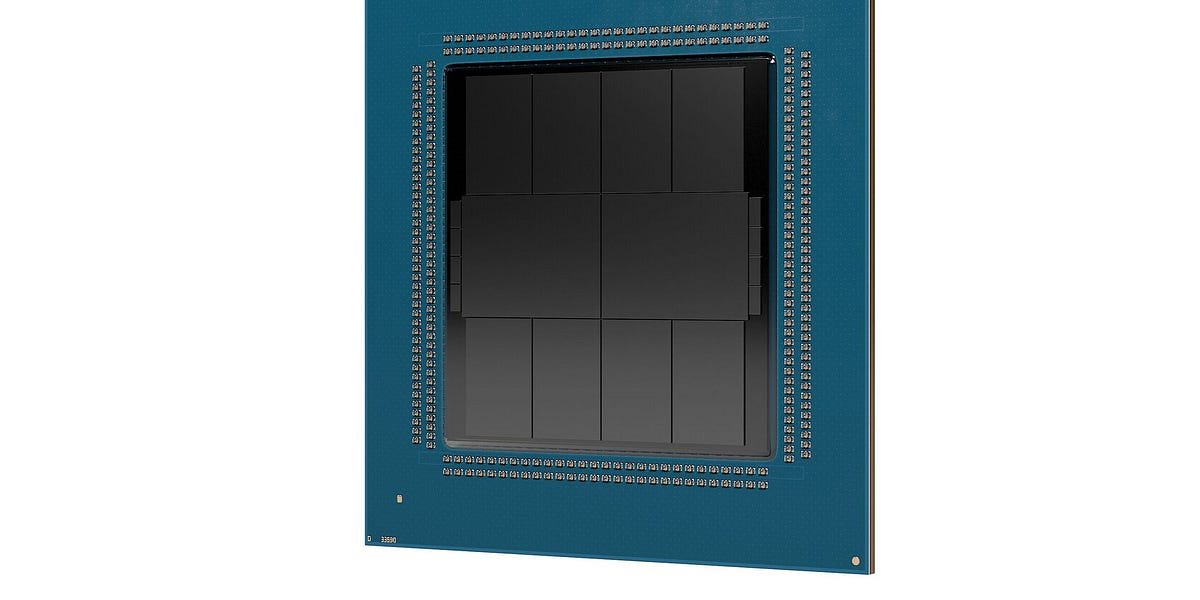

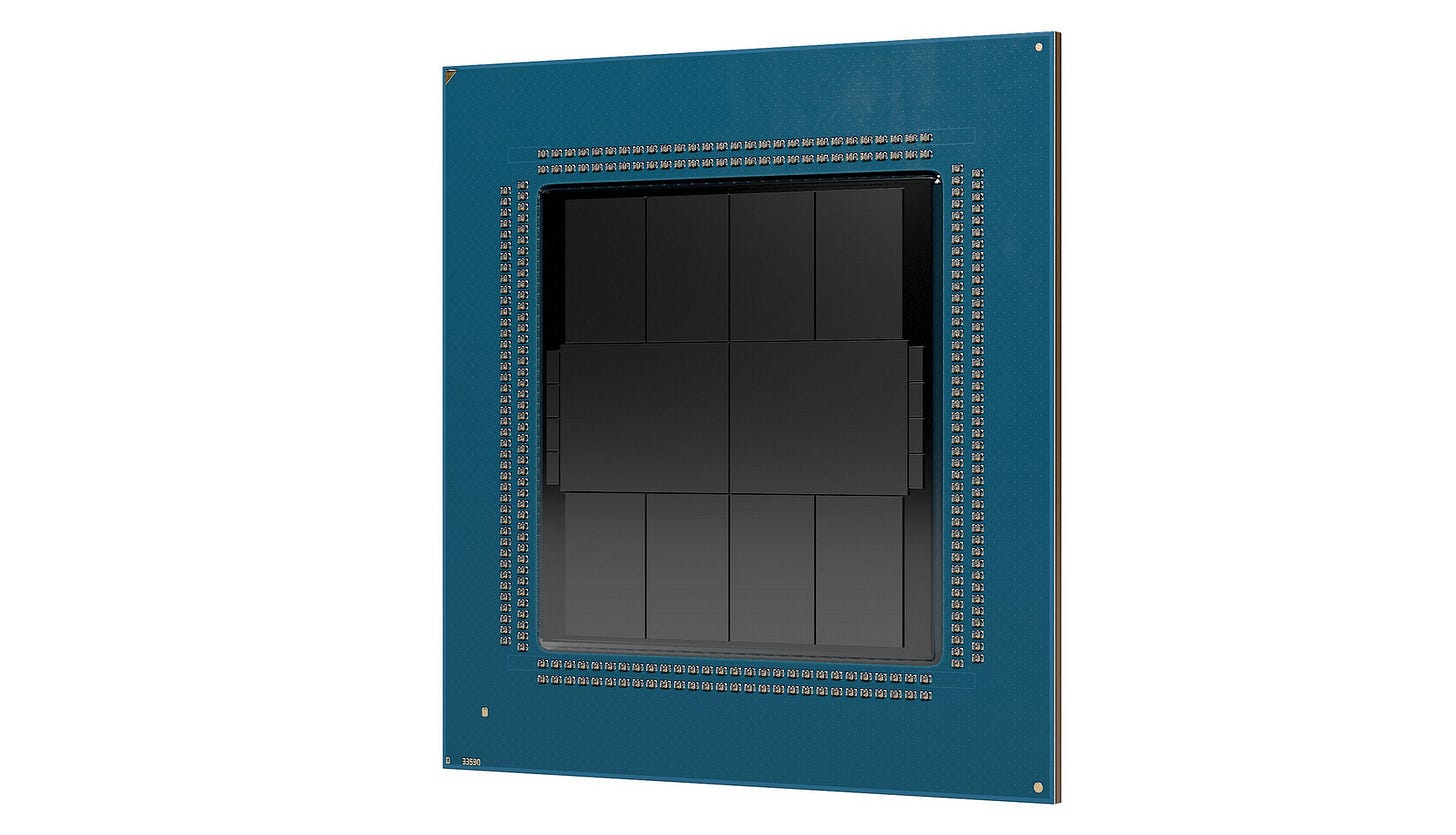

Starting with Venice, the first thing to note is that the packaging connecting the CCDs to the IO dies is different. Instead of running wires between the CCDs and the IO dies through the package’s organic substrate, as AMD has done since EPYC Rome, Venice appears to use a more advanced form of packaging similar to Strix Halo or the MI250X. Another change is that Venice appears to have two IO dies instead of the single IO die that prior EPYC CPUs had.

Venice has 8 CCDs, each with 32 cores, for a total of up to 256 cores per Venice package. Measuring each of the dies, each CCD comes out to approximately 165mm² of N2 silicon. If AMD has stuck to 4MB of L3 per core, then each of these CCDs has 32 Zen 6 cores and 128MB of L3 cache, along with the die-to-die interface for CCD <-> IO die communication. At approximately 165mm² per CCD, a Zen 6 core plus its 4MB of L3 would be about 5mm² each, which is similar to Zen 5’s approximately 5.34mm² on N3 when counting both the Zen 5 core and 4MB of L3 cache.
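The per-core estimate above is simple division, and a short Python sketch makes the inputs explicit. The die area is an estimate taken from photos, and 4MB of L3 per core is an assumption rather than a confirmed spec. Since the CCD area also includes the die-to-die interface, the result is an upper bound on the core plus L3 area.

```python
# Figures quoted in the text; the die area is an estimate measured from photos.
CCD_AREA_MM2 = 165.0
CORES_PER_CCD = 32
CCDS_PER_PACKAGE = 8
L3_PER_CORE_MB = 4             # assumption: AMD keeps 4MB of L3 per core
ZEN5_CORE_PLUS_L3_MM2 = 5.34   # Zen 5 core + 4MB L3, as quoted in the text

total_cores = CCDS_PER_PACKAGE * CORES_PER_CCD
l3_per_ccd_mb = CORES_PER_CCD * L3_PER_CORE_MB

# Upper bound per core: the CCD area also contains the die-to-die interface.
area_per_core_mm2 = CCD_AREA_MM2 / CORES_PER_CCD
ratio_vs_zen5 = area_per_core_mm2 / ZEN5_CORE_PLUS_L3_MM2

print(f"{total_cores} cores, {l3_per_ccd_mb}MB of L3 per CCD")
print(f"{area_per_core_mm2:.2f} mm2 per core+L3 ({ratio_vs_zen5:.0%} of Zen 5)")
```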

Moving on to the IO dies, each appears to be approximately 353mm², for a total of just over 700mm² of silicon dedicated to IO. This is a massive increase from the roughly 400mm² that prior EPYC CPUs dedicated to their IO dies. The two IO dies appear to use an advanced packaging similar to that of the CCDs. Next to the IO dies are 8 smaller dies, 4 on each side of the package, which are likely either structural silicon or deep trench capacitor dies meant to improve power delivery to the CCDs and IO dies.
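To put the IO die numbers in context, here is a rough tally of the Venice package in Python. All areas are the photo-based estimates from the text, and the 8 small dies beside the IO dies are left out because their size was not measured.

```python
# Estimated areas from the text (measured from photos, not official figures).
IO_DIE_AREA_MM2 = 353.0
IO_DIES = 2
PRIOR_IO_AREA_MM2 = 400.0   # rough IO silicon on prior EPYC CPUs
CCD_AREA_MM2 = 165.0
CCDS = 8

io_total_mm2 = IO_DIE_AREA_MM2 * IO_DIES
io_growth = io_total_mm2 / PRIOR_IO_AREA_MM2
ccd_total_mm2 = CCD_AREA_MM2 * CCDS

# Excludes the 8 small dies next to the IO dies, whose size is unknown.
logic_total_mm2 = io_total_mm2 + ccd_total_mm2
io_share = io_total_mm2 / logic_total_mm2

print(f"IO silicon: {io_total_mm2:.0f} mm2 ({io_growth:.2f}x prior EPYC)")
print(f"CCD silicon: {ccd_total_mm2:.0f} mm2")
print(f"CCD + IO total: {logic_total_mm2:.0f} mm2, IO share {io_share:.0%}")
```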

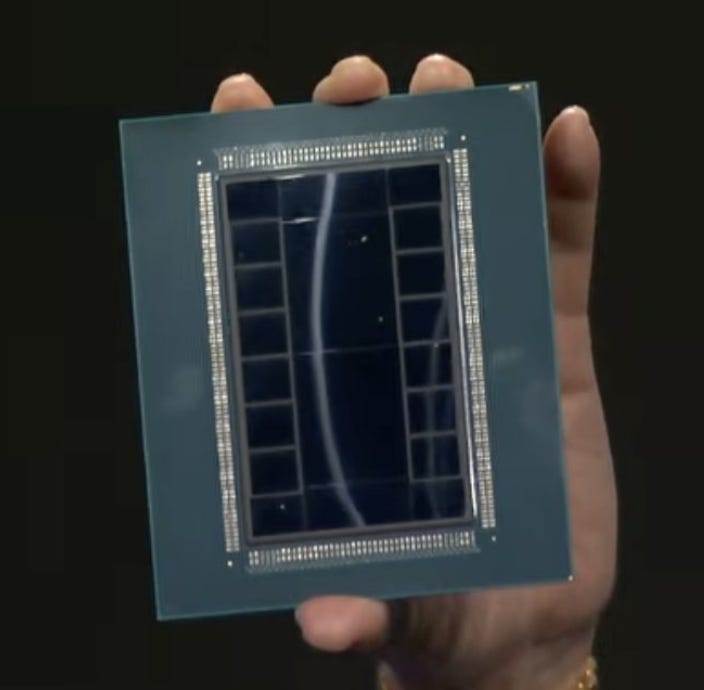

Moving from Venice to the MI400 accelerator, this is a massive package with 12 HBM4 dies and “twelve 2 nanometer and 3 nanometer compute and IO dies.” It appears to have two base dies, just like the MI350. But unlike the MI350, there appear to be two extra dies above and below the base dies. These two extra dies are likely for off-package IO such as PCIe, UALink, etc.

Calculating the die sizes of the base dies and the IO dies, each of the two base dies is approximately 747mm², and each of the off-package IO dies is approximately 220mm². As for the compute dies, while the packaging precludes any visual demarcation of the individual compute dies, it is likely that there are 8 compute dies, with 4 compute dies on each base die. So while we can’t figure out the exact die size of the compute dies, the maximum size is approximately 180mm². The compute chiplet is likely in the 140mm² to 160mm² range, but that is a best guess that will have to wait for confirmation.
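As a sanity check on the die counts and sizes above, here is a small Python sketch. It rests on two assumptions that AMD has not confirmed: that the quoted “twelve” dies counts the base dies alongside the off-package IO dies, and that four compute dies cannot overhang the base die they sit on. This is one way to reason about a ceiling, not necessarily how the roughly 180mm² figure was derived.

```python
# Estimated areas from the text (measured from photos, not official figures).
BASE_DIE_AREA_MM2 = 747.0
BASE_DIES = 2
IO_DIES = 2
COMPUTE_DIES_PER_BASE = 4     # the article's guess, not confirmed
ADVERTISED_DIE_COUNT = 12     # "twelve ... compute and IO dies"

# Assumption: the advertised count covers compute, base, and off-package IO dies.
compute_dies = BASE_DIES * COMPUTE_DIES_PER_BASE
die_count = compute_dies + BASE_DIES + IO_DIES

# Assumption: compute dies fit entirely within their base die's footprint,
# so an equal four-way split of the base die is a naive ceiling.
naive_max_compute_mm2 = BASE_DIE_AREA_MM2 / COMPUTE_DIES_PER_BASE

print(f"{compute_dies} compute + {BASE_DIES} base + {IO_DIES} IO = {die_count} dies")
print(f"matches advertised count: {die_count == ADVERTISED_DIE_COUNT}")
print(f"naive ceiling per compute die: {naive_max_compute_mm2:.2f} mm2")
```

The naive ceiling lands slightly above the roughly 180mm² maximum quoted above, which is what you would expect once some margin around each compute die is accounted for.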

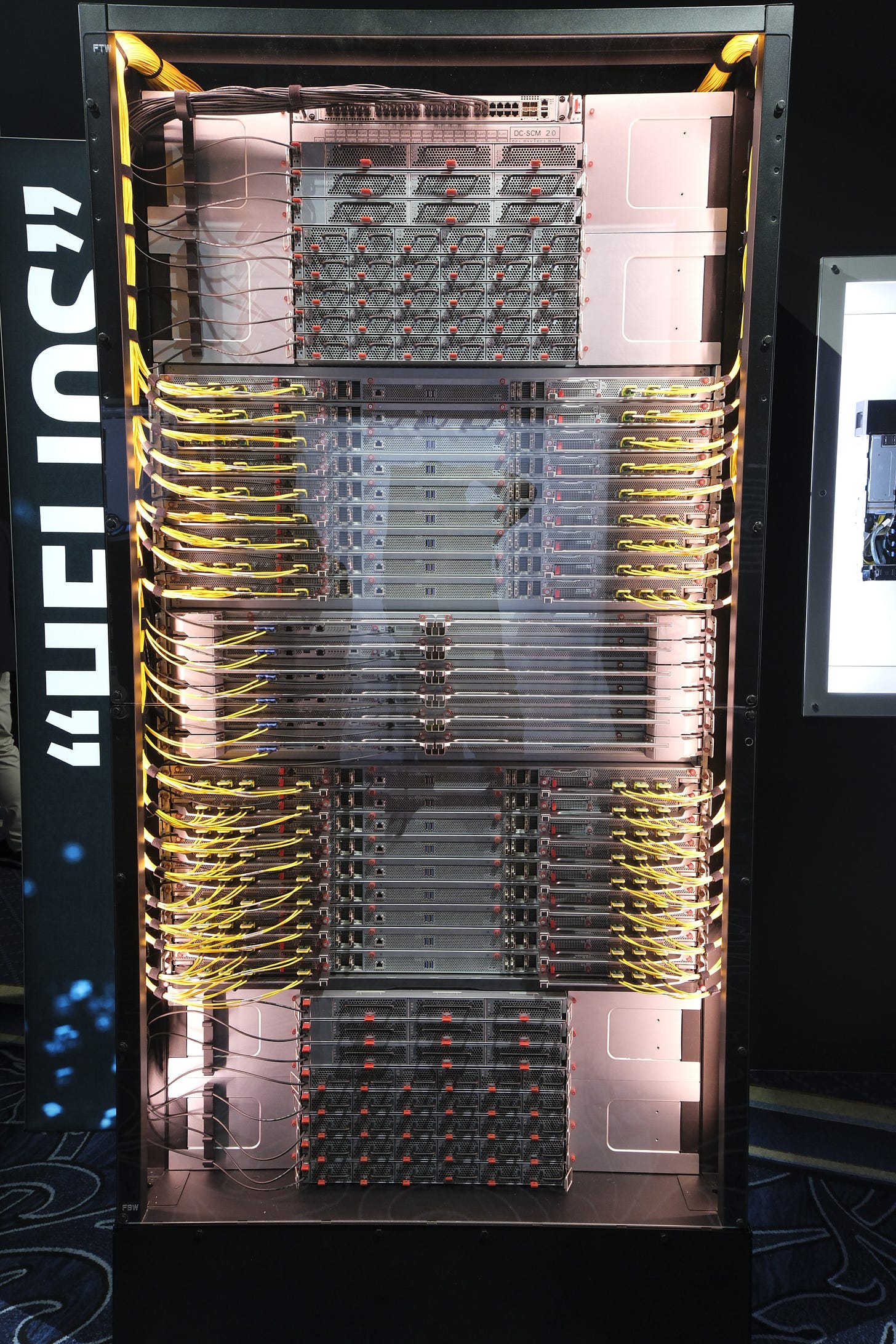

The MI455X and Venice are the two SoCs that are going to power AMD’s Helios AI rack, but they aren’t the only new Zen 6 and MI400 series products that AMD announced at CES. AMD announced a third member of the MI400 family, the MI440X, which joins the MI430X and MI455X. The MI440X is designed to fit into 8-way UBB boxes as a direct replacement for the MI300/350 series.

AMD also announced Venice-X, which is likely going to be a V-Cache version of Venice. This is interesting because not only did AMD skip Turin-X, but if there is a 256 core version of Venice-X, it would be the first time that a high core count CCD has been able to support a V-Cache die. If AMD sticks to the same ratio of base die cache to V-Cache die cache, each 32 core CCD would have up to 384MB of L3 cache, which works out to 3 gigabytes of L3 cache across the chip.
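The 384MB and 3 gigabyte figures follow from a short chain of assumptions, sketched here in Python. It assumes 4MB of base L3 per core and the 2:1 stacked-to-base ratio of existing V-Cache parts (64MB of V-Cache on top of a 32MB CCD); neither is confirmed for Venice-X.

```python
CORES_PER_CCD = 32
CCDS_PER_PACKAGE = 8
BASE_L3_PER_CORE_MB = 4    # assumption: 4MB of base L3 per core
VCACHE_TO_BASE_RATIO = 2   # assumption: same 2:1 ratio as existing V-Cache parts

base_l3_per_ccd_mb = CORES_PER_CCD * BASE_L3_PER_CORE_MB
vcache_per_ccd_mb = base_l3_per_ccd_mb * VCACHE_TO_BASE_RATIO
l3_per_ccd_mb = base_l3_per_ccd_mb + vcache_per_ccd_mb

l3_per_package_mb = l3_per_ccd_mb * CCDS_PER_PACKAGE
l3_per_package_gb = l3_per_package_mb / 1024

print(f"per CCD: {base_l3_per_ccd_mb}MB base + {vcache_per_ccd_mb}MB V-Cache "
      f"= {l3_per_ccd_mb}MB")
print(f"per package: {l3_per_package_mb}MB = {l3_per_package_gb:.0f}GB")
```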

Both the Venice and MI400 series are scheduled to launch later this year and I can’t wait to learn more about the underlying architecture of both SoCs.

If you like the content, then consider heading over to the Patreon or PayPal if you want to toss a few bucks to Chips and Cheese. Also consider joining the Discord.

<a href