TL;DR: I scanned every public GitLab Cloud repository (~5.6 million) with Trufflehog, found over 17,000 verified live secrets, and earned over $9,000 in bounties along the way.

This guest post was developed by security engineer Luke Marshall as part of Truffle Security’s Research CFP Program. Luke specializes in investigating exposed secrets in open-source ecosystems, a path that led him to bug bounty work and responsible disclosure.

This is the second and final post in a two-part series exploring secrets exposed on popular Git platforms. Check out the Bitbucket research here.

What is GitLab?

GitLab is very similar to Bitbucket and GitHub: it is a Git-based code hosting platform, launched in 2011. GitLab was the last of the three “big” platforms to be released, but surprisingly, it has almost twice as many public repositories as Bitbucket.

It has all the same features as GitHub and Bitbucket that make it an attractive target for exposed credentials:

- It uses Git, which hides secrets deep in the commit history.
- It hosts several million public repositories.

Searching all public GitLab cloud repositories

As with the Bitbucket research, this project aims to provide accurate insight into the state of exposed credentials across all public GitLab Cloud repositories. That meant I needed a way to list every single public GitLab Cloud repository.

GitLab exposes a public API endpoint (https://gitlab.com/api/v4/projects) which can be used to retrieve a list of repositories by sequentially paging through the results.

A simple script handled this for me.
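A minimal sketch of such a listing script, using only the Python standard library. It assumes keyset pagination (`pagination=keyset&order_by=id`), which GitLab supports for `/projects`, and follows the `rel="next"` URL in the `Link` response header until it disappears. The post does not say whether the original script used keyset or offset pagination; `FIRST_PAGE`, `http_get`, and `iter_public_projects` are illustrative names, not the author's code.

```python
import json
import re
import urllib.request
from typing import Callable, Iterator, List, Optional, Tuple

# Assumption: keyset pagination, which GitLab supports for /projects ordered by id.
FIRST_PAGE = (
    "https://gitlab.com/api/v4/projects"
    "?visibility=public&pagination=keyset&order_by=id&sort=asc&per_page=100"
)

Page = Tuple[List[dict], Optional[str]]  # (projects on this page, raw Link header)


def parse_next_link(link_header: Optional[str]) -> Optional[str]:
    """Return the URL marked rel="next" in a Link header, or None on the last page."""
    if not link_header:
        return None
    for part in link_header.split(","):
        match = re.match(r'\s*<([^>]+)>\s*;\s*rel="next"', part)
        if match:
            return match.group(1)
    return None


def http_get(url: str) -> Page:
    """Fetch one page of projects anonymously (subject to GitLab.com rate limits)."""
    request = urllib.request.Request(url, headers={"Accept": "application/json"})
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response), response.headers.get("Link")


def iter_public_projects(
    fetch: Callable[[str], Page] = http_get, start_url: str = FIRST_PAGE
) -> Iterator[str]:
    """Yield "namespace/project" paths, following rel="next" until it disappears."""
    url: Optional[str] = start_url
    while url:
        projects, link_header = fetch(url)
        for project in projects:
            yield project["path_with_namespace"]
        url = parse_next_link(link_header)
```

In practice you would stream the results to a file (for example, one path per line) so a crash midway does not lose progress. The `fetch` parameter exists so the paging logic can be tested without touching the network.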

At the time of initial research (10/09/2025), GitLab returned over 5,600,000 repositories. Since this scan, approximately 100,000 new repos have been published.

Building the automation

I used the same automation as in the Bitbucket research: an AWS Lambda function connected to an Amazon Simple Queue Service (SQS) queue. This cost me about $770, but it allowed me to scan 5,600,000 repositories in about 24 hours.

My automation consisted of two main components:

- A local Python script that sent all 5,600,000 repository names to an AWS SQS queue, which acted as a durable task list.
- An AWS Lambda function that (a) scanned each repository with Trufflehog and (b) logged the results.
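A minimal sketch of the first component, the local producer script, under stated assumptions: repository paths are sent as plain-text message bodies, and the client is a boto3 SQS client (`boto3.client("sqs")`), whose `send_message_batch` call accepts at most 10 entries per request. `enqueue_repos` and `chunks` are hypothetical helper names; the author's actual script is not shown in the post.

```python
from typing import Iterable, Iterator, List

# Amazon SQS accepts at most 10 messages per SendMessageBatch request.
SQS_BATCH_LIMIT = 10


def chunks(items: Iterable[str], size: int) -> Iterator[List[str]]:
    """Group an iterable into lists of at most `size` items."""
    batch: List[str] = []
    for item in items:
        batch.append(item)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def enqueue_repos(sqs, queue_url: str, repo_paths: Iterable[str]) -> int:
    """Send one message per repository path; return how many SQS accepted.

    `sqs` is expected to behave like boto3.client("sqs").
    """
    sent = 0
    for batch in chunks(repo_paths, SQS_BATCH_LIMIT):
        # Entry Ids only need to be unique within a single batch request.
        entries = [{"Id": str(i), "MessageBody": path} for i, path in enumerate(batch)]
        response = sqs.send_message_batch(QueueUrl=queue_url, Entries=entries)
        failed = response.get("Failed", [])
        if failed:
            raise RuntimeError(f"SQS rejected {len(failed)} message(s): {failed}")
        sent += len(response.get("Successful", []))
    return sent
```

Usage would look like `enqueue_repos(boto3.client("sqs"), queue_url, paths)`, where `queue_url` and `paths` (for example, lines read from the listing output) are placeholders. Failing loudly on partial batch failures keeps the queue an accurate task list.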

The beauty of this architecture was that each repository was queued only once, and if something broke, scanning would resume seamlessly from the queue.

The scanning architecture looked like this:

AWS Lambda requires container images to embed the Lambda Runtime Interface Client (RIC) and a handler. Since the pre-built Trufflehog image is Alpine-based, it will not run as a Lambda on its own.

I created a custom Lambda function using the AWS Python base image (which already includes the RIC and the correct entrypoint), copied the Trufflehog binary into that image, and then invoked it from the handler.
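A minimal sketch of what such a handler might look like. It assumes each SQS message body is a `namespace/project` path, that the binary was copied to `/usr/local/bin/trufflehog`, and that the SQS event source mapping has `ReportBatchItemFailures` enabled so failed messages return to the queue. The flags used (`git`, `--only-verified`, `--json`, `--no-update`) are standard TruffleHog options, but this is not necessarily the exact command the author ran.

```python
import json
import subprocess
from typing import Callable, Dict, List

# Assumption: where the Dockerfile copied the TruffleHog binary.
TRUFFLEHOG_BIN = "/usr/local/bin/trufflehog"


def build_command(repo_path: str) -> List[str]:
    """Build a TruffleHog invocation for one public GitLab repository."""
    return [
        TRUFFLEHOG_BIN,
        "git",
        f"https://gitlab.com/{repo_path}.git",
        "--only-verified",  # keep only secrets that verified as live
        "--json",           # emit one JSON object per finding
        "--no-update",      # skip the self-update check in a read-only container
    ]


def parse_findings(stdout: str) -> List[dict]:
    """Decode TruffleHog's JSON-lines output, ignoring anything that isn't JSON."""
    findings = []
    for line in stdout.splitlines():
        line = line.strip()
        if not line.startswith("{"):
            continue
        try:
            findings.append(json.loads(line))
        except json.JSONDecodeError:
            continue
    return findings


def handler(event: dict, context: object, run: Callable = subprocess.run) -> Dict[str, list]:
    """SQS-triggered entrypoint: scan each repo and report per-message failures."""
    failures = []
    for record in event.get("Records", []):
        repo_path = record["body"]
        try:
            # Stay under Lambda's 15-minute (900 s) maximum run time.
            proc = run(build_command(repo_path), capture_output=True, text=True, timeout=840)
            if proc.returncode != 0:
                print(json.dumps({"repo": repo_path, "error": proc.stderr[-500:]}))
            for finding in parse_findings(proc.stdout):
                # Anything printed from the handler ends up in CloudWatch Logs.
                print(json.dumps({"repo": repo_path, "finding": finding}))
        except Exception:
            # Returning the messageId tells SQS to make this message visible again.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

The `run` parameter defaults to `subprocess.run`; it is injectable only so the handler can be exercised without the binary present. A non-zero exit is logged rather than retried, on the assumption that most such failures (deleted or empty repositories) would not succeed on a second attempt.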

My Dockerfile looked like this:

This is the trufflehog command I used:

Each Lambda invocation executed a simple Trufflehog scan command with concurrency set to 1000. This setup allowed me to complete a scan of 5,600,000 repositories in just 24 hours.
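As a rough sanity check on these figures, the implied per-repository budget can be worked out directly. This assumes (not stated in the post) that the 1,000 refers to concurrent Lambda executions and that all of them stayed busy for the full 24 hours, so the per-repository time is an upper-bound average; the cost line uses the $770 total from above.

```latex
\begin{aligned}
\text{throughput} &= \frac{5{,}600{,}000\ \text{repos}}{86{,}400\ \text{s}} \approx 64.8\ \text{repos/s} \\
\text{time per repo per worker} &= \frac{1{,}000 \times 86{,}400\ \text{s}}{5{,}600{,}000\ \text{repos}} \approx 15.4\ \text{s} \\
\text{cost per 1,000 repos} &= \frac{\$770}{5{,}600} \approx \$0.14
\end{aligned}
```

In other words, under those assumptions each worker averaged at most about 15 seconds per repository, including cloning its full Git history.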

Results: Comparison of GitLab with Bitbucket

When comparing the two platforms, the differences in scale and findings are apparent:

| Metric | Bitbucket | GitLab |
|---|---|---|
| Public repositories scanned | ~2.6 million | ~5.6 million (2.1x) |
| Verified secrets found | 6,212 | 17,430 (2.8x) |

While I scanned roughly twice as many repositories on GitLab, I found almost three times as many verified secrets. This indicates a ~35% higher density of leaked secrets per repository on GitLab than on Bitbucket.

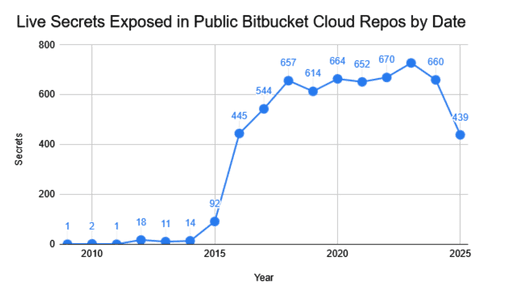

Secrets exposed by date

The graphs below show the frequency of live secrets exposed on GitLab Cloud and Bitbucket public repositories.

While Bitbucket’s exposure volume has effectively stagnated since 2018, consistently hovering in the mid-hundreds, GitLab saw explosive growth over the same period. This divergence suggests that the recent boom in AI development, and the associated proliferation of API keys, has disproportionately affected GitLab’s more active public repository landscape.

Fun fact: While Bitbucket has some old secrets, GitLab has some truly ancient ones. The earliest commit timestamp for a valid secret is 2009-12-16! These credentials must have been imported into this GitLab repository, as they predate GitLab’s release by almost 2 years!

Exposed secrets by type

As with Bitbucket, Google Cloud Platform (GCP) credentials were the most commonly leaked secret type in GitLab repositories. Roughly 1 in 1,060 repositories contained a set of valid GCP credentials!

In the graph below, I plotted the most frequently leaked keys by SaaS/cloud provider.

One striking finding was the distribution of GitLab-specific credentials. We found 406 valid GitLab keys leaked in GitLab repositories, but only 16 in Bitbucket repositories. This sharp contrast (406 vs. 16) strongly supports the idea of ‘platform locality’: developers are significantly more likely to accidentally commit a platform’s credentials to that same platform.

Automating the Triage Process

The 17,430 leaked secrets belonged to 2,804 unique domains, which meant I needed an efficient and accurate way to triage the results. I used an LLM (Claude Sonnet 3.7) capable of performing web searches to identify the best path for reporting a security vulnerability to each organization.

I divided the domains into batches of 40 and then sent them to Claude with this prompt.
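A minimal sketch of the batching step, assuming domains arrive as a flat list that may contain duplicates and mixed case. The prompt itself is not reproduced in this post, so `instructions` below is a hypothetical placeholder string, and `batch_domains`/`render_batch` are illustrative names. With 2,804 unique domains and a batch size of 40, this yields 71 batches (70 full batches plus one of 4).

```python
from typing import List, Sequence

BATCH_SIZE = 40  # batch size from the post


def batch_domains(domains: Sequence[str], size: int = BATCH_SIZE) -> List[List[str]]:
    """Deduplicate (case-insensitively), sort, and split domains into fixed-size batches."""
    unique = sorted({d.strip().lower() for d in domains if d.strip()})
    return [unique[i:i + size] for i in range(0, len(unique), size)]


def render_batch(batch: List[str], instructions: str) -> str:
    """Prepend (hypothetical) instructions to a newline-separated domain list."""
    return instructions.rstrip() + "\n\n" + "\n".join(batch)
```

Sorting before batching makes the batches reproducible, which helps when a batch has to be re-sent after a failed or low-quality model response.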

Of course, this wasn’t foolproof, and some organizations don’t have defined security programs, so I also “vibed up” a simple Python script that extracted metadata from Trufflehog results and dynamically generated disclosure emails. As a best effort to reach organizations where I could not find a specific security or executive contact, I used role-based email addresses: security@, support@, and contact@.
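A minimal sketch of such an email generator, not the author's script. It assumes TruffleHog's JSON output for Git sources, where `DetectorName` names the secret type and commit details sit under `SourceMetadata.Data.Git` (`repository`, `file`, `commit`). The email wording and the `build_disclosure` name are hypothetical. The raw secret is deliberately never placed in the message.

```python
from typing import Dict, List

# Role-based mailboxes from the post, used when no dedicated contact was found.
ROLE_MAILBOXES = ("security", "support", "contact")

# Hypothetical wording; the author's actual template is not published here.
TEMPLATE = """Hello {domain} team,

While researching secrets exposed in public GitLab repositories, I found a
live {detector} credential that appears to belong to your organization.

Repository: {repository}
File: {file}
Commit: {commit}

Please revoke and rotate this credential as soon as possible.
"""


def build_disclosure(finding: dict, domain: str) -> Dict[str, object]:
    """Turn one TruffleHog JSON finding into a draft disclosure email.

    The raw secret is deliberately left out of the message body.
    """
    git = finding.get("SourceMetadata", {}).get("Data", {}).get("Git", {})
    detector = finding.get("DetectorName", "unknown")
    body = TEMPLATE.format(
        domain=domain,
        detector=detector,
        repository=git.get("repository", "n/a"),
        file=git.get("file", "n/a"),
        commit=git.get("commit", "n/a"),
    )
    recipients: List[str] = [f"{box}@{domain}" for box in ROLE_MAILBOXES]
    return {
        "to": recipients,
        "subject": f"Exposed {detector} credential in a public GitLab repository",
        "body": body,
    }
```

Pointing recipients at the repository, file, and commit is usually enough for them to locate and rotate the credential without the secret ever travelling over email.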

Both of these systems worked well and allowed me to disclose leaked secrets to more than 120 organizations. Separately, I reached out to 30+ SaaS providers to work directly with them on remediating credentials their customers had exposed.

Digging deeper

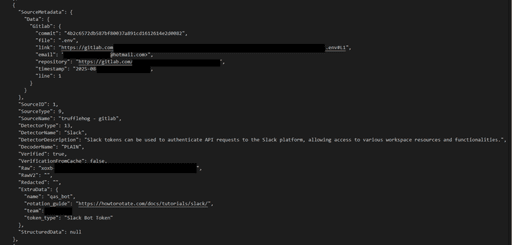

One of the main challenges in attributing secrets on Git platforms is that users committing with personal email addresses can expose an organization’s secret, or vice versa. Because of this, I tried to link each secret to an organization rather than to the individual who committed it. A good example of this was a Slack token committed to a public GitLab repository by an @hotmail.com address.

For some secrets, Trufflehog outputs an additional data field to aid in triage. For the Slack token, Trufflehog outputs the Team value, which often identifies the organization that uses the token.
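A minimal sketch of how that extra field could be used in bulk triage, flagging verified Slack tokens committed from personal email addresses. Two assumptions to note: that the team name appears under an `ExtraData` key named `team` in TruffleHog's JSON output, and that the committer appears as `"Name <address>"` under `SourceMetadata.Data.Git.email`. `PERSONAL_DOMAINS` is a hypothetical, non-exhaustive list, and `triage_slack` is an illustrative name.

```python
from email.utils import parseaddr
from typing import Dict, Iterable, List

# Hypothetical, non-exhaustive list of personal webmail domains.
PERSONAL_DOMAINS = {"gmail.com", "hotmail.com", "outlook.com", "yahoo.com"}


def triage_slack(findings: Iterable[dict]) -> List[Dict[str, object]]:
    """Pair each verified Slack finding's team with its committer address."""
    rows: List[Dict[str, object]] = []
    for f in findings:
        if f.get("DetectorName") != "Slack" or not f.get("Verified"):
            continue
        git = f.get("SourceMetadata", {}).get("Data", {}).get("Git", {})
        # parseaddr handles both "Name <addr>" and bare "addr" forms.
        _, address = parseaddr(git.get("email", ""))
        email_domain = address.rpartition("@")[2].lower()
        team = (f.get("ExtraData") or {}).get("team", "")  # assumed key name
        rows.append({
            "team": team,
            "committer": address,
            "personal_email": email_domain in PERSONAL_DOMAINS,
        })
    return rows
```

Rows where `personal_email` is true but `team` names a company are exactly the cases described above, where the committer's address alone would have pointed at the wrong owner.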

To confirm my suspicion that this was an organization’s token, I used trufflehog analyze to examine the secret in more detail:

Success! In the url field, I found a link to a Slack workspace. After navigating to this page, I saw a login screen for the organization’s Okta instance, confirming that this token belonged to an organization. This finding was accepted as a P1, and a $2,100 bounty was paid.

Note: Only run trufflehog analyze on secrets that you own, or when the relevant bug bounty or disclosure program specifically authorizes that type of scanning.

Summary

Together with the earlier Bitbucket study, this project offers a clear view of how secrets are distributed across major Git platforms. Several key points emerged:

- Higher density, similar payouts: While GitLab exposed far more valid credentials (nearly 3x as many as Bitbucket), the total bounty payout was almost identical ($9,000 on GitLab vs. $10,000 on Bitbucket), suggesting that higher volume does not necessarily translate into higher impact.
- The “zombie secrets” problem: Both platforms have valid credentials dating back over a decade (2009), proving that secrets don’t just expire on their own; they must be rotated.
- Platform locality is real: Secrets leak where they live. We found approximately 25 times more valid GitLab tokens on GitLab than on Bitbucket.
- The cost of disclosure: Responsibly disclosing secrets to over 2,800 organizations required significant automation and triage, but it led to thousands of live keys being revoked.

The bottom line: Even as they mature, enterprise platforms still carry high-impact exposures. For defenders, this reinforces that disciplined, large-scale scanning is not just a research exercise; it is a necessity.
