This blog post reflects the collaborative effort of Qodo’s research team to design, build, and validate the benchmark and this analysis.

Anthropic has launched Code Review for Claude Code, a multi-agent system that dispatches parallel agents to review pull requests, verify findings, and post inline comments on GitHub. This is a significant engineering effort, and we wanted to see how it performs on a rigorous, standardized benchmark.

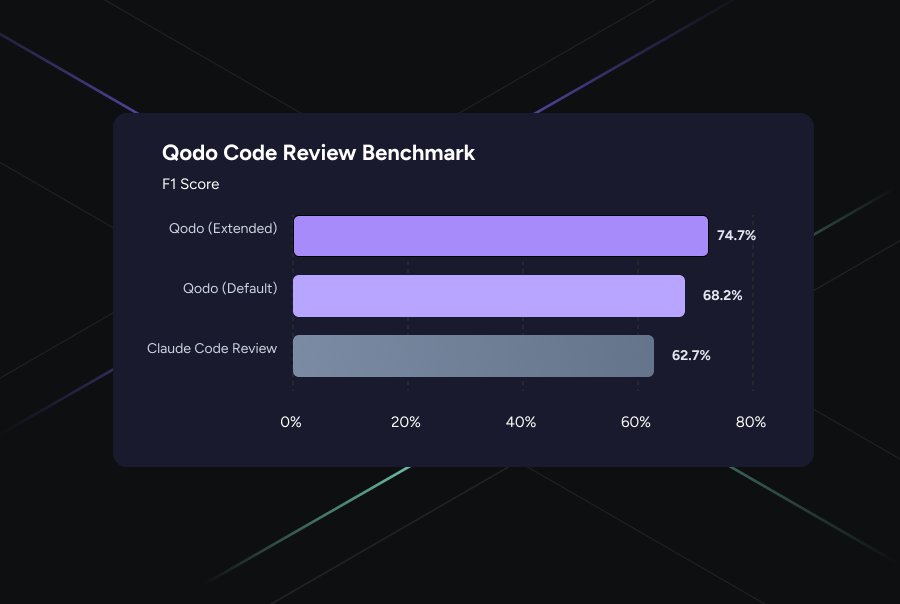

On the Qodo Code Review Benchmark (which is being adopted across the industry, most recently by NVIDIA in its Nemotron 3 Super announcement), Qodo outperforms Claude by 12 F1 points.

Here’s what we learned:

The Benchmark

Our research paper, “Beyond Surface-Level Bugs: Benchmarking AI Code Review at Scale,” introduced the Qodo Code Review Benchmark 1.0, a methodology that injects realistic defects into real, merged pull requests from production-grade open-source repositories.

The benchmark consists of 100 PRs with 580 issues across 8 repositories spanning TypeScript, Python, JavaScript, C, C#, Rust, and Swift. Unlike previous benchmarks, which work backward from fix commits and focus on isolated bugs, our approach evaluates both code correctness and code quality (best-practice and rule enforcement) within full PR review scenarios. The injection-based method is repository-agnostic and scalable: it can be applied to any codebase, open-source or private.

Our initial evaluation covered 8 leading AI code review tools. Qodo led the field in all configurations. With the release of Claude Code Review, we added it as a ninth tool under the same conditions. We have also applied the same benchmark to the latest generation of open-source models, such as NVIDIA Nemotron-3 Super, which are rapidly closing the gap with proprietary models.

This benchmark is designed to be a live assessment rather than a static snapshot. Each run reflects the most current iteration of the tools involved. Because we prioritize up-to-date accuracy over pinning static versions, these results represent the latest state of the field.

Claude Code Review: Setup

We configured Claude Code Review with its default settings, just as a new customer would. The PRs were opened on the same forked repositories, and agents.md rules were generated from the codebase and committed to the root of each repo. Claude Code Review ran automatically on PR submission, and we collected its inline comments for evaluation against our validated ground truth using the same LLM-as-a-judge system applied to all other tools.

No tuning, no special configuration. A fair, head-to-head comparison.
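The scoring step described above can be sketched roughly as follows. This is a minimal illustration, not the benchmark's actual harness: the `Issue` and `Comment` types, the `score_review` function, and the `keyword_judge` stand-in are all hypothetical names introduced here. In the real pipeline the matching decision is made by an LLM judge; the keyword check below only simulates that decision so the example runs on its own. It also assumes a comment counts as correct if it matches any ground-truth issue, and that recall counts distinct issues found.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Issue:
    """A validated ground-truth defect injected into a PR (hypothetical shape)."""
    file: str
    keyword: str


@dataclass(frozen=True)
class Comment:
    """An inline review comment collected from a tool (hypothetical shape)."""
    file: str
    body: str


Judge = Callable[[Comment, Issue], bool]


def keyword_judge(comment: Comment, issue: Issue) -> bool:
    # Stand-in for the LLM judge: same file and the issue keyword appears in the comment.
    return comment.file == issue.file and issue.keyword in comment.body.lower()


def score_review(
    comments: Sequence[Comment],
    ground_truth: Sequence[Issue],
    judge: Judge,
) -> tuple[float, float]:
    """Return (precision, recall) for one tool's comments against the ground truth."""
    correct_comments = 0
    found_issues: set[int] = set()
    for comment in comments:
        matches = {i for i, issue in enumerate(ground_truth) if judge(comment, issue)}
        if matches:
            correct_comments += 1
            found_issues |= matches
    precision = correct_comments / len(comments) if comments else 0.0
    recall = len(found_issues) / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Toy data, invented purely for illustration.
truth = [Issue("a.py", "null dereference"), Issue("a.py", "race condition")]
review = [Comment("a.py", "Possible null dereference here")]
precision, recall = score_review(review, truth, keyword_judge)
```

Because the judge is passed in as a function, the same scoring loop can be applied unchanged to every tool's comments, which is the property the head-to-head comparison relies on.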

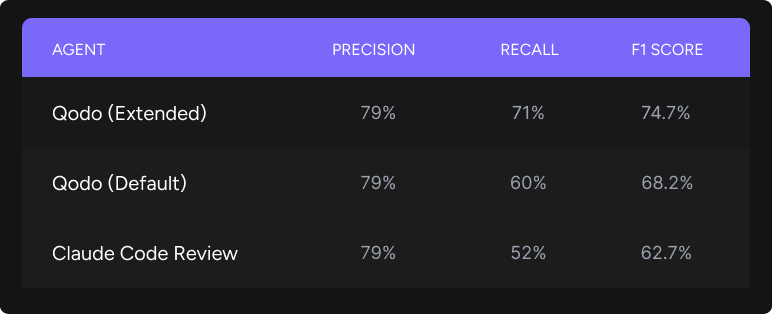

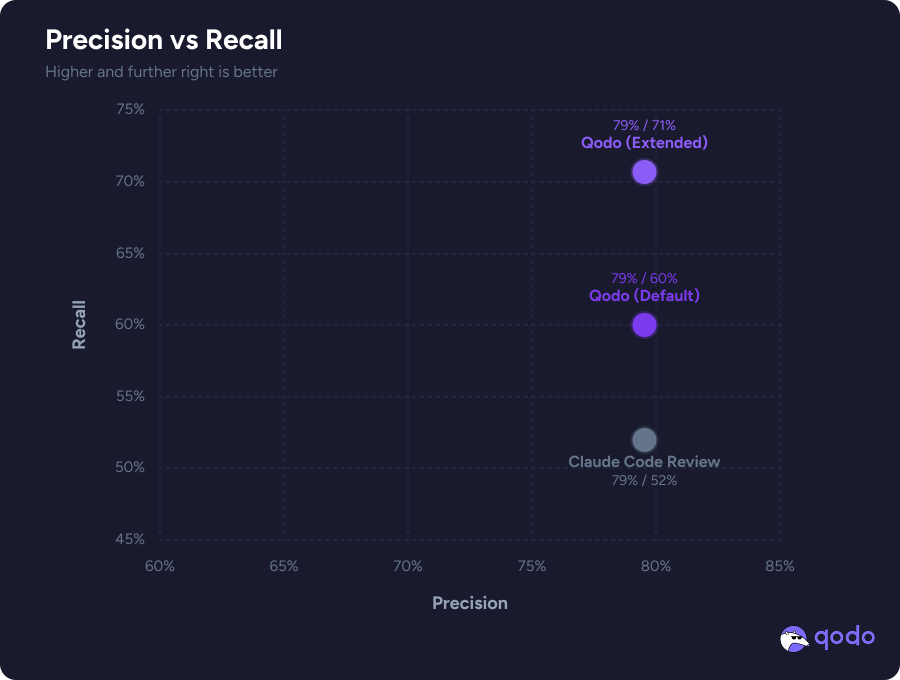

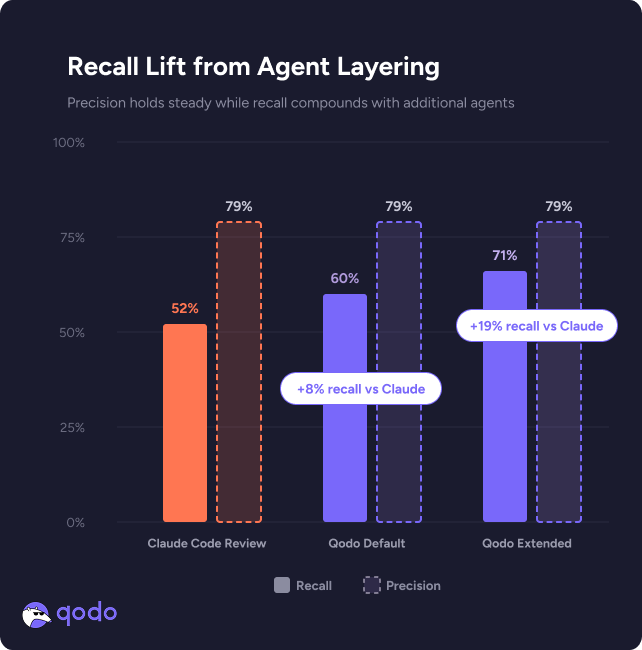

Our evaluation also includes the latest iteration of Qodo. Note that because our system evolves with every run, the absolute numbers for the production version of Qodo (79% precision, 60% recall) have increased since our original report. We are therefore introducing them as the new baseline for this comparison.

To probe the upper limits of AI-assisted review, we tested two Qodo configurations:

- Qodo (Default): The current production version of our system.

- Qodo (Extended): Adds an orchestrated multi-agent layer on top of the default agents.

While the Extended mode is currently a research result and not yet available in production, it represents a “quality-first” use case: imagine a highly sensitive PR where a team is willing to invest additional resources to ensure as thorough a review as possible.

The results are surprising: both Qodo configurations and Claude land at similar precision levels. This means the quality of individual findings stays high regardless of mode. The real differentiation lies in recall. In default mode, Qodo already surfaces issues with higher recall than Claude Code Review, and the gap widens significantly when our multi-agent approach orchestrates specialized agents to capture the remaining ground truth.
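To make the link between precision, recall, and the F1 scores quoted in this post concrete, here is a small worked calculation. It uses the standard F1 definition (the harmonic mean of precision and recall) and the production figures stated above (79% precision, 60% recall). The second call uses a made-up recall value, labeled as hypothetical, only to show how F1 moves when precision is held constant.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Production figures quoted in this post: 79% precision, 60% recall.
production_f1 = f1_score(0.79, 0.60)
print(f"{production_f1:.3f}")  # prints 0.682

# Hypothetical value, not a benchmark result: same precision, higher recall.
higher_recall_f1 = f1_score(0.79, 0.70)
print(higher_recall_f1 > production_f1)  # prints True
```

With precision roughly equal across tools, any difference in F1 comes almost entirely from recall, which is why the comparison above focuses on it.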

Orchestrated Multi-Agent Harness

The research configuration shown above represents our latest architectural iteration. Instead of running a single review pass, it dispatches multiple agents, each tuned for different problem categories (logical errors, best practice violations, edge cases, cross-file dependencies) and merges their outputs through a validation and deduplication step.

This results in a large recall improvement without any precision degradation. This matters because, as we discussed in our original paper, precision is a dimension that can always be tightened through post-processing and filtering. Recall, however, is an inherently harder challenge because it depends on a deep understanding of the codebase, including cross-file interactions, and on the system’s ability to enforce repository-specific standards. You can’t filter your way to finding problems you never knew existed.
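The dispatch-and-merge flow described in this section can be sketched as below. This is an illustrative outline under stated assumptions, not Qodo's implementation: `Finding`, `run_harness`, the toy agents, and the validation rule are hypothetical names invented for the example, and deduplicating by `(file, line)` is a deliberately simple stand-in for whatever the real validation and deduplication step does.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    category: str
    message: str


# An agent takes a PR diff and returns its findings (hypothetical interface).
Agent = Callable[[str], list[Finding]]


def run_harness(
    diff: str,
    agents: list[Agent],
    validate: Callable[[Finding], bool],
) -> list[Finding]:
    """Run all agents in parallel, then validate and deduplicate their findings."""
    with ThreadPoolExecutor(max_workers=len(agents) or 1) as pool:
        # pool.map preserves the order of `agents`, so merging is deterministic.
        batches = list(pool.map(lambda agent: agent(diff), agents))

    merged: dict[tuple[str, int], Finding] = {}
    for batch in batches:
        for finding in batch:
            if not validate(finding):
                continue  # drop findings that fail validation
            # Assumption: two findings on the same file and line are duplicates;
            # the first agent to report a location wins.
            merged.setdefault((finding.file, finding.line), finding)
    return sorted(merged.values(), key=lambda f: (f.file, f.line))


# Toy agents, each covering one issue category from the list above.
def logic_agent(diff: str) -> list[Finding]:
    return [Finding("a.py", 10, "logic", "off-by-one in loop bound")]


def edge_case_agent(diff: str) -> list[Finding]:
    return [
        Finding("a.py", 10, "edge-case", "loop skips last element"),
        Finding("b.py", 3, "edge-case", "empty input not handled"),
    ]


findings = run_harness(
    "<diff text>",
    [logic_agent, edge_case_agent],
    validate=lambda f: bool(f.message.strip()),
)
```

In this sketch, adding an agent can only add validated findings, so coverage grows with the number of agents, while the validation and deduplication pass is what keeps extra agents from flooding the PR with duplicate or low-quality comments.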

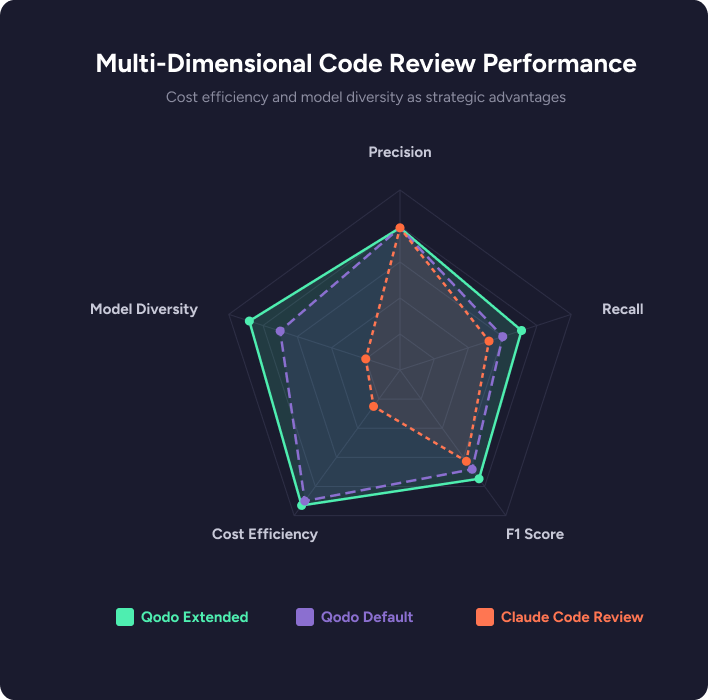

Finally, true multi-agent orchestration requires model diversity. While Anthropic’s system is confined to the Claude ecosystem, Qodo leverages a blend of the industry’s best SOTA models, dynamically drawing on OpenAI, Anthropic, and Google. By avoiding vendor lock-in, our harness combines the distinct analytical strengths of different model families to achieve a depth of review that a single-provider system cannot reach.

Cost

Claude Code Review costs $15-$25 per review, depending on token usage. Anthropic positions this as a premium, depth-first product, and the engineering behind it reflects that ambition.

That said, the cost profile is worth noting. At an order of magnitude less than Anthropic’s pricing, Qodo keeps the review process painless. You catch more issues and get more thorough analysis without exhausting your budget.

Higher recall at a lower per-review cost is a favorable tradeoff for most engineering organizations.

What We Learned

Claude Code Review is a capable system. Its precision is on par with the best tools we evaluated, and its multi-agent architecture clearly represents a step up from simple single-pass reviewers. Anthropic’s internal data on comment rates and approval rates aligns with what we observe: when it flags something, it is usually correct (i.e., high precision).

The difference is in coverage. In a benchmark designed to test the full spectrum of code review, not just obvious bugs but also subtle best-practice and rule violations, cross-file issues, and architectural concerns, Qodo’s deep codebase understanding and multi-agent harness translate into catching a significantly broader portion of the real issues, at a fraction of the per-review cost.

We will continue to expand the benchmark as new tools and configurations emerge. The dataset and all evaluated reviews are publicly available for independent verification.

Qodo Code Review Benchmark 1.0 is publicly available on our Benchmark GitHub Organization. Read the full research paper: “Beyond Surface-Level Bugs: Benchmarking AI Code Review at Scale.”

<a href