As I did with the ICML 2025 outstanding papers, I ran my automated paper review system to create a summary of all the award-winning and runner-up papers. This time I also prepared comics that explain each paper in one image.

Here are the results.

Authors: Liwei Jiang, Yuanjun Chai, Margaret Li, Mickel Liu, Raymond Fok, Nouha Dziri, Yulia Tsvetkov, Maarten Sap, Yejin Choi

Paper: https://arxiv.org/abs/2510.22954, NeurIPS submission

Code: https://github.com/liweijiang/artificial-hivemind

Dataset: HF collection

What was done? The authors introduce Infinity-Chat, a dataset of 26K real-world open-ended questions, to systematically evaluate output diversity in 70+ state-of-the-art LLMs. They identify a widespread “artificial hivemind” phenomenon in which models exhibit extreme mode collapse: a single model repeatedly generates the same outputs (intra-model), and different model families converge on surprisingly similar responses (inter-model).
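To make the intra-model versus inter-model distinction concrete, here is a minimal sketch that scores homogeneity as the mean pairwise similarity between responses. It uses token-level Jaccard overlap as a simple stand-in (an assumption for illustration, not necessarily the metric used in the paper), and the response strings are made up.

```python
from itertools import combinations, product


def jaccard(a: str, b: str) -> float:
    """Token-level Jaccard similarity (0 = disjoint token sets, 1 = identical)."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def intra_model_similarity(responses: list[str]) -> float:
    """Mean similarity over all pairs of samples drawn from one model."""
    pairs = list(combinations(responses, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


def inter_model_similarity(model_a: list[str], model_b: list[str]) -> float:
    """Mean similarity over all cross pairs between two models' samples."""
    pairs = list(product(model_a, model_b))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical samples for one open-ended prompt (illustrative only).
model_a = ["time is a river", "time is a river that flows", "time is a flowing river"]
model_b = ["time is a river", "time is like a river"]

print(f"intra-model A:      {intra_model_similarity(model_a):.2f}")
print(f"inter-model A vs B: {inter_model_similarity(model_a, model_b):.2f}")
```

Scores near 1 on both measures would correspond to the hivemind picture: one model repeating itself, and different models repeating each other. A real evaluation would likely swap Jaccard for an embedding-based similarity.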

Why it matters? This undermines the common assumption that raising the temperature or combining several models guarantees diversity. The study shows that modern RLHF and instruction tuning have homogenized the “creative” latent space of models to such an extent that different models (for example, DeepSeek and GPT-4) behave as nearly identical clones on open-ended tasks. Furthermore, it shows that existing reward models are poorly calibrated to diverse human preferences (pluralism), failing to correctly score valid but idiosyncratic responses.

Link to full review

Authors: Zihan Qiu, Zekun Wang, Bo Zheng, Zeyu Huang, Kaiyue Wen, Songlin Yang, Rui Men, Le Yu, Fei Huang, Suozhi Huang, Dayiheng Liu, Jingren Zhou, Junyang Lin (Qwen Team)

Paper: https://arxiv.org/abs/2505.06708, NeurIPS submission

Code: https://github.com/qiuzh20/gated_attention

Models: https://huggingface.co/collections/Qwen/qwen3-next

What was done? The authors introduce Gated Attention, a mechanism that applies a learnable, input-dependent sigmoid gate immediately after the scaled dot-product attention (SDPA) output. By modulating the attention output Y with a gate σ(XW_θ), the method introduces element-wise sparsity and non-linearity before the final output projection.
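The mechanism can be sketched in a few lines. The following is a minimal single-head illustration in plain Python, not the authors' implementation: it omits the causal mask, multiple heads, and biases, and the names `gated_attention`, `Wg` (standing in for W_θ), and `rand_matrix` are assumptions made for this example.

```python
import math
import random


def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gated_attention(X, Wq, Wk, Wv, Wg, Wo):
    """Single-head attention with an element-wise sigmoid gate on the SDPA output.

    X: (seq_len, d_model); Wq, Wk, Wv, Wg: (d_model, d_head); Wo: (d_head, d_model).
    """
    Q, K, V = matmul(X, Wq), matmul(X, Wk), matmul(X, Wv)
    d_head = len(Q[0])
    # Scaled dot-product attention: Y = softmax(Q K^T / sqrt(d_head)) V
    scores = matmul(Q, [list(col) for col in zip(*K)])
    weights = [softmax([s / math.sqrt(d_head) for s in row]) for row in scores]
    Y = matmul(weights, V)
    # Input-dependent gate sigma(X W_theta), applied element-wise to Y
    G = [[sigmoid(g) for g in row] for row in matmul(X, Wg)]
    Y_gated = [[y * g for y, g in zip(y_row, g_row)] for y_row, g_row in zip(Y, G)]
    # Final output projection
    return matmul(Y_gated, Wo)


def rand_matrix(rows, cols, rng):
    """Small random matrix used only to exercise the sketch."""
    return [[rng.gauss(0.0, 0.5) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
seq_len, d_model, d_head = 4, 8, 8
X = rand_matrix(seq_len, d_model, rng)
Wq, Wk, Wv, Wg = (rand_matrix(d_model, d_head, rng) for _ in range(4))
Wo = rand_matrix(d_head, d_model, rng)
out = gated_attention(X, Wq, Wk, Wv, Wg, Wo)
print(len(out), len(out[0]))  # 4 8
```

Because the gate is computed from the current token's own input X, each position can scale individual channels of its attention output toward zero, which is where the element-wise sparsity comes from.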

Why it matters? This simple architectural modification improves training stability at scale (eliminating loss spikes) and consistently improves performance on 15B MoE and 1.7B dense models. Importantly, it mechanistically eliminates the “attention sink” phenomenon and “massive activations” without the need for heuristic fixes such as “sink tokens”, thereby significantly improving long-context extrapolation.

Link to full review

Authors: Kevin Wang, Ishaan Javali, Michał Bortkiewicz, Tomasz Trzciński, Benjamin Eysenbach

Paper: https://openreview.net/forum?id=s0JVsx3bx1

Project Page: https://wang-kevin3290.github.io/scaling-crl/

What was done? The authors scaled reinforcement learning (RL) policies from the standard 2-5 layers to over 1,000 layers using self-supervised learning (specifically Contrastive RL) combined with modern architectural choices such as residual connections, LayerNorm, and Swish activations.

Why it matters? This challenges the prevailing dogma that RL does not benefit from depth. While standard algorithms like SAC saturate or collapse with deep networks, this work shows that Contrastive RL allows continued performance scaling (20x-50x gains), enabling agents to solve long-horizon humanoid mazes and develop emergent locomotion skills without explicit reward engineering.

Link to full review

Authors: Tony Bonnaire, Raphaël Urfin, Giulio Biroli, Marc Mézard

Paper: https://arxiv.org/abs/2505.17638, NeurIPS submission

Code: https://github.com/tbonnair/Why-Diffusion-Models-Don-t-Memorize

What was done? The authors provide a theoretical and empirical analysis of the training dynamics of score-based diffusion models. Recognizing that models may eventually overfit, they identify two distinct timescales: τ_gen, when the model learns to generate valid samples, and τ_mem, when it starts memorizing specific training examples. This work received a Best Paper Award at NeurIPS 2025.

Why it matters? This work resolves the paradox of why over-parameterized diffusion models generalize despite having the capacity to completely memorize the training data. By proving that τ_mem scales linearly with the dataset size n whereas τ_gen remains constant, the paper establishes that early stopping is not just a heuristic but a structural consequence of implicit dynamical regularization. This explains why larger datasets widen the safe window for training, allowing models to generalize robustly at larger scale.

Link to full review

Authors: Yang Yue, Zhiqi Chen, Rui Lu, Andrew Zhao, Zhaokai Wang, Yang Yue, Shiji Song, Gao Huang

Paper: https://arxiv.org/abs/2504.13837, NeurIPS submission

Code: https://limit-of-rlvr.github.io

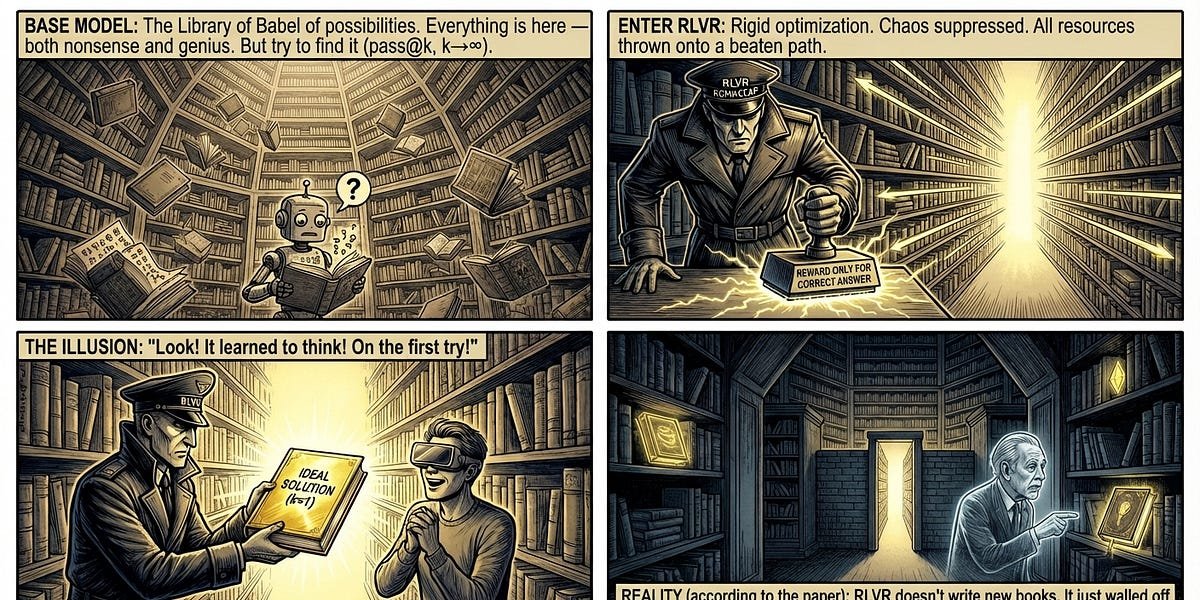

What was done? In this NeurIPS 2025 Best Paper runner-up, the authors systematically investigate the reasoning limits of large language models (LLMs) trained via reinforcement learning with verifiable rewards (RLVR). Using unbiased pass@k metrics across mathematics, coding, and visual reasoning tasks, they compare base models against their RL-tuned counterparts to determine whether RLVR generates novel reasoning patterns or merely amplifies existing ones.
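For readers unfamiliar with pass@k, here is a short sketch of the standard unbiased estimator from the code-generation evaluation literature: draw n samples per problem, count the c correct ones, and compute the chance that a random size-k subset contains at least one correct sample. Treating this as the exact estimator used in the paper is an assumption, and the sample counts below are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples, c of which are correct.

    Equals 1 - C(n - c, k) / C(n, k): the probability that a random
    size-k subset of the n samples contains at least one correct sample.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical counts for one hard problem with n = 256 samples:
# the model solves it only twice, so it looks unsolved at k = 1
# but counts as solvable once k is large.
for k in (1, 16, 256):
    print(k, round(pass_at_k(n=256, c=2, k=k), 3))
```

This is why the comparison depends so strongly on k: a model that rarely but sometimes solves a problem contributes almost nothing at k = 1 and counts fully at large k.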

Why it matters? The findings challenge the prevailing narrative that RLVR allows models to autonomously discover “superhuman” strategies, similar to AlphaGo. The study shows that while RLVR significantly improves sampling efficiency (correct answers appear more often), it does not extend the fundamental reasoning capability range of the model. In fact, for large k, base models often solve more unique problems than their RL-trained versions, suggesting that current RL methods are bounded by the priors of the pre-trained models.

Link to full review

Authors: Zachary Chase, Steve Hanneke, Shay Moran, Jonathan Shafer

Paper: https://openreview.net/forum?id=EoebmBe9fG

What was done? The authors resolved a 30-year-old open problem in learning theory by establishing tight mistake bounds for transductive online learning. In this NeurIPS 2025 Best Paper runner-up, they prove that for a hypothesis class with Littlestone dimension D, the optimal mistake bound is Θ(√D).

Why it matters? This result shows exactly how much “looking ahead” helps. It proves that access to the unlabeled sequence of future test points allows a quadratic reduction in mistakes compared to the standard online setting (where the bound is D). This closes a huge exponential gap between the previous best known lower bound of Ω(log D) and the upper bound of O(D).
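To get a feel for the size of that gap, the sketch below tabulates the three growth rates for a few values of D. It ignores the constants hidden by the asymptotic notation and uses base-2 logarithms by convention, so the numbers only indicate orders of magnitude; the helper name `compare_bounds` is made up for this example.

```python
import math


def compare_bounds(D: int) -> dict[str, float]:
    """Growth rates only; constants hidden by Omega/Theta/O are ignored."""
    return {
        "log": math.log2(D),   # previous best lower bound, Omega(log D)
        "sqrt": math.sqrt(D),  # optimal transductive bound, Theta(sqrt(D))
        "linear": float(D),    # standard online setting, D
    }


for D in (16, 1024, 1_000_000):
    b = compare_bounds(D)
    print(f"D={D:>9,}  log2(D)={b['log']:6.1f}  sqrt(D)={b['sqrt']:8.1f}  D={b['linear']:11.1f}")
```

The √D column sits far above the logarithmic lower bound and far below the linear one, which is why neither of the earlier bounds could have been tight.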

Link to full review

Authors: Yizhou Liu, Ziming Liu, and Jeff Gore

Paper: https://arxiv.org/abs/2505.10465, NeurIPS submission

Code: https://github.com/liuyz0/SuperpositionScaling

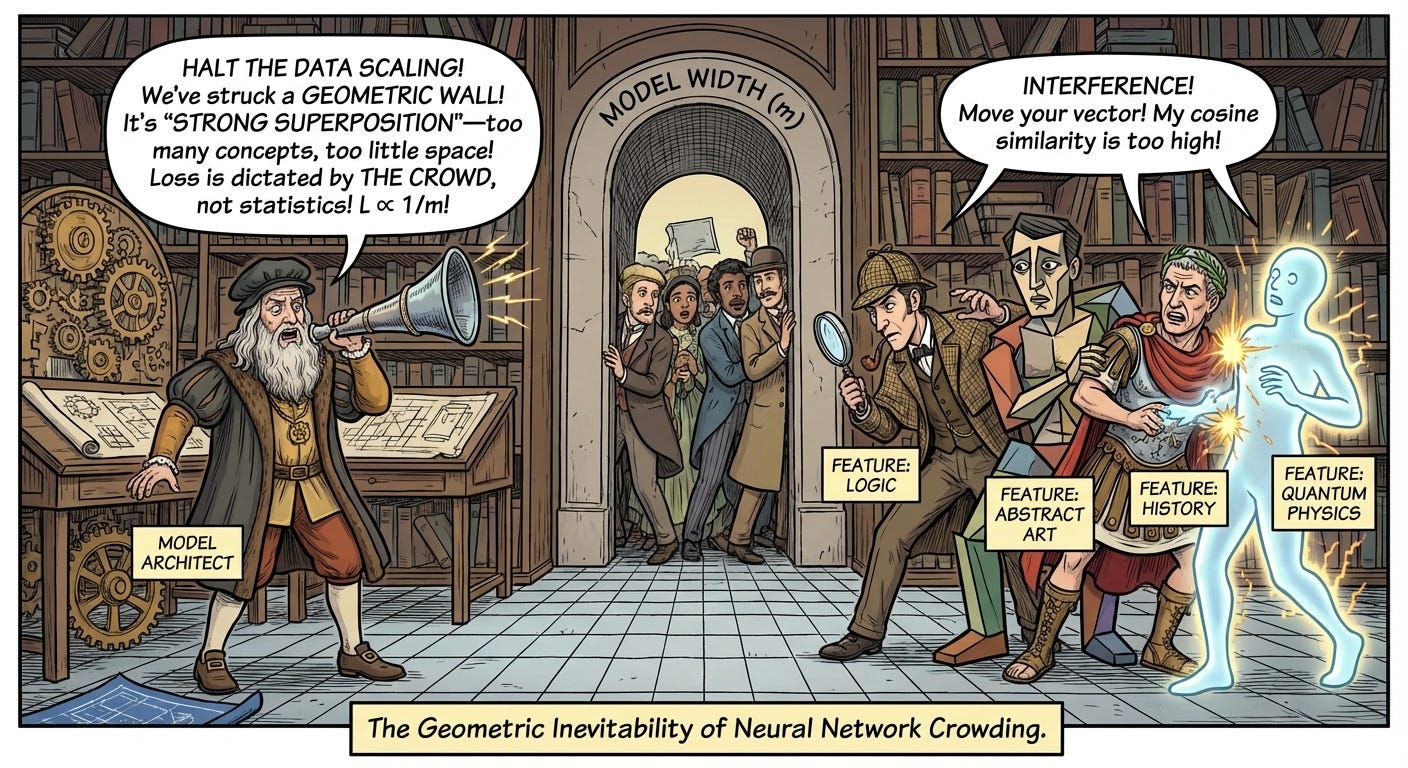

What was done? The authors propose a mechanistic explanation for neural scaling laws by linking them to representation superposition. By adopting a sparse autoencoder framework and validating on open-source LLMs (OPT, Pythia, Qwen), they demonstrate that when models operate in the “strong superposition” regime, representing significantly more features than they have dimensions, the loss scales inversely with the model width (L ∝ 1/m). This scaling is driven by the geometric interference between feature vectors rather than by the statistical properties of the data tail.
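The geometric intuition behind the 1/m rate can be checked numerically. The sketch below packs more random unit vectors than dimensions into an m-dimensional space and measures their average squared overlap, which for random directions is 1/m in expectation. Using random vectors is an assumption made for illustration (the paper studies trained representations), so this shows only the intuition, not the paper's experiment.

```python
import math
import random


def random_unit_vector(m: int, rng: random.Random) -> list[float]:
    """Sample a direction uniformly on the unit sphere in R^m."""
    v = [rng.gauss(0.0, 1.0) for _ in range(m)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean_squared_overlap(n_features: int, m: int, seed: int = 0) -> float:
    """Average squared dot product between distinct random feature vectors in R^m."""
    rng = random.Random(seed)
    feats = [random_unit_vector(m, rng) for _ in range(n_features)]
    total, count = 0.0, 0
    for i in range(n_features):
        for j in range(i + 1, n_features):
            dot = sum(a * b for a, b in zip(feats[i], feats[j]))
            total += dot * dot
            count += 1
    return total / count


# Four times more features than dimensions, i.e. a superposed regime.
for m in (8, 16, 32, 64):
    overlap = mean_squared_overlap(n_features=4 * m, m=m)
    print(f"m={m:3d}  mean squared overlap={overlap:.4f}  overlap*m={overlap * m:.2f}")
```

The last column should stay close to 1 as m grows, meaning the interference per pair of features shrinks like 1/m, the same rate as the loss scaling described above.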

Why it matters? This work, a NeurIPS 2025 Best Paper runner-up, provides a first-principles derivation of scaling laws that is robust to data distributions. In contrast to previous theories relying on manifold approximations, this research suggests that the “power law” behavior of LLMs is a geometric necessity of compressing sparse concepts into dense spaces. This implies that overcoming these scaling constraints requires architectural intervention to manage feature interference, as the geometric constraint cannot be bypassed by simply adding more data.

Link to full review

I hope you find it fun and useful 😁
