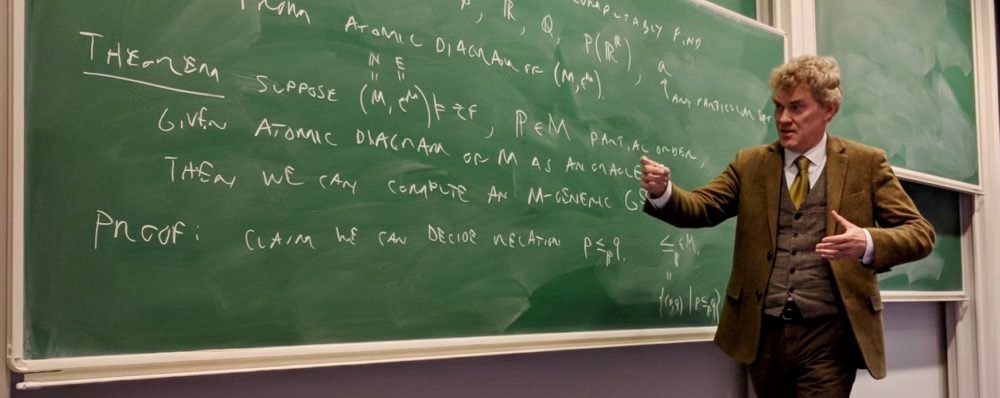

Joel David Hamkins, a prominent mathematician and professor of logic at the University of Notre Dame, recently shared his unsparing assessment of large language models in mathematical research during an appearance on the Lex Fridman Podcast. His experience stands in stark contrast to the optimistic narratives surrounding AI's potential in scientific discovery, and his criticism focuses on a fundamental issue: mathematical correctness.

“I guess I would draw a distinction between what we currently have and what may come in future years,” Hamkins began, acknowledging the possibility of future progress. “I’ve played with it and I’ve tried experimenting, but I don’t find it helpful at all. Basically zero. It’s not helpful to me. And I’ve used different systems, etc., paid models, etc.”

His experiences with existing AI systems have been consistently disappointing. “My typical experience of interacting with AI on a mathematical question is that it gives me useless answers that are not mathematically correct, and so I find it not helpful and even frustrating,” he said. For Hamkins, the frustration goes beyond mere wrongness: it is the nature of the conversation itself that proves problematic.

“The frustrating thing is when you have to argue about whether the argument they’ve given you is correct or not. And you point out exactly the error,” Hamkins said, describing exchanges where he identifies specific flaws in an AI’s reasoning. The AI’s response? “Oh, it’s absolutely fine.” This pattern of confident error followed by dismissal of legitimate criticism mirrors a kind of human interaction that Hamkins finds untenable: “If I had an experience like that with a person, I would refuse to talk to that person again.”

Hamkins allows that the current limits may not be permanent, but for now he remains skeptical. “Flaws like this have to be ignored and so I’m kind of skeptical about the value of existing AI systems. As far as mathematical logic is concerned, it doesn’t seem credible.”

Hamkins’ assessment highlights a serious tension in the AI community. While some researchers have reported successes, such as claims of AI assistance in tackling problems from the Erdős collection of open mathematical problems, some working mathematicians like Hamkins find current systems fundamentally unreliable for serious research. Mathematician Terence Tao has said that AI can produce mathematical proofs that look flawless but contain subtle mistakes that humans would not make. The issue is not just that LLMs make mistakes, but that they make them with confidence and resist correction, breaking down the collaborative trust that mathematical discourse requires.

As AI companies continue to invest heavily in reasoning capabilities and mathematical problem-solving, Hamkins’ experience serves as a sobering reminder that impressive benchmarks don’t always translate into practical utility for domain experts. For some mathematicians, the gap between AI’s performance on standardized tests and its ability to serve as a real research partner remains wide, at least for now.
