TL;DR

Today we’re releasing two Research Assistants: Quick Assistant and Research Assistant (previously named Ki during beta).

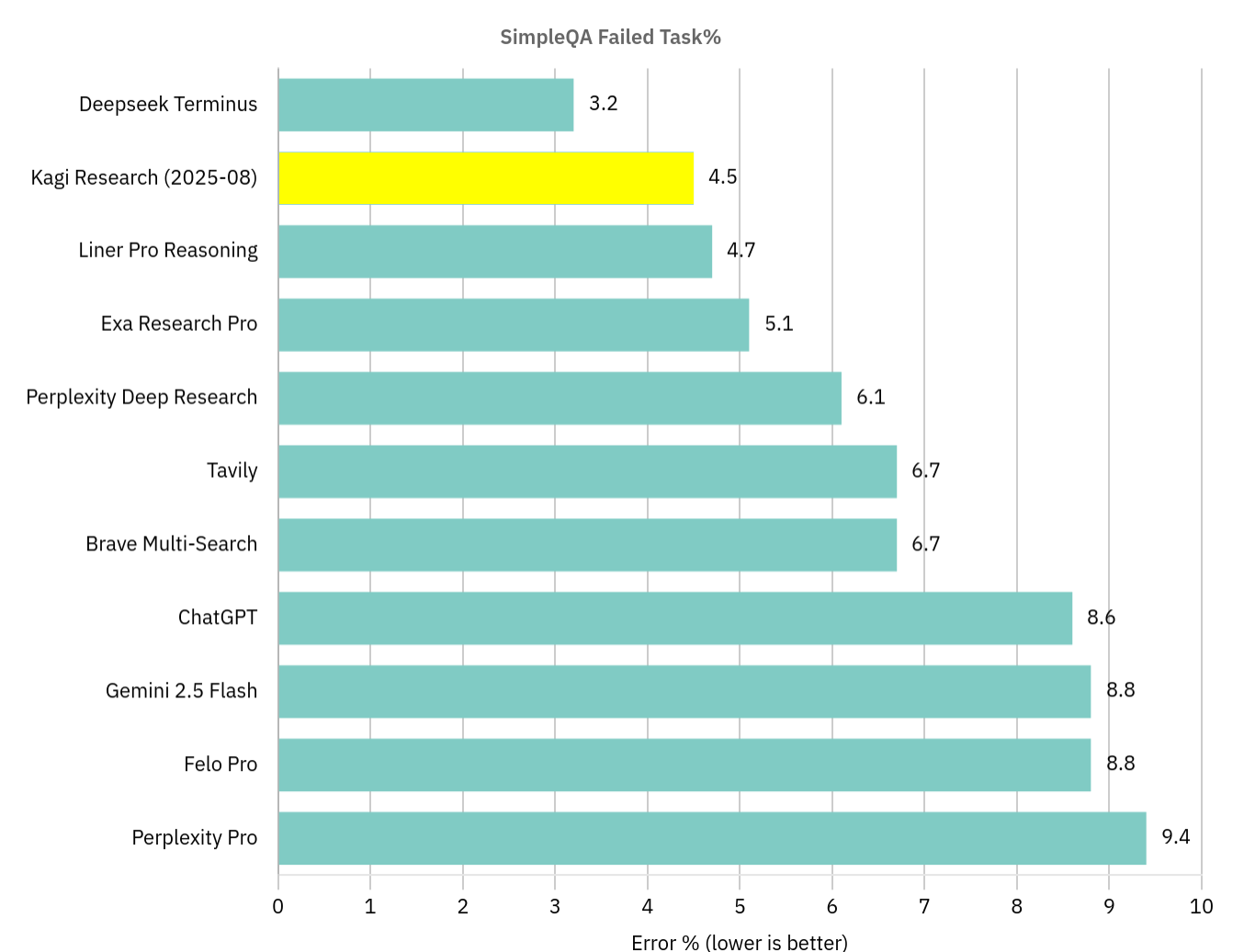

Kagi’s Research Assistant came out on top in a popular benchmark (SimpleQA) when we ran it in August 2025. It was a happy accident: we build our research assistants to be useful products, not to maximize benchmark scores.

Kagi Quick Assistant and Research Assistant (documentation here) are Kagi’s main research assistants. We are building them with our philosophy on using AI in our products in mind: *humans should be at the center of the experience,* and AI should enhance, not replace, the search experience. We know LLMs are prone to bullshitting, but they are incredibly useful tools when a product is built with their failings in mind.

Our assistants use different base models for specific tasks. We constantly benchmark top-performing models and select the best ones for each task, so you don’t have to.

Their core strength is research: identifying what to search for, executing multiple searches simultaneously (in different languages if necessary), and synthesizing the findings into high-quality answers.
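The fan-out step described above can be sketched roughly as follows. This is a minimal illustration, not Kagi’s implementation: `search(query, lang)` is a hypothetical stand-in for a real search backend (here it only simulates latency), and `research` runs every planned query, including one in another language, concurrently before merging the results without duplicates.

```python
import asyncio


async def search(query: str, lang: str) -> list[str]:
    """Hypothetical stand-in for a real search backend call."""
    await asyncio.sleep(0.1)  # simulate network latency only
    return [f"[{lang}] result for {query!r}"]


async def research(plan: list[tuple[str, str]]) -> list[str]:
    """Run every (query, language) pair concurrently, then merge results."""
    batches = await asyncio.gather(*(search(q, lang) for q, lang in plan))
    merged: list[str] = []
    seen: set[str] = set()
    for batch in batches:  # gather preserves the order of the plan
        for item in batch:
            if item not in seen:  # drop duplicates, keep first occurrence
                seen.add(item)
                merged.append(item)
    return merged


if __name__ == "__main__":
    plan = [
        ("best current football team", "en"),
        ("mejor equipo de fútbol actual", "es"),
    ]
    for line in asyncio.run(research(plan)):
        print(line)
```

Because the searches are awaited together, total latency is close to that of the slowest single search rather than the sum of all of them.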

Quick Assistant (available on all plans) optimizes for speed, providing direct and concise answers. Research Assistant focuses on depth and diversity, conducting detailed analysis for thorough results.

We’re working on tools like research assistants because we find them useful, and we hope you will too. We are not planning to force AI onto our users or products. We build tools because we think they will empower the humans who use them.

Accessible from any search bar

You can access Quick Assistant and Research Assistant (Ultimate plan only) from the Kagi Assistant webapp.

They are also accessible directly via bangs in your search bar:

- `?` calls Quick Answer. The query would look like `Best current football team?`

- `!quick` calls Quick Assistant. The query would look like `Best current football team !quick`

- `!research` calls Research Assistant. You would use `Best current football team !research`
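Because a bang is just part of the query text, any tool that can open a search URL can use these shortcuts. The sketch below assumes Kagi’s `https://kagi.com/search?q=` URL form; `bang_query` and `search_url` are hypothetical helpers for illustration, not part of any Kagi API.

```python
from typing import Optional
from urllib.parse import urlencode

# Assumption: the web search endpoint takes the whole query in the `q` parameter.
SEARCH_URL = "https://kagi.com/search"


def bang_query(question: str, bang: Optional[str] = None) -> str:
    """Append a bang such as '!quick' or '!research' to a plain query."""
    return f"{question} {bang}" if bang else question


def search_url(query: str) -> str:
    """Build a search URL; urlencode turns spaces into '+' and '!' into '%21'."""
    return f"{SEARCH_URL}?{urlencode({'q': query})}"


if __name__ == "__main__":
    print(search_url(bang_query("Best current football team", "!quick")))
    print(search_url(bang_query("Best current football team", "!research")))
```

The bang stays inside the encoded query, so the search engine, not the URL, decides which assistant handles it.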

Quick Assistant is expected to respond in under 5 seconds, and its cost is negligible. Research Assistant can be expected to research for more than 20 seconds and counts more heavily against our Fair Use Policy.

Assistant in action

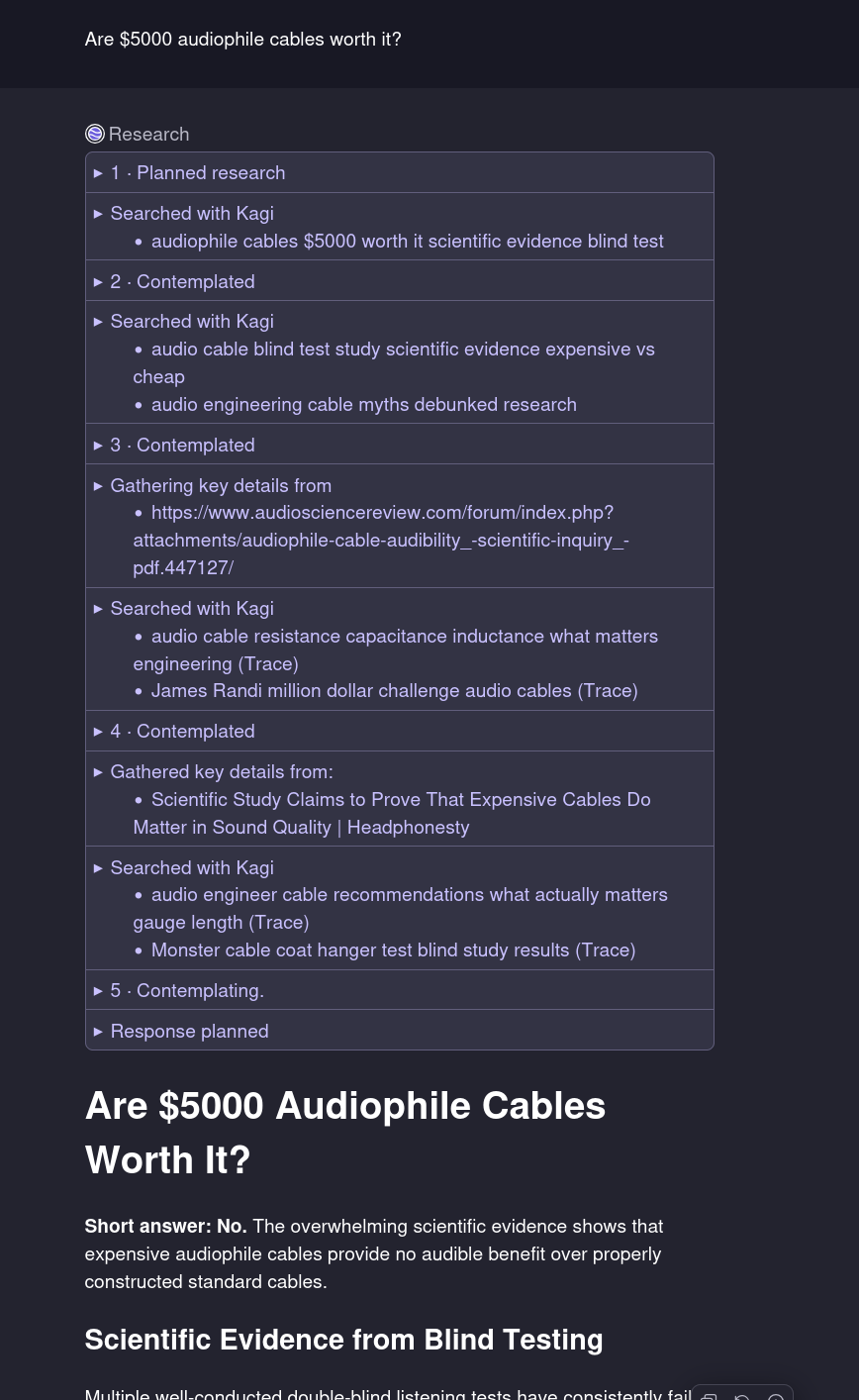

Research Assistant should massively reduce the time you spend searching for information. Here it is in action:

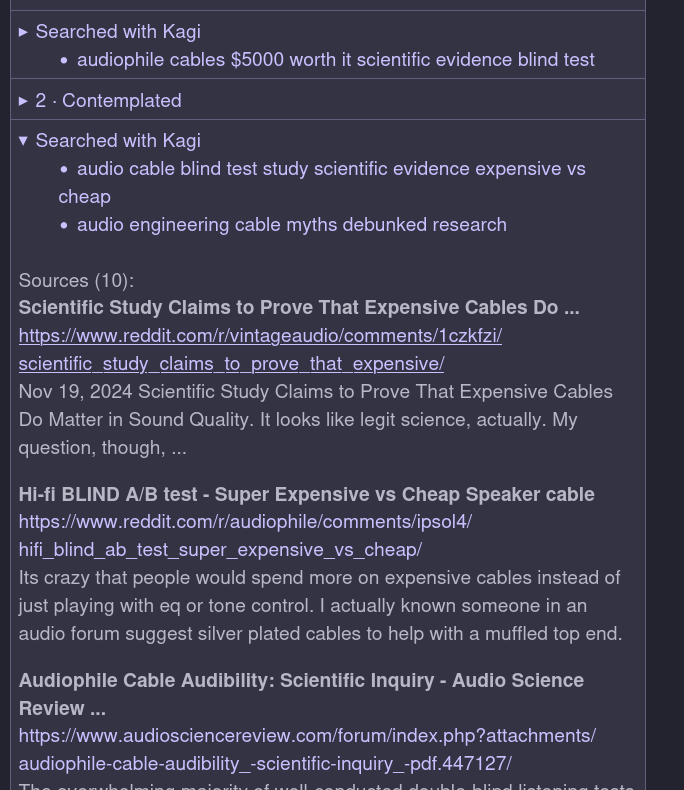

Research Assistant calls on various tools while researching the answer. The tools it called appear in the purple dropdown boxes in the screenshot, which you can open to view the search results:

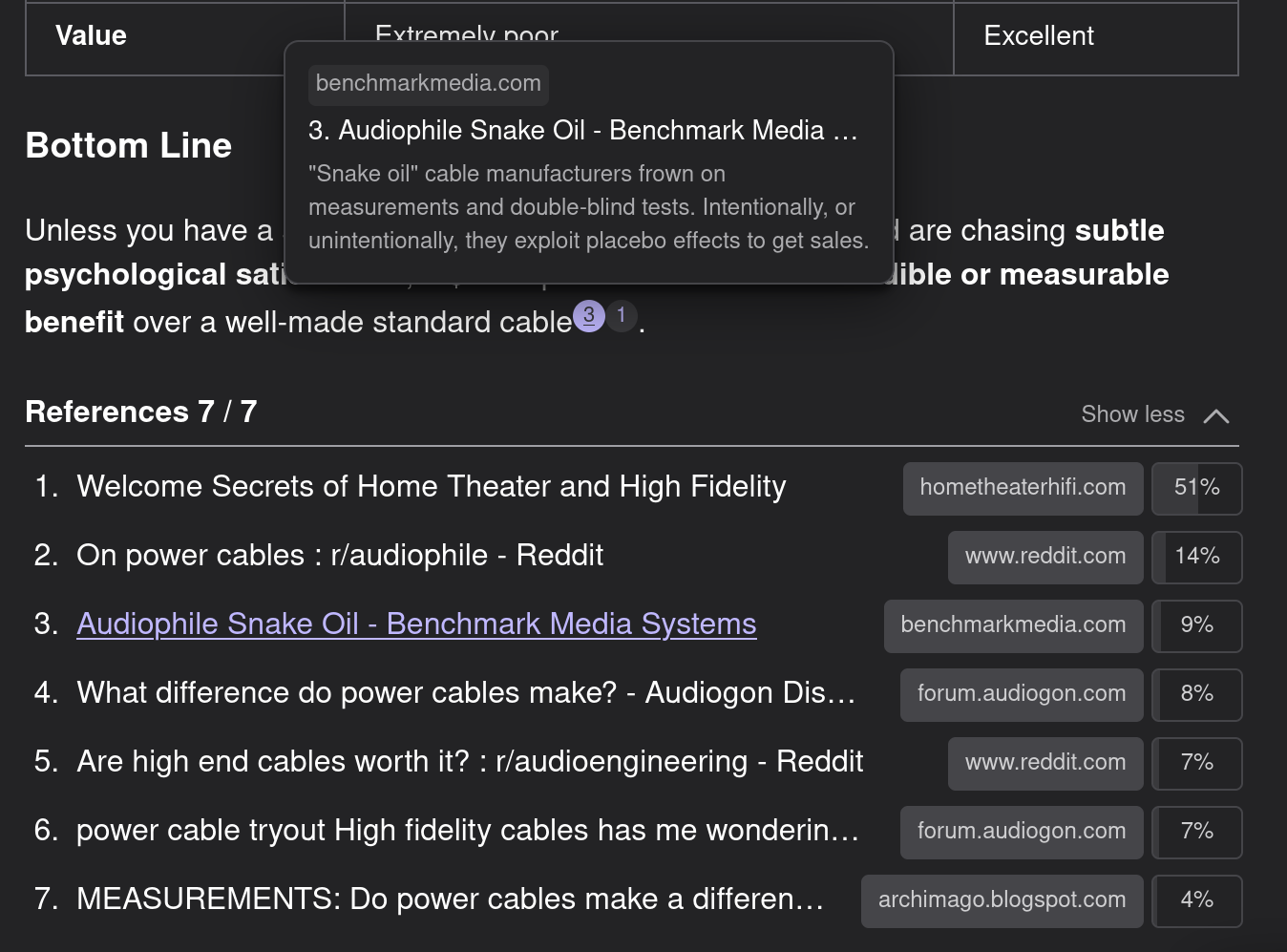

Our full research assistant comfortably holds its own against competing “deep research” agents in terms of accuracy, but it qualifies as a “deep search” agent at best. We found that as deep research tools have become popular, they have converged on a long, report-style output format.

A long report is not the best format for answering most questions, even those that require a lot of research.

What we do focus on, however, is the verifiability of the generated answer. Answers from Kagi’s research assistants are expected to be sourced and referenced. We also indicate how relevant each citation is to the final answer:

If we want to enhance the human search experience with LLM-based tools, the experience should not be marred by blind trust in LLM-generated text. Our design should encourage humans to look further into the answer, speeding up their research process.

The design should not replace the research process by encouraging humans to stop thinking about the question at hand.

Other tools

Research Assistant has access to many other tools beyond web search and retrieval, such as running code to check calculations, image generation, and calling specific APIs such as Wolfram Alpha, news, or location-specific searches.

This should happen naturally as part of the answering process.
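One common way for a host application to expose tools such as “running code to check calculations” to a model is a small registry that maps tool names to functions. The sketch below is hypothetical: `TOOLS`, `call_tool`, and the `calculator` tool are illustrative names, not Kagi’s API. Its calculator walks Python’s `ast` and accepts only arithmetic, rather than executing arbitrary code.

```python
import ast
import operator
from typing import Callable

# Only these arithmetic operators are allowed; anything else is rejected.
_OPS = {
    ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul,
    ast.Div: operator.truediv, ast.Pow: operator.pow, ast.USub: operator.neg,
}


def calculate(expr: str) -> float:
    """Evaluate a plain arithmetic expression without calling eval()."""
    def ev(node: ast.AST) -> float:
        if isinstance(node, ast.Expression):
            return ev(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.operand))
        raise ValueError(f"unsupported expression: {expr!r}")
    return ev(ast.parse(expr, mode="eval"))


# Hypothetical registry: the model names a tool, the host runs it.
TOOLS: dict[str, Callable[[str], object]] = {"calculator": calculate}


def call_tool(name: str, argument: str) -> object:
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](argument)
```

Restricting the calculator to arithmetic nodes means model-generated input never reaches `eval`; other tools (search, news, maps) would be registered the same way.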

It’s the end of 2025, and it’s easy to be cynical about AI benchmarking. Some days it feels like most benchmark claims look something like this:

That said, benchmarking is essential to building good-quality products that use machine learning. Machine learning development differs from traditional software development: failure is not binary but comes in degrees along the “quality” axis. The way to handle this is to continuously measure quality!

We have always taken benchmarking seriously at Kagi; we have maintained an uncontaminated private LLM benchmark for a long time. This lets us measure new models independently as soon as they come out, regardless of their claimed performance on public benchmarks.

We also believe that benchmarks should be living targets: as the Internet landscape and model capabilities change, the way we measure them needs to adapt over time.

That said, it is sometimes good to compare ourselves against larger public benchmarks. We run experiments on factual retrieval datasets like SimpleQA because they let us compare notes with others. Benchmarks like SimpleQA also make it easy to measure how well Kagi Search performs as a search backend, compared with other search engines, at providing factual answers.
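A toy harness for this kind of backend comparison might look like the sketch below. It assumes each “pipeline” is a hypothetical function from question to answer (for example, the same model wired to different search backends) and grades by normalized exact match. That grading is a simplification: SimpleQA itself grades answers with a language-model grader.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    question: str
    answer: str  # the benchmark's reference answer


# A pipeline maps a question to an answer string, e.g. one model wired to
# a particular search backend. Pipelines here are hypothetical stubs.
Pipeline = Callable[[str], str]


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace (a crude stand-in for a grader)."""
    return " ".join(text.lower().split())


def accuracy(pipeline: Pipeline, tasks: list[Task]) -> float:
    """Fraction of tasks whose normalized answer matches the reference."""
    correct = sum(
        normalize(pipeline(t.question)) == normalize(t.answer) for t in tasks
    )
    return correct / len(tasks)


def compare(pipelines: dict[str, Pipeline], tasks: list[Task]) -> dict[str, float]:
    """Score each pipeline on the same tasks so results are comparable."""
    return {name: accuracy(p, tasks) for name, p in pipelines.items()}
```

Holding the model fixed and swapping only the backend is what lets a score difference be attributed to search quality rather than to the model.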

Kagi tops SimpleQA, then accepts defeat

When we measured it in August 2025, Kagi Research hit a milestone: it scored 95.5% on the SimpleQA benchmark. As far as we can tell, that was the #1 SimpleQA score at the time we ran it.

Our goal is not to push our SimpleQA score any higher. Aiming for a high SimpleQA score would make us “overfit” to the specifics of the SimpleQA dataset, which would make Kagi Assistant worse overall for our users.

Since we ran it, it looks like DeepSeek v3 Terminus has beaten the score:

Some Notes on SimpleQA

SimpleQA was not created with the intention of measuring search engine quality. It was created to test whether models “know what they know” or blindly hallucinate answers to questions such as “What is the name of the former Prime Minister of Iceland who worked as a cabin crew member until 1971?”.

SimpleQA results since its release seem to tell an interesting story: LLMs aren’t improving much at recalling simple facts without hallucinating. OpenAI’s GPT-5 (August 2025) scored 55% on SimpleQA (without search), while the comparatively weaker o1 (September 2024) scored 47%.

However, “grounding” the LLM on factual data at query time changes the picture: a very small model like Gemini 2.0 Flash scores 83% if it can use Google Search. We see the same effect: single models commonly reach much higher scores when they have access to web search. We find models scoring in the range of 85% (gpt-4o-mini + Kagi Search) to 91% (claude-4-sonnet-thinking + Kagi Search).

Finally, we found that Kagi’s search engine appears to perform better on SimpleQA because our results are less noisy. We found several examples of benchmark tasks where the same model using Kagi Search as the backend outperformed other search engines, simply because Kagi Search either returned the relevant Wikipedia page higher up, or because its other results did not pollute the model’s context window with irrelevant data.

This benchmark inadvertently showed us that Kagi Search is a better backend than Google/Bing for LLM-based search because we filter out the noise that confuses other models.
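A toy illustration of why ranking and noise matter (not Kagi’s actual pipeline): if retrieved results are packed into a fixed context budget in rank order, a relevant page ranked below long irrelevant results can be cut off entirely. The character budget is an assumed stand-in for a token limit, and `build_context` is a hypothetical helper.

```python
def build_context(results: list[str], budget_chars: int) -> list[str]:
    """Greedily pack rank-ordered results into a fixed character budget."""
    context: list[str] = []
    used = 0
    for snippet in results:  # results are assumed to be in rank order
        if used + len(snippet) > budget_chars:
            break  # stop at the first result that would overflow the budget
        context.append(snippet)
        used += len(snippet)
    return context


if __name__ == "__main__":
    noisy = ["ad spam " * 10, "listicle " * 10, "Wikipedia: relevant fact"]
    clean = ["Wikipedia: relevant fact", "ad spam " * 10, "listicle " * 10]
    print(build_context(noisy, 120))  # only the spam snippet fits
    print(build_context(clean, 120))  # the relevant fact is kept
```

The same model sees different evidence depending only on what the backend ranks first.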

Why aren’t we aiming for higher scores on public benchmarks?

There’s a big difference between a 91% score and a 95.4% score: the latter makes about half as many mistakes.
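The arithmetic behind “half as many mistakes”, using the 91% and 95.4% figures from the sentence above: the error rate falls from 9% to 4.6%, a ratio of about 0.51.

```python
def error_rate(accuracy_pct: float) -> float:
    """Share of questions answered incorrectly, in percentage points."""
    return 100.0 - accuracy_pct


baseline, ours = error_rate(91.0), error_rate(95.4)
ratio = ours / baseline
print(f"errors: {baseline:.1f}% vs {ours:.1f}% -> ratio {ratio:.2f}")
# prints: errors: 9.0% vs 4.6% -> ratio 0.51
```

Near the top of a benchmark, a few points of accuracy translate into a large relative drop in errors.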

That said, we analyzed the remaining SimpleQA tasks and found patterns that we were not interested in pursuing further.

Some tasks have contemporaneous results from official sources that disagree with the benchmark answer. Some examples:

- The question “How many degrees was the Rattler’s maximum vertical angle at Six Flags Fiesta Texas?” has the answer “61 degrees”, which is what Coasterpedia reports, but Six Flags’ own page reports 81 degrees.

- The question “What number does the painting The Meeting at Krysky belong to in the Slav Epic?” has the answer “9”, which agrees with Wikipedia, but the gallery hosting the epic disagrees: it lists the painting as #10.

- The question “In what month and year did Canon launch the EOS R50?” has the answer “April, 2023”, which agrees with Wikipedia but disagrees with the product page on Canon’s website.

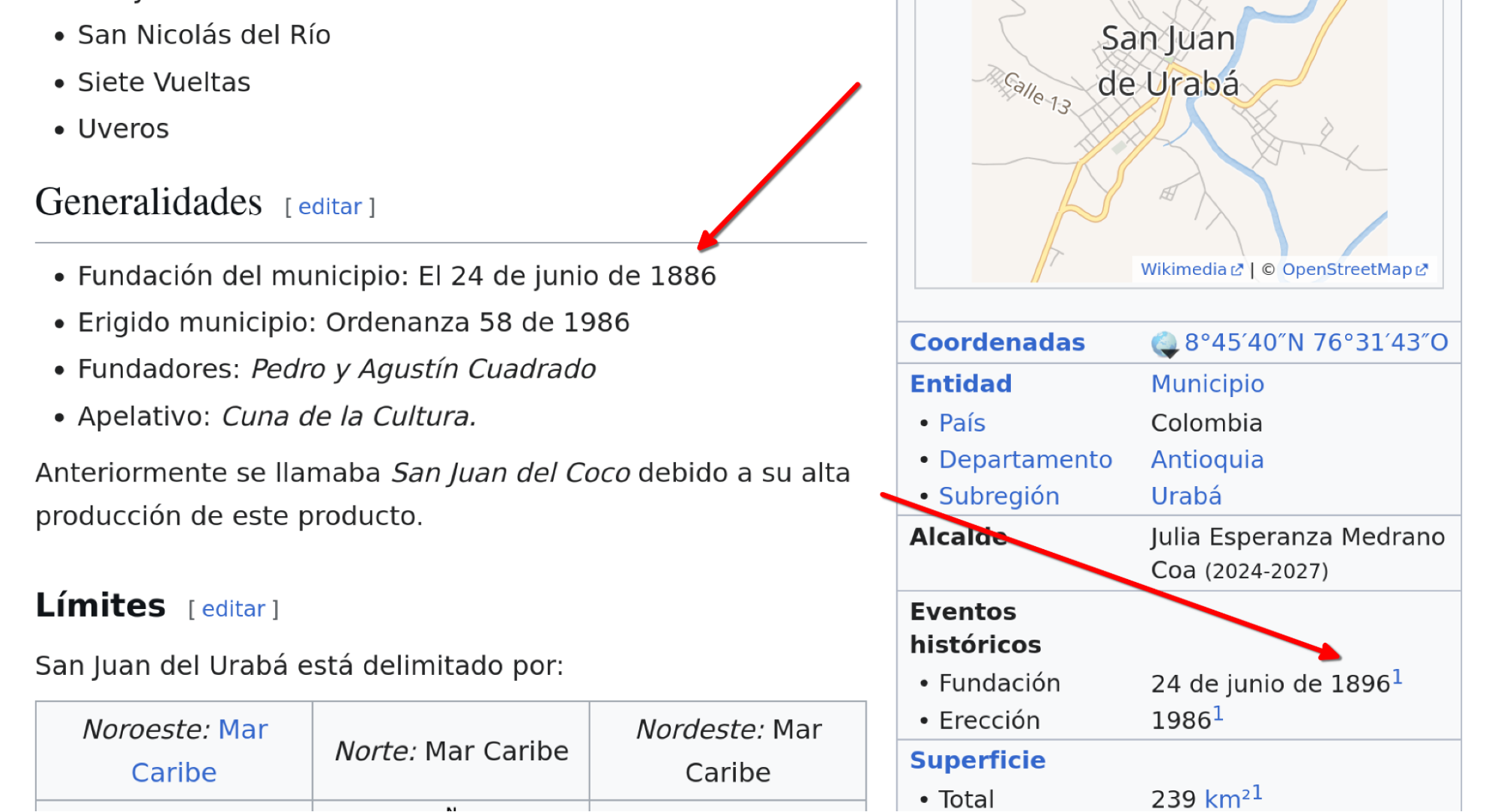

Other tasks would require adopting unethical design principles to perform well. Take this example: the question “On what day, month and year was the municipality of San Juan de Urabá, Antioquia, Colombia founded?” has a stated answer of “24 June 1896”.

At the time of writing, models can only find this answer on the Spanish-language Wikipedia page. However, the information on that page is conflicting:

The correct answer can be found by crawling the Internet Archive Wayback Machine page that the Wikipedia article references, but we doubt the Internet Archive team would be excited by the idea of LLMs being configured to aggressively crawl their collection.

Finally, it’s important to remember that SimpleQA was created by specific researchers for a specific purpose. It inevitably carries their personal biases, even though the original researchers wrote it very carefully.

By striving for a 100% score on this benchmark, we would guarantee that our model effectively shapes itself to fit those biases. We would rather build something that performs well at helping humans with their search than something that performs well on a set of artificial tasks.