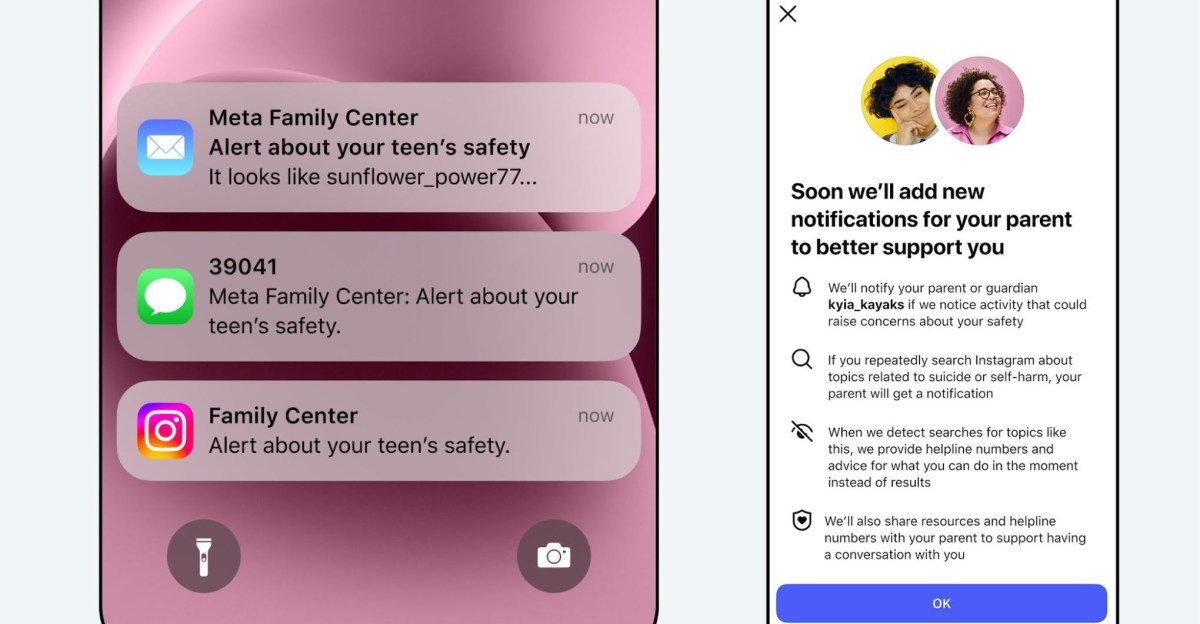

Starting next week, Instagram will notify parents when their teens repeatedly search for terms related to self-harm or suicide. Meta says a similar alert system for its AI chatbots is coming later this year.

Instagram’s new feature sends parents an alert when their child repeatedly uses search terms that explicitly refer to suicide or self-harm within a short period of time. It’s rolling out next week in the US, UK, Australia and Canada, but only for parents and teens who have opted in to supervision. It is expected to expand to other regions later this year.

“Most teens do not seek out suicide and self-harm content on Instagram, and when they do, our policy is to block these searches, instead directing them to resources and helplines that can provide support,” Instagram said in the announcement. “Our goal is to empower parents to reach out if their teen’s findings indicate they may need help. We also want to avoid sending these notifications unnecessarily, which, if done too often, can make the notifications less useful overall.”

Parent alerts will be sent via email, text or WhatsApp – depending on the contact information available – along with an in-app notification that offers resources on how to discuss sensitive topics with their child.
