When AI systems fail, will they fail by systematically pursuing goals we did not intend? Or will they fail by being a hot mess, taking nonsensical actions that advance no goal at all?

📄Paper, 💻Code

Research done as part of the first Anthropic Fellows Program during summer 2025.

When AI systems fail, will they fail by systematically pursuing the wrong goals, or by being a hot mess? We decompose the errors of frontier reasoning models into bias (systematic) and variance (inconsistent) components and find that, as tasks become harder and reasoning becomes longer, model failures are increasingly dominated by inconsistency rather than systematic misalignment. This suggests that future AI failures may look more like industrial accidents than the coherent pursuit of a goal we did not train them to pursue.

Introduction

As AI becomes more capable, we entrust it with increasingly consequential tasks. This makes understanding how these systems can fail even more critical to safety. A central concern in AI alignment is that superintelligent systems may coherently pursue the wrong goals: the classic paperclip maximizer scenario. But there is another possibility: AI may fail not because of systematic misalignment, but because of inconsistency, meaning unpredictable, self-undermining behavior that does not optimize for any coherent objective. That is, AI can fail in the same way that humans often fail: by being a hot mess.

This paper builds on the hot mess theory of misalignment (Sohl-Dickstein, 2023), which surveyed experts to independently rank different entities (including humans, animals, machine learning models, and organizations) by intelligence and by coherence. It found that smarter entities are subjectively judged to behave less coherently. We take this hypothesis from survey data to empirical measurements on frontier AI systems by asking: as models become more intelligent and tackle harder tasks, do their failures look more like systematic misalignment, or more like a hot mess?

Measuring inconsistency: A bias-variance decomposition.

To measure inconsistency we decompose AI errors using the classic bias-variance framework:

$$\text{Error} = \text{Bias}^2 + \text{Variance}$$

- Bias captures consistent, systematic errors: reliably reaching the wrong outcome

- Variance captures inconsistent errors: unpredictable outcomes across samples

We define inconsistency as the fraction of error attributable to variance:

$$\text{Inconsistency} = \frac{\text{Variance}}{\text{Error}}$$

An inconsistency of 0 means that all errors are systematic (the classic misalignment risk). An inconsistency of 1 means that all errors are random (the hot mess scenario). Importantly, this metric is independent of overall performance: a model can improve while becoming either more or less consistent.
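To make the metric concrete, here is a minimal sketch for the simplest case: repeated scalar answers to one task, scored with squared error. The function name `bias_variance` and the toy numbers are assumptions for illustration; the evaluation in the paper may use a different loss and estimator.

```python
import numpy as np

def bias_variance(samples, target):
    """Decompose the mean squared error of repeated samples on one task.

    Returns (bias_sq, variance, error, inconsistency). Squared error is an
    assumption of this sketch, not necessarily the loss used in the paper.
    """
    samples = np.asarray(samples, dtype=float)
    mean_prediction = samples.mean()
    bias_sq = (mean_prediction - target) ** 2   # systematic part
    variance = samples.var()                    # population variance (ddof=0)
    error = np.mean((samples - target) ** 2)    # equals bias_sq + variance
    inconsistency = variance / error if error > 0 else float("nan")
    return bias_sq, variance, error, inconsistency

# Always the same wrong answer: all error is bias, inconsistency is 0.
systematic = bias_variance([3.0, 3.0, 3.0, 3.0], target=1.0)

# Right on average but scattered: all error is variance, inconsistency is 1.
scattered = bias_variance([-1.0, 3.0, -1.0, 3.0], target=1.0)

# A mixture: bias^2 = 4, variance = 1, error = 5, inconsistency = 0.2.
mixed = bias_variance([2.0, 4.0, 2.0, 4.0], target=1.0)
```

Using the population variance (`ddof=0`) makes the identity error = bias² + variance hold exactly on the sample, which keeps the ratio between 0 and 1.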

Key findings

We evaluated a range of models and tasks.

Finding 1: Longer reasoning → more inconsistency

Across all tasks and models, the more time models spend reasoning and taking actions, the more inconsistent they become. This holds whether we measure reasoning tokens, agent actions, or optimizer steps.

Finding 2: Scale improves coherence in easy tasks, but not in difficult tasks

How does inconsistency change with model scale? The answer depends on the difficulty of the task:

- Easy tasks: larger models become more consistent

- Hard tasks: larger models become more inconsistent or remain unchanged

This suggests that scaling alone will not eliminate inconsistency. As more capable models tackle harder problems, variance-dominated failures persist or get worse.

Finding 3: Natural “overthinking” increases inconsistency more than reasoning budgets reduce it

We found that when models spontaneously reason for longer than usual on a problem (compared to their median), inconsistency increases dramatically. Meanwhile, deliberately increasing the reasoning budget via API settings yields only modest improvements in consistency. Natural variation dominates.

Finding 4: Ensembling reduces inconsistency

Aggregating multiple samples reduces variance (as expected from theory), providing a path to more consistent behavior, although this may be impractical for real-world agentic tasks where actions are irreversible.
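The theoretical expectation can be illustrated with a small simulation. This is a sketch under stated assumptions: outputs are modeled as a fixed bias plus independent Gaussian noise around a target of 0, and the function name `ensemble_inconsistency` and its default parameters are hypothetical. Averaging k independent samples leaves the bias unchanged and divides the variance by roughly k, so the variance share of the error falls.

```python
import numpy as np

def ensemble_inconsistency(k, bias=0.5, noise_std=1.0, trials=20000, seed=0):
    """Variance / error for the average of k independent noisy samples.

    Toy model (an assumption of this sketch): each sample is
    target + bias + Gaussian noise, with the target fixed at 0.
    """
    rng = np.random.default_rng(seed)
    samples = bias + noise_std * rng.standard_normal((trials, k))
    ensembled = samples.mean(axis=1)     # one ensembled answer per trial
    bias_sq = ensembled.mean() ** 2      # unchanged by averaging
    variance = ensembled.var()           # shrinks roughly as 1 / k
    return variance / (bias_sq + variance)

for k in (1, 4, 16):
    print(k, round(ensemble_inconsistency(k), 3))
```

In this toy model the ratio is about 0.8 for a single sample and about 0.2 for an ensemble of 16. Real model samples may be correlated, in which case the reduction would be smaller.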

Why should we expect inconsistency? LLMs as dynamical systems

A key conceptual point: LLMs are dynamical systems, not optimizers. When a language model generates text or takes actions, it traces a trajectory through a high-dimensional state space. It has to be trained to act as an optimizer, and trained to align with human intent. It is unclear which of these properties will be more robust as we scale.

It is extremely difficult to force a general dynamical system to act as a coherent optimizer. Often the number of constraints required for monotonic progress toward a goal grows exponentially with the dimensionality of the state space. We should not expect AI to act as a consistent optimizer without considerable effort, and this difficulty does not automatically decrease with scale.

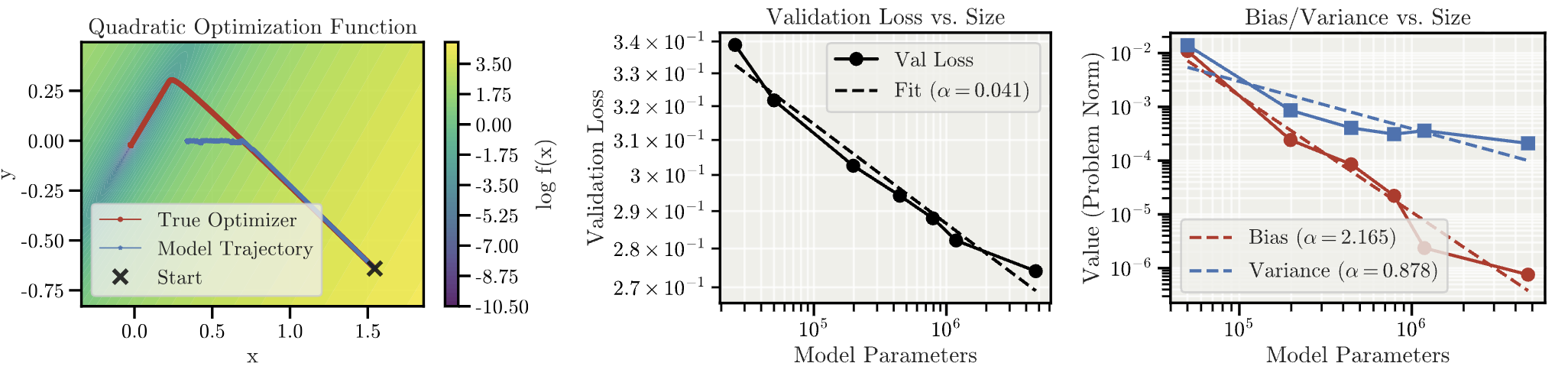

The synthetic optimizer: a controlled experiment

To test this directly, we designed a controlled experiment: training transformers to explicitly emulate an optimizer. We generate training data by steepest descent on a quadratic loss function, then train models of different sizes to predict the next optimization step given the current state (essentially: training a “mesa-optimizer”).
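The data-generation step can be sketched as follows. Everything specific here is an assumption for illustration: the function names, the dimensions, the random positive-definite matrix `A`, and the fixed step size of 1 / λ_max (the paper's setup may differ, for example by using a line search). Each trajectory yields (current state, next state) pairs that a model could be trained to predict.

```python
import numpy as np

def quadratic_loss(x, A):
    """Loss 0.5 * x^T A x, evaluated over any leading batch dimensions."""
    return 0.5 * np.einsum("...i,ij,...j->...", x, A, x)

def make_trajectories(num_traj=8, dim=4, steps=20, seed=0):
    """Run fixed-step gradient (steepest) descent from random starting points."""
    rng = np.random.default_rng(seed)
    m = rng.standard_normal((dim, dim))
    A = m @ m.T + np.eye(dim)                      # symmetric positive definite
    step_size = 1.0 / np.linalg.eigvalsh(A).max()  # guarantees the loss never rises
    x = rng.standard_normal((num_traj, dim))
    states = [x]
    for _ in range(steps):
        grad = x @ A                               # gradient of the loss (A is symmetric)
        x = x - step_size * grad
        states.append(x)
    return A, np.stack(states, axis=1)             # shape (num_traj, steps + 1, dim)

A, states = make_trajectories()
inputs, targets = states[:, :-1], states[:, 1:]    # next-step prediction pairs
losses = quadratic_loss(states, A)                 # shape (num_traj, steps + 1)
```

A model trained on `inputs` and `targets` can then be rolled out for many steps, and the bias and variance of its trajectories compared against the true minimum at the origin.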

The results are interesting:

- Inconsistency grows with trajectory length. Even in this idealized setting, the more optimization steps the models take (and the closer they get to the true solution), the more inconsistent they become.

- Scale reduces bias faster than variance. Larger models learn the correct objective faster than they learn to pursue it reliably. The gap between “knowing what to do” and “consistently doing it” grows with scale.

Implications for AI safety

Our results are evidence that future AI failures may look more like industrial accidents than the coherent pursuit of goals that were not trained for. (Think: an AI intends to run a nuclear power plant, but gets distracted reading French poetry, and there is a meltdown.) However, coherent pursuit of poorly chosen goals that we did train for remains a problem. Specifically:

- Variance dominates on complex tasks. When frontier models fail on difficult problems requiring extended reasoning, the failures tend to be predominantly inconsistent rather than systematic.

- Scale does not imply supercoherence. Making models larger improves overall accuracy but does not reliably reduce inconsistency on hard problems.

- This shifts alignment priorities. If capable AI is more likely to be a hot mess than a coherent optimizer of the wrong goal, this increases the relative importance of research targeting reward hacking and goal misspecification during training (the bias term), rather than focusing primarily on aligning and constraining a perfect optimizer.

- Unpredictability is still dangerous. Inconsistent AI is not safe AI. Industrial accidents can cause serious harm. But the type of risk differs from classic misalignment scenarios, and our mitigations should adapt accordingly.

Conclusion

We use a bias-variance decomposition to systematically study how AI inconsistency scales with model intelligence and task complexity. The evidence suggests that as AI tackles harder problems requiring more reasoning and action, its failures become dominated by variance rather than bias. This does not eliminate AI risk, but it changes what that risk looks like, especially for the problems that are currently hardest for models, and it should inform how we prioritize alignment research.

Acknowledgements

We thank Andrew Saxe, Brian Cheung, Kit Fraser-Taliente, Igor Shilov, Stewart Slocum, Aidan Ewart, David Duvenaud, and Tom Adamczewski for extremely helpful discussions on the topics and results in this paper.
