Last week, this commit stopped me mid-scroll:

858d2e434dd 8372584: [Linux]: Replace reading proc to get thread CPU time with clock_gettime

The diffstat was interesting: +96 insertions, -54 deletions. A new 55-line JMH benchmark accounts for part of the insertions, so the production code actually shrank.

The core of the change is in os_linux.cpp, in static jlong user_thread_cpu_time(Thread *thread), which now reads a CPUCLOCK_VIRT clock instead of parsing /proc.

This function is the implementation behind ThreadMXBean.getCurrentThreadUserTime(). To get the user CPU time of the current thread, the old code did the following:

- formatting the path to /proc/self/task//stat
- opening that file
- reading it into a stack buffer
- parsing a hostile format in which the command name may contain parentheses (hence strrchr for the last ')')
- running sscanf to extract fields 13 and 14
- converting clock ticks to nanoseconds
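To make those steps concrete, here is a minimal user-space sketch of the same sequence in plain C++. It is not the HotSpot code: parse_stat_times and proc_user_time_nanos are hypothetical names, and the sketch assumes the stat layout documented in proc(5), where utime and stime are fields 14 and 15 counting from one (13 and 14 counting from zero). Skipping the intervening fields takes thirteen sscanf conversion specifiers in total.

```cpp
#include <cassert>
#include <cstdio>
#include <cstring>
#include <sys/syscall.h>
#include <unistd.h>

// Extracts utime and stime (in clock ticks) from one line of a
// /proc/<pid>/task/<tid>/stat file. Returns false if the line is malformed.
bool parse_stat_times(const char* line, unsigned long* utime, unsigned long* stime) {
  // Field 2 is "(comm)", and comm itself may contain ')' or spaces,
  // so locate the LAST ')' instead of the first one.
  const char* p = strrchr(line, ')');
  if (p == nullptr) return false;
  // Skip state, ppid, pgrp, session, tty_nr, tpgid, flags, minflt,
  // cminflt, majflt and cmajflt, then read utime and stime.
  int n = sscanf(p + 1,
                 " %*c %*d %*d %*d %*d %*d %*u %*lu %*lu %*lu %*lu %lu %lu",
                 utime, stime);
  return n == 2;
}

// User CPU time of the calling thread in nanoseconds, or -1 on failure,
// obtained the "old" way: format path, open, read, parse, convert.
long long proc_user_time_nanos() {
  char path[64];
  snprintf(path, sizeof(path), "/proc/self/task/%ld/stat",
           static_cast<long>(syscall(SYS_gettid)));
  FILE* f = fopen(path, "r");
  if (f == nullptr) return -1;
  char buf[1024];  // stack buffer for the stat line
  bool ok = fgets(buf, sizeof(buf), f) != nullptr;
  fclose(f);
  unsigned long utime = 0, stime = 0;
  if (!ok || !parse_stat_times(buf, &utime, &stime)) return -1;
  long ticks_per_sec = sysconf(_SC_CLK_TCK);  // usually 100 on Linux
  if (ticks_per_sec <= 0) return -1;
  return static_cast<long long>(utime) * (1000000000LL / ticks_per_sec);
}
```

Parsing a line such as "1234 (my) (proc)) S 1 1234 1234 0 -1 4194304 100 0 0 0 250 17 ..." yields utime 250 and stime 17; searching for the first ')' instead of the last would misread every field after the command name.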

For comparison, here's what getCurrentThreadCpuTime() does, and has always done:

```cpp
jlong os::current_thread_cpu_time() {
  return os::Linux::thread_cpu_time(CLOCK_THREAD_CPUTIME_ID);
}

jlong os::Linux::thread_cpu_time(clockid_t clockid) {
  struct timespec tp;
  clock_gettime(clockid, &tp);
  return (jlong)(tp.tv_sec * NANOSECS_PER_SEC + tp.tv_nsec);
}
```

Just one clock_gettime() call. There's no file I/O, no complex parsing and no buffers to manage.

The original bug report puts it bluntly: “getCurrentThreadUserTime is 30x-400x slower than getCurrentThreadCpuTime”.

The gap widens under concurrency. Why is clock_gettime() so much faster? Both approaches require entering the kernel; the difference is what happens next.

The /proc path:

- open() syscall: VFS dispatch + dentry lookup
- procfs synthesizes the file contents at read time
- the kernel formats a string into a buffer
- read() syscall, copy to userspace
- sscanf() parsing in userspace
- close() syscall

The clock_gettime(CLOCK_THREAD_CPUTIME_ID) path:

- a single syscall → posix_cpu_clock_get() → cpu_clock_sample() → task_sched_runtime() → reads directly from sched_entity

The /proc path involves multiple syscalls, VFS machinery, kernel-side string formatting and userspace parsing. The clock_gettime() path is one syscall with a direct function call chain.
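A rough way to see the difference from user space is to time both paths in a loop. This is an illustrative sketch, not the benchmark used in this post: it times only open()/read()/close() on the stat file against a single clock_gettime(CLOCK_THREAD_CPUTIME_ID) call, skips the parsing step entirely, and uses std::chrono::steady_clock for timing, so absolute numbers will vary by machine and kernel. read_own_stat, read_thread_clock and avg_ns_per_call are hypothetical helper names.

```cpp
#include <cassert>
#include <chrono>
#include <cstdio>
#include <fcntl.h>
#include <sys/syscall.h>
#include <time.h>
#include <unistd.h>

// The /proc path without parsing: open() + read() + close().
// Note: calling gettid here adds one extra syscall per iteration.
bool read_own_stat() {
  char path[64];
  snprintf(path, sizeof(path), "/proc/self/task/%ld/stat",
           static_cast<long>(syscall(SYS_gettid)));
  int fd = open(path, O_RDONLY);
  if (fd < 0) return false;
  char buf[1024];
  ssize_t n = read(fd, buf, sizeof(buf));
  close(fd);
  return n > 0;
}

// The clock_gettime() path: a single syscall.
bool read_thread_clock() {
  struct timespec ts;
  return clock_gettime(CLOCK_THREAD_CPUTIME_ID, &ts) == 0;
}

// Average wall-clock nanoseconds per call of fn, or -1 if any call fails.
template <typename Fn>
double avg_ns_per_call(Fn fn, int iterations) {
  auto start = std::chrono::steady_clock::now();
  for (int i = 0; i < iterations; ++i) {
    if (!fn()) return -1.0;
  }
  auto elapsed = std::chrono::steady_clock::now() - start;
  return std::chrono::duration<double, std::nano>(elapsed).count() / iterations;
}
```

Printing avg_ns_per_call(read_own_stat, 100000) next to avg_ns_per_call(read_thread_clock, 100000) gives a first impression; the ratio is more telling than the absolute values.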

Under concurrent load, the /proc approach also suffers from kernel lock contention. The bug report notes:

“Reading proc is slow (hence this procedure is placed under the slow_thread_cpu_time(…) method) and can cause noticeable spikes in case of contention for kernel resources.”

So why didn't getCurrentThreadUserTime() just use clock_gettime() from the beginning?

The answer is (probably) POSIX. The standard mandates that CLOCK_THREAD_CPUTIME_ID returns the total CPU time (user + system), and there is no portable way to request user time alone. Hence the /proc-based implementation.

The Linux port of OpenJDK isn't limited to what POSIX defines; it can use Linux-specific features. Let's see how.

Since 2.6.12 (released in 2005), the Linux kernel has encoded clock type information directly in the clockid_t value. When you call pthread_getcpuclockid(), you get back a clockid with a specific bit pattern:

- Bit 2: thread vs. process clock
- Bits 1-0: clock type
  - 00 = PROF
  - 01 = VIRT (user time only)
  - 10 = SCHED (user + system, POSIX-compliant)
  - 11 = FD

The remaining bits encode the target PID/TID. We’ll come back to that in the bonus section.

The POSIX-compliant pthread_getcpuclockid() returns a clockid whose low bits are 10 (SCHED). But if you flip those bits to 01 (VIRT), clock_gettime() returns only user time.
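To make the layout concrete, here is a small decoder modeled on the kernel's CPUCLOCK_PID, CPUCLOCK_PERTHREAD and CPUCLOCK_WHICH macros. The function and constant names are hypothetical user-space mirrors, and the code assumes a 32-bit clockid_t and an arithmetic right shift of negative values (guaranteed since C++20, and what GCC and Clang do anyway). pthread_clock_decodes_to_self checks the claim that pthread_getcpuclockid() hands back a per-thread SCHED clock encoding the caller's TID; that matches glibc as far as I can tell, but treat it as an assumption for other C libraries.

```cpp
#include <cassert>
#include <pthread.h>
#include <sys/syscall.h>
#include <sys/types.h>
#include <time.h>
#include <unistd.h>

// User-space mirrors of the kernel's clock type values (assumed stable).
constexpr clockid_t CPUCLOCK_VIRT = 1;            // user time only
constexpr clockid_t CPUCLOCK_SCHED = 2;           // user + system
constexpr clockid_t CPUCLOCK_PERTHREAD_MASK = 4;  // bit 2
constexpr clockid_t CPUCLOCK_CLOCK_MASK = 3;      // bits 1-0

// Bits 31-3 hold ~PID/TID; undo the NOT to recover the ID (0 = current).
constexpr pid_t cpuclock_pid(clockid_t id) {
  return static_cast<pid_t>(~(id >> 3));
}

// Bit 2: per-thread (true) or per-process (false) clock.
constexpr bool cpuclock_perthread(clockid_t id) {
  return (id & CPUCLOCK_PERTHREAD_MASK) != 0;
}

// Bits 1-0: the clock type (PROF, VIRT, SCHED or FD).
constexpr clockid_t cpuclock_which(clockid_t id) {
  return id & CPUCLOCK_CLOCK_MASK;
}

// True if pthread_getcpuclockid() returned a per-thread SCHED clock
// that encodes the calling thread's kernel TID.
bool pthread_clock_decodes_to_self() {
  clockid_t cid;
  if (pthread_getcpuclockid(pthread_self(), &cid) != 0) return false;
  return cpuclock_perthread(cid) && cpuclock_which(cid) == CPUCLOCK_SCHED &&
         cpuclock_pid(cid) == static_cast<pid_t>(syscall(SYS_gettid));
}
```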

The new implementation:

```cpp
static bool get_thread_clockid(Thread* thread, clockid_t* clockid, bool total) {
  constexpr clockid_t CLOCK_TYPE_MASK = 3;
  constexpr clockid_t CPUCLOCK_VIRT = 1;

  int rc = pthread_getcpuclockid(thread->osthread()->pthread_id(), clockid);
  if (rc != 0) {
    // Thread may have terminated
    assert_status(rc == ESRCH, rc, "pthread_getcpuclockid failed");
    return false;
  }

  if (!total) {
    // Flip to CPUCLOCK_VIRT for user-time-only
    *clockid = (*clockid & ~CLOCK_TYPE_MASK) | CPUCLOCK_VIRT;
  }

  return true;
}

static jlong user_thread_cpu_time(Thread *thread) {
  clockid_t clockid;
  bool success = get_thread_clockid(thread, &clockid, false);

  return success ? os::Linux::thread_cpu_time(clockid) : -1;
}
```

And that's all. The new version has no file I/O, no buffers and certainly no sscanf() with thirteen format specifiers.
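Outside the JVM, the same trick fits in a few lines of plain C++. This is a minimal sketch under the same assumptions as the HotSpot code (Linux and the clockid bit layout described above); user_cpu_time_nanos is a hypothetical name, and unlike HotSpot it simply returns -1 on errors instead of asserting.

```cpp
#include <cassert>
#include <pthread.h>
#include <time.h>

constexpr clockid_t CLOCK_TYPE_MASK = 3;  // bits 1-0 select the clock type
constexpr clockid_t CPUCLOCK_VIRT = 1;    // user time only

// User CPU time of `thread` in nanoseconds, or -1 on error.
long long user_cpu_time_nanos(pthread_t thread) {
  clockid_t clockid;
  // POSIX part: a per-thread clock that measures user + system time.
  if (pthread_getcpuclockid(thread, &clockid) != 0) return -1;  // e.g. ESRCH
  // Linux-specific part: flip the clock type from SCHED to VIRT.
  clockid = (clockid & ~CLOCK_TYPE_MASK) | CPUCLOCK_VIRT;
  struct timespec ts;
  if (clock_gettime(clockid, &ts) != 0) return -1;
  return static_cast<long long>(ts.tv_sec) * 1000000000LL + ts.tv_nsec;
}
```

Calling user_cpu_time_nanos(pthread_self()) returns the caller's user time; passing another live thread's handle works the same way, which is why the JVM can use it for arbitrary threads.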

To measure it, I used a simple JMH benchmark with a main() method so it can be run straight from the IDE:

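```java
import java.lang.management.ManagementFactory;
import java.lang.management.ThreadMXBean;
import java.util.concurrent.TimeUnit;

import org.openjdk.jmh.annotations.*;
import org.openjdk.jmh.runner.Runner;
import org.openjdk.jmh.runner.RunnerException;
import org.openjdk.jmh.runner.options.Options;
import org.openjdk.jmh.runner.options.OptionsBuilder;

@State(Scope.Benchmark)
@Warmup(iterations = 2, time = 5)
@Measurement(iterations = 5, time = 5)
@BenchmarkMode(Mode.SampleTime)
@OutputTimeUnit(TimeUnit.MICROSECONDS)
@Threads(16)
@Fork(value = 1)
public class ThreadMXBeanBench {
    static final ThreadMXBean mxThreadBean = ManagementFactory.getThreadMXBean();
    static long user; // To avoid dead-code elimination

    @Benchmark
    public void getCurrentThreadUserTime() throws Throwable {
        user = mxThreadBean.getCurrentThreadUserTime();
    }

    public static void main(String[] args) throws RunnerException {
        Options opt = new OptionsBuilder()
                .include(ThreadMXBeanBench.class.getSimpleName())
                .build();
        new Runner(opt).run();
    }
}
```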

Aside: this is an unscientific benchmark; there are other processes running on my desktop, etc. Anyway, here's the setup: a Ryzen 9950X, on JDK main branch commit 8ab7d3b89f656e5c. For the "before" case, I reverted the fix instead of checking out the old revision.

Here is the result:

```
Benchmark                                           Mode         Cnt      Score   Error  Units
ThreadMXBeanBench.getCurrentThreadUserTime          sample   8912714     11.186 ± 0.006  us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.00    sample                2.000          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.50    sample               10.272          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.90    sample               17.984          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.95    sample               20.832          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.99    sample               27.552          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.999   sample               56.768          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.9999  sample               79.709          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p1.00    sample             1179.648          us/op
```

A single invocation took 11 microseconds on average, and the median (p0.50) was about 10 microseconds.

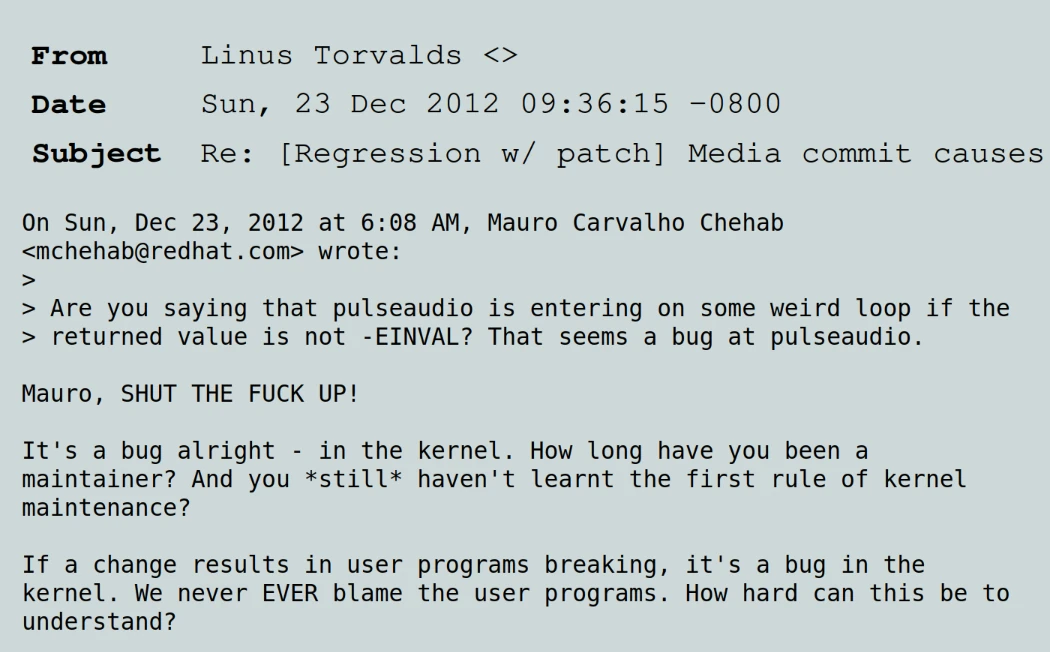

The CPU profile looks like this:

The CPU profile confirms that each getCurrentThreadUserTime() invocation makes multiple syscalls; in fact, most of the CPU time is spent in syscalls. We can see files being opened and closed. Closing alone generates multiple syscalls, including futex locks.

Let’s see the benchmark results with the improvements applied:

```
Benchmark                                           Mode         Cnt      Score   Error  Units
ThreadMXBeanBench.getCurrentThreadUserTime          sample  11037102      0.279 ± 0.001  us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.00    sample                0.070          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.50    sample                0.310          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.90    sample                0.440          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.95    sample                0.530          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.99    sample                0.610          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.999   sample                1.030          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.9999  sample                3.088          us/op
ThreadMXBeanBench.getCurrentThreadUserTime:p1.00    sample             1230.848          us/op
```

The average dropped from 11 microseconds to 279 nanoseconds, meaning the fixed version's latency is about 40 times lower than the old one's. That's not a 400x improvement, but it is within the 30x-400x range from the original report, and the gap may well be larger on a different setup. Let's take a look at the new profile:

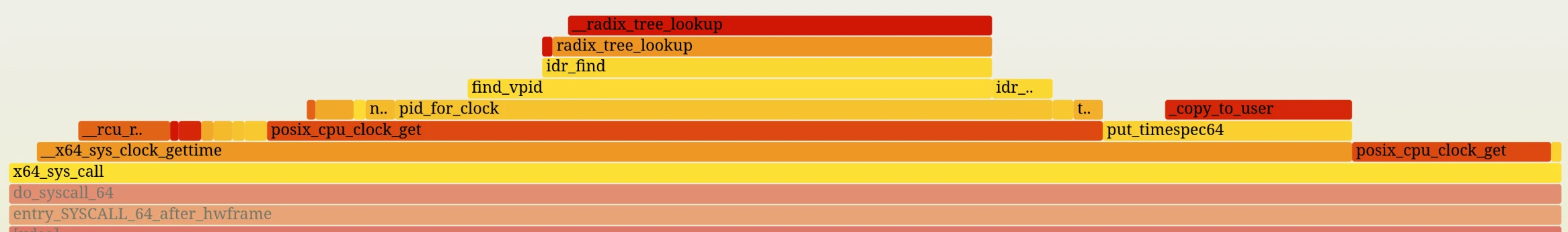

The profile is very clean: there is only one syscall. If the profile is to be trusted, most of the time is spent outside the kernel, in the JVM.

The bit encoding is barely documented, but it is stable: it hasn't changed in 20 years. You won't find it in the clock_gettime(2) man page; the closest thing to official documentation is the kernel source itself, namely kernel/time/posix-cpu-timers.c and the CPUCLOCK_* macros.

The kernel’s policy is clear: do not break user space.

My opinion: If glibc depends on it, it’s not going away.

When looking at the profiler data from the "after" run, I noticed another optimization opportunity: a good portion of the remaining syscall time is spent inside a radix tree lookup. Have a look:

When the JVM calls pthread_getcpuclockid(), it receives a clockid that encodes the thread's ID. When that clockid is passed to clock_gettime(), the kernel extracts the thread ID and performs a radix tree lookup to find the struct pid associated with that ID.

However, the Linux kernel has a fast path. If the PID encoded in the clockid is 0, the kernel interprets it as "the current thread", skips the radix tree lookup altogether and goes straight to the current task's structure.

The OpenJDK fix currently obtains the specific TID, flips the bits, and passes the result to clock_gettime(). This forces the kernel onto the generalized path (the radix tree lookup).

The source code looks like this:

```c
/*
 * Functions for validating access to tasks.
 */
static struct pid *pid_for_clock(const clockid_t clock, bool gettime)
{
	[...]
	/*
	 * If the encoded PID is 0, then the timer is targeted at current
	 * or the process to which current belongs.
	 */
	if (upid == 0)
		// the fast path: current task lookup, cheap
		return thread ? task_pid(current) : task_tgid(current);

	// the generalized path: radix tree lookup, more expensive
	pid = find_vpid(upid);
	[...]
```

If the JVM built the entire clockid by hand with PID=0 encoded (instead of obtaining it through pthread_getcpuclockid()), the kernel could take the fast path and avoid the radix tree lookup altogether. The JVM already pokes bits in the clockid, so building it completely from scratch wouldn't be a huge leap in terms of compatibility.

Let’s try it!

First, a refresher on clockid encoding. A clockid is constructed as follows:

```
clockid for TID=42, user-time-only:

1111_1111_1111_1111_1111_1110_1010_1101
└───────────────~42────────────────┘│└┘
                                    │ └─ 01 = VIRT (user time only)
                                    └─── 1 = per-thread
```

For the current thread, we want PID=0 encoded, which gives ~0 in the upper bits:

```
1111_1111_1111_1111_1111_1111_1111_1101
└─────────────── ~0 ───────────────┘│└┘
                                    │ └─ 01 = VIRT (user time only)
                                    └─── 1 = per-thread
```
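Both bit patterns can be reproduced with a small helper that mimics the kernel's MAKE_THREAD_CPUCLOCK macro. This is a sketch that assumes a 32-bit clockid_t; thread_cpuclock and to_grouped_binary are hypothetical names chosen for illustration.

```cpp
#include <cassert>
#include <cstdint>
#include <string>
#include <sys/types.h>
#include <time.h>

// Mimics the kernel's MAKE_THREAD_CPUCLOCK: ~tid in bits 31-3, the
// per-thread bit (4), and the clock type in bits 1-0. tid 0 = current thread.
constexpr clockid_t thread_cpuclock(pid_t tid, uint32_t type) {
  return static_cast<clockid_t>((~static_cast<uint32_t>(tid) << 3) | 4u | type);
}

// Renders a 32-bit clockid as binary in groups of four bits.
std::string to_grouped_binary(clockid_t id) {
  const uint32_t bits = static_cast<uint32_t>(id);
  std::string out;
  for (int i = 31; i >= 0; --i) {
    out += ((bits >> i) & 1u) ? '1' : '0';
    if (i % 4 == 0 && i != 0) out += '_';
  }
  return out;
}
```

With type 1 (VIRT), thread_cpuclock(42, 1) renders as 1111_1111_1111_1111_1111_1110_1010_1101 and thread_cpuclock(0, 1) as 1111_1111_1111_1111_1111_1111_1111_1101, which is -3 when read as a signed 32-bit clockid_t.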

We can translate this into C++ as:

```cpp
// Linux Kernel internal bit encoding for dynamic CPU clocks:
// [31:3] : Bitwise NOT of the PID or TID (~0 for current thread)
// [2]    : 1 = Per-thread clock, 0 = Per-process clock
// [1:0]  : Clock type (0 = PROF, 1 = VIRT/User-only, 2 = SCHED)
static_assert(sizeof(clockid_t) == 4, "Linux clockid_t must be 32-bit");
constexpr clockid_t CLOCK_CURRENT_THREAD_USERTIME = static_cast<clockid_t>(~0u << 3 | 4 | 1);
```

Then make a teeny change to os::current_thread_cpu_time(bool) so it no longer goes through user_thread_cpu_time():

```diff
 jlong os::current_thread_cpu_time(bool user_sys_cpu_time) {
   if (user_sys_cpu_time) {
     return os::Linux::thread_cpu_time(CLOCK_THREAD_CPUTIME_ID);
   } else {
-    return user_thread_cpu_time(Thread::current());
+    return os::Linux::thread_cpu_time(CLOCK_CURRENT_THREAD_USERTIME);
   }
 }
```

These changes are enough to make getCurrentThreadUserTime() use the kernel's fast path.
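To check from plain C++ that the hand-built clockid reads the same counter as the TID-encoded one, a sketch like the following reads them back to back. The helper names are hypothetical; the check relies only on user CPU time never decreasing, so three interleaved reads (PID 0, TID, PID 0) must come out in non-decreasing order.

```cpp
#include <cassert>
#include <pthread.h>
#include <time.h>

static_assert(sizeof(clockid_t) == 4, "Linux clockid_t must be 32-bit");
// PID 0 (current thread), per-thread bit, VIRT: the kernel's fast path.
constexpr clockid_t CLOCK_CURRENT_THREAD_USERTIME =
    static_cast<clockid_t>(~0u << 3 | 4 | 1);

// Reads `clockid` and returns nanoseconds, or -1 on error.
long long read_clock_nanos(clockid_t clockid) {
  struct timespec ts;
  if (clock_gettime(clockid, &ts) != 0) return -1;
  return static_cast<long long>(ts.tv_sec) * 1000000000LL + ts.tv_nsec;
}

// TID-encoded user-time clock: pthread_getcpuclockid() plus the bit flip.
long long tid_encoded_user_nanos() {
  clockid_t clockid;
  if (pthread_getcpuclockid(pthread_self(), &clockid) != 0) return -1;
  return read_clock_nanos((clockid & ~3) | 1);
}

// PID-0 user-time clock built by hand.
long long pid0_user_nanos() {
  return read_clock_nanos(CLOCK_CURRENT_THREAD_USERTIME);
}
```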

Since we are already in nanosecond territory, I changed the benchmark slightly:

- Increase the iteration and fork counts
- Use only one thread to reduce noise
- Switch the output unit to nanoseconds

These changes are meant to eliminate noise from the rest of my system and give a more accurate measurement of the small delta we expect:

```java
@State(Scope.Benchmark)
@Warmup(iterations = 4, time = 5)
@Measurement(iterations = 10, time = 5)
@BenchmarkMode(Mode.SampleTime)
@OutputTimeUnit(TimeUnit.NANOSECONDS)
@Threads(1)
@Fork(value = 3)
public class ThreadMXBeanBench {
    static final ThreadMXBean mxThreadBean = ManagementFactory.getThreadMXBean();
    static long user; // To avoid dead-code elimination

    @Benchmark
    public void getCurrentThreadUserTime() throws Throwable {
        user = mxThreadBean.getCurrentThreadUserTime();
    }

    public static void main(String[] args) throws RunnerException {
        Options opt = new OptionsBuilder()
                .include(ThreadMXBeanBench.class.getSimpleName())
                .build();
        new Runner(opt).run();
    }
}
```

The version currently in the JDK main branch gives:

```
Benchmark                                           Mode         Cnt       Score   Error  Units
ThreadMXBeanBench.getCurrentThreadUserTime          sample   4347067      81.746 ± 0.510  ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.00    sample                69.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.50    sample                80.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.90    sample                90.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.95    sample                90.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.99    sample                90.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.999   sample               230.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.9999  sample              1980.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p1.00    sample            653312.000          ns/op
```

With manual clockid construction, which takes the kernel fast path, we get:

```
Benchmark                                           Mode         Cnt       Score   Error  Units
ThreadMXBeanBench.getCurrentThreadUserTime          sample   5081223      70.813 ± 0.325  ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.00    sample                59.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.50    sample                70.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.90    sample                70.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.95    sample                70.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.99    sample                80.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.999   sample               170.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p0.9999  sample              1830.000          ns/op
ThreadMXBeanBench.getCurrentThreadUserTime:p1.00    sample            425472.000          ns/op
```

The average dropped from 81.7 ns to 70.8 ns, roughly a 13% improvement, and the improvement shows up across all percentiles. Is it worth the loss of clarity to construct the clockid manually instead of using pthread_getcpuclockid()? I'm not quite sure. The absolute gain is small, and the change makes additional assumptions about kernel internals, including the size of clockid_t. On the other hand, in practice it's still a gain without any downside (famous last words…).
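The same comparison can be approximated outside the JVM by timing clock_gettime() on the two clockids directly. This is a rough sketch, not a substitute for the JMH setup above: avg_clock_gettime_ns, tid_encoded_user_clock and kPid0UserClock are hypothetical names, timing uses std::chrono::steady_clock, and results will depend on the kernel version and machine.

```cpp
#include <cassert>
#include <chrono>
#include <pthread.h>
#include <time.h>

// PID 0 ("current thread"), per-thread bit, VIRT clock type.
constexpr clockid_t kPid0UserClock = static_cast<clockid_t>(~0u << 3 | 4 | 1);

// TID-encoded user-time clock: pthread_getcpuclockid() plus the bit flip.
bool tid_encoded_user_clock(clockid_t* out) {
  if (pthread_getcpuclockid(pthread_self(), out) != 0) return false;
  *out = (*out & ~3) | 1;  // clock type bits: SCHED -> VIRT
  return true;
}

// Average wall-clock nanoseconds per clock_gettime() call, or -1 on error.
double avg_clock_gettime_ns(clockid_t clockid, int iterations) {
  struct timespec ts;
  auto start = std::chrono::steady_clock::now();
  for (int i = 0; i < iterations; ++i) {
    if (clock_gettime(clockid, &ts) != 0) return -1.0;
  }
  auto elapsed = std::chrono::steady_clock::now() - start;
  return std::chrono::duration<double, std::nano>(elapsed).count() / iterations;
}
```

Comparing the two averages over several runs, each with a few million iterations, is more meaningful than a single run; the same noise caveats as the JMH numbers apply.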

Lessons:

Read the kernel source. POSIX tells you what is portable; the kernel source tells you what is possible. Sometimes there is a 400x difference between the two. Whether it's worth exploiting is a different question.

Revisit old assumptions. The /proc parsing approach made sense when it was written, before anyone realized the clockid encoding could be exploited this way. Assumptions turn into code, and sometimes it pays to revisit them.

The change landed on December 3, 2025, just a day before the JDK 26 feature freeze. If you use ThreadMXBean.getCurrentThreadUserTime(), JDK 26 (released March 2026) gives you a 30-400x speedup for free!
