From what I’ve read, Mythos is a very big model. Rumors suggest it is similar in size to the (very short-lived) GPT-4.5.

I join many commentators in thinking that the primary reason it isn’t being released more widely is compute. Anthropic is possibly the most compute-constrained of the major AI labs at the moment, and I strongly suspect they don’t have the compute to deploy it more widely even if they wanted to.

It’s also expensive: the leaked price is $125/MTok for output, 5 times more than Opus, which is itself the most expensive model out there.
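To make that gap concrete, here is a back-of-the-envelope sketch. It assumes only the leaked $125/MTok output price and the 5x ratio above (which puts Opus at $25/MTok); the 2-million-token workload is a made-up example, and input-token costs are ignored.

```python
# Back-of-the-envelope output-token cost comparison.
# Assumption: leaked Mythos price of $125 per million output tokens,
# and Opus at one fifth of that (derived from the 5x ratio, not a quoted price).
MYTHOS_OUTPUT_PER_MTOK = 125.0
OPUS_OUTPUT_PER_MTOK = MYTHOS_OUTPUT_PER_MTOK / 5  # 25.0


def output_cost(tokens: int, price_per_mtok: float) -> float:
    """Dollar cost of generating `tokens` output tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_mtok


# Hypothetical workload: a long agentic run that emits 2 million output tokens.
tokens = 2_000_000
print(f"Mythos: ${output_cost(tokens, MYTHOS_OUTPUT_PER_MTOK):,.2f}")  # Mythos: $250.00
print(f"Opus:   ${output_cost(tokens, OPUS_OUTPUT_PER_MTOK):,.2f}")    # Opus:   $50.00
```

At that ratio, every dollar spent on Opus output becomes five dollars on Mythos, which matters a lot for long-running agents.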

But it probably doesn’t matter

One thing that has been really overlooked amid all the focus on frontier-scale models is how quickly the gains of larger models are being replicated in smaller ones. I’ve spent a lot of time with the open-weight Gemma 4 model, and it’s incredibly impressive for a model that is ~50 times smaller than frontier models.

So I have no doubt that whatever capabilities Mythos has will become available relatively quickly in smaller models, which are far easier to serve.

And even if Mythos’s sheer size is somehow intrinsic to its capabilities (which, given the current progress of smaller models, I highly doubt), it’s only a matter of time before new chips can serve it en masse. It’s important to see where the puck is going.

Sandboxing is in danger

As I’ve written before, I think LLMs pose an extremely serious cybersecurity risk. Fundamentally, we are seeing a paradigm shift in how easy it has become to find serious flaws and bugs in software (and thus exploit them for nefarious purposes).

To see why, it’s worth taking a step back to understand how modern cybersecurity is achieved. One of the most important concepts is the sandbox. Almost every electronic device you touch on a day-to-day basis has one (or several) of these layers protecting the system. In short, a sandbox is an isolated (often ‘virtualized’) environment where software can run on the system, but with limited permissions, separated from other software, and behind a very strong boundary that is meant to prevent it from ‘breaking out’.

If you’re reading this on a modern smartphone, you have at least 3 layers of sandboxing between this page and your phone’s operating system.

First, there are (at least) two levels of sandboxing in your browser. The first is the JavaScript execution environment, which runs the interactive code on websites. That environment is in turn wrapped by the browser’s own sandbox, which limits what the site as a whole can do. Finally, iOS or Android has an app sandbox that limits what the browser itself can do.
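One way to picture this nesting is as a set of capabilities that each layer can only narrow. The sketch below is a toy model, not how any real browser or OS implements sandboxing; the `Sandbox` class, the layer names, and the capability strings are all hypothetical. What it illustrates is that code in the innermost layer effectively gets only what every enclosing layer allows, and that escaping one layer still leaves the outer ones in force.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sandbox:
    """Toy model of one sandbox layer: a name and the capabilities it permits."""
    name: str
    allowed: frozenset[str]


def effective_capabilities(layers: list[Sandbox]) -> frozenset[str]:
    """Code inside all `layers` can only do what *every* layer permits (set intersection)."""
    caps = layers[0].allowed
    for layer in layers[1:]:
        caps = caps & layer.allowed
    return caps


# Hypothetical capability sets, listed from innermost to outermost.
js_engine = Sandbox("JS engine", frozenset({"run_script", "dom_access", "network_fetch"}))
renderer = Sandbox("browser sandbox", frozenset({"run_script", "dom_access", "network_fetch", "gpu"}))
os_app = Sandbox("OS app sandbox", frozenset({"run_script", "dom_access", "network_fetch", "gpu", "camera"}))

# Normal web page: confined by all three layers.
print(sorted(effective_capabilities([js_engine, renderer, os_app])))
# ['dom_access', 'network_fetch', 'run_script']

# After escaping only the JS engine, the two outer layers still apply.
print(sorted(effective_capabilities([renderer, os_app])))
# ['dom_access', 'gpu', 'network_fetch', 'run_script']
```

Note that nothing in either result touches files or contacts: to get at those, an attacker would have to break every layer, not just one.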

This defense in depth is absolutely fundamental to modern information security, and it is what allows users to browse “untrusted” websites with any degree of safety. For a malicious website to take control of your device, it needs to chain together multiple vulnerabilities at once. In practice this is extremely difficult (and these kinds of exploit chains sell for millions of dollars on the gray market).
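A rough way to see why chains are so hard: if an attacker needs a working exploit for every layer, and we assume (a big simplification) that success at each layer is independent, then the chance of completing the full chain is the product of the per-layer success rates. The `chain_success_probability` helper and the per-layer numbers below are purely illustrative, not measurements.

```python
from math import prod


def chain_success_probability(per_layer: list[float]) -> float:
    """Probability of exploiting every layer in a chain, assuming independent layers."""
    return prod(per_layer)


# Illustrative 3-layer chains: weak attacker vs. much stronger attacker.
weak = chain_success_probability([0.01, 0.01, 0.01])
strong = chain_success_probability([0.5, 0.5, 0.5])
print(f"1% per layer  -> {weak:.6%} full chain")
print(f"50% per layer -> {strong:.1%} full chain")  # 50% per layer -> 12.5% full chain
```

Because the terms multiply, a high success rate against a single layer does not by itself imply a high full-chain rate; but raising every per-layer rate raises the chain rate much faster than any one of them.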

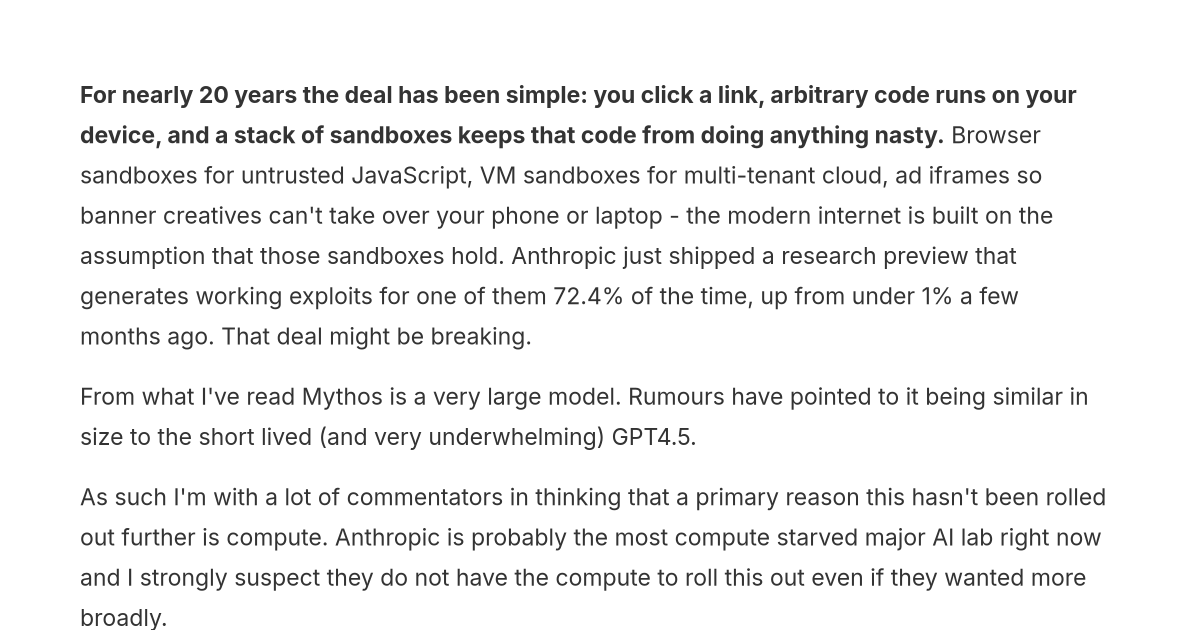

Guess what? According to Anthropic, the Mythos preview successfully generates a working exploit for Firefox’s JS shell in 72.4% of tests. Opus 4.6 managed this in less than 1% of tests in the last evaluation:

A few caveats are worth noting. The JS shell here is Firefox’s standalone SpiderMonkey engine, so this means escaping the innermost sandbox layer, not the full browser chain (the renderer process sandbox and the OS app sandbox still sit on top). And this is Anthropic’s own benchmark, not an independent one. But even setting both of those aside, the trajectory is what matters: we’ve gone from “effectively zero” to “72.4% of the time” in one model generation, on a real-world target rather than a toy CTF.

It’s horrifying once you understand the implications. If an LLM can find exploits in browser sandboxes, which are some of the most heavily protected pieces of software on the planet, then suddenly any website you casually browse could contain malicious code that ‘escapes’ the sandbox and theoretically takes control of your device, sending all the data on your phone to some nasty person.

These attacks are so dangerous because the internet is built around sandbox security. For example, every banner ad your browser loads runs in a separate sandboxed environment. This means ads can run large amounts of (mostly) unvetted code, and everyone relies on the browser sandbox to contain it. If that sandbox collapses, a single malicious ad campaign could take over millions of devices in a matter of hours.

But it’s not just websites

Sandboxes (and virtualization) are equally fundamental to running cloud computing at scale. Most servers these days don’t run code directly on the physical machine they sit on. Instead, AWS et al. take physical hardware and “slice” it into so-called “virtual” servers, selling each slice to a different customer. This lets many more applications run on the same hardware, and enables some nice profit margins for the companies involved.

It works on much the same principle as your phone, with multiple layers that stop customers from accessing each other’s data and (more importantly) from reaching AWS’s control plane.
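The hypervisor’s core job can be caricatured as: hand each customer a disjoint slice of the machine, then check every access against that slice. The sketch below is a toy model under that assumption; the `Host` class and tenant names are hypothetical, and real hypervisors enforce this boundary with hardware-assisted page tables rather than a Python check.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """Toy model of one physical server sliced into isolated guest VMs."""
    total_gib: int
    slices: dict[str, range] = field(default_factory=dict)
    next_free_gib: int = 0

    def allocate(self, tenant: str, gib: int) -> None:
        """Give `tenant` a contiguous, non-overlapping slice of host memory."""
        if self.next_free_gib + gib > self.total_gib:
            raise MemoryError("host is full")
        self.slices[tenant] = range(self.next_free_gib, self.next_free_gib + gib)
        self.next_free_gib += gib

    def check_access(self, tenant: str, address_gib: int) -> bool:
        """The isolation boundary: a guest may only touch addresses in its own slice."""
        return address_gib in self.slices.get(tenant, range(0))


host = Host(total_gib=64)
host.allocate("customer_a", 16)  # gets GiB 0-15
host.allocate("customer_b", 16)  # gets GiB 16-31
print(host.check_access("customer_a", 10))  # True: inside customer_a's own slice
print(host.check_access("customer_a", 20))  # False: that memory belongs to customer_b
```

In these terms, a VM escape is a guest finding a bug that makes `check_access` give the wrong answer or skip it entirely. A control-plane compromise is worse still: the attacker is no longer a guest being checked, but the one handing out the slices.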

So we have a huge problem if these sandboxes fail, and all signs point to that happening this year. I should tone down the disaster porn a bit: there have been plenty of sandbox escapes before that didn’t result in chaos. But I have a strong feeling that this time is going to be different.

To be clear about the stakes: when AWS us-east-1 goes down today (which it has, multiple times), it’s front-page news globally and causes significant disruption to day-to-day life. And that is just one of AWS’s regions. If a malicious actor were able to take control of the AWS control plane, chances are they could take over every region at once. And unlike the internal faults behind previous outages, with a bad actor in charge it would be far harder to restore service in a timely manner.

The plan

Given all this, it’s understandable that Anthropic is cautious about releasing it into the wild. But the cat is already out of the bag. Even if Anthropic pulled a Miles Dyson and dropped its model into a pit of molten lava, someone else is going to scale up RL on their own models and release something comparable. The incentives are too strong, and the prisoner’s dilemma kicks in again.

The current status quo appears to be that these next-generation models will be released to a select group of cybersecurity professionals and related organizations, so they can harden things as much as possible before these capabilities are more widely available.

Perhaps this is the best that can be done, but it feels to me like a repeat of the famous “security through obscurity” approach, which has become a meme in its own right in the infosec world. It also seems very far-fetched to me that these organizations, even with early access, are going to find and fix most of the serious problems in such a limited time period.

And this brings me to my final point. While Anthropic is offering $100 million in credits and a $4 million ‘direct cash donation’ to open source projects, that doesn’t cover all open source projects.

There is a very long tail of open source projects that everyone trusts without thinking. While obvious ones like the Linux kernel are getting this “access” ahead of time, in reality there are millions of pieces of open source software (never mind commercial software) that are essential to running a large share of systems. I’m not quite sure where the plan leaves these.

Perhaps this is just another round of the cat-and-mouse cycle that settles into a mostly stable equilibrium, and at worst we see some short-term disruption. But when I step back and look at how fast the industry has moved over the last few years, I’m not so sure.

And one thing I think is certain: it looks like we now have superhuman capability in at least one domain. I don’t think it will be the last.
