Robotics machine learning company Generalist has announced GEN-1, a new physical AI system that it says “exceeds production-level success rates” on “a wide range of physical skills” that require human-like hand dexterity and muscle memory. Generalist is also promoting the new model’s ability to respond to disruptions by improvising new moves and “connect[ing] ideas from different places to solve new problems.”

GEN-1 is based on Generalist’s previous GEN-0 model, which the company introduced in November as a proof of concept for the applicability of scaling laws in robotics training, showing how more pre-training data and computation time improve post-training performance. But while large language models can effectively process the trillions of words collectively written on the Internet as part of their training, robotic models do not have a uniform, easily accessible source of quality data about how humans manipulate objects.

To help solve this problem, Generalist has relied on “data hands,” a set of wearable pincers that capture subtle movements and visual information as humans perform manual tasks. Generalist now claims to have collected more than half a million hours and “petabytes of physical interaction data” to help train its physical models.

Shut up and take my money (from my wallet) (then put it back).

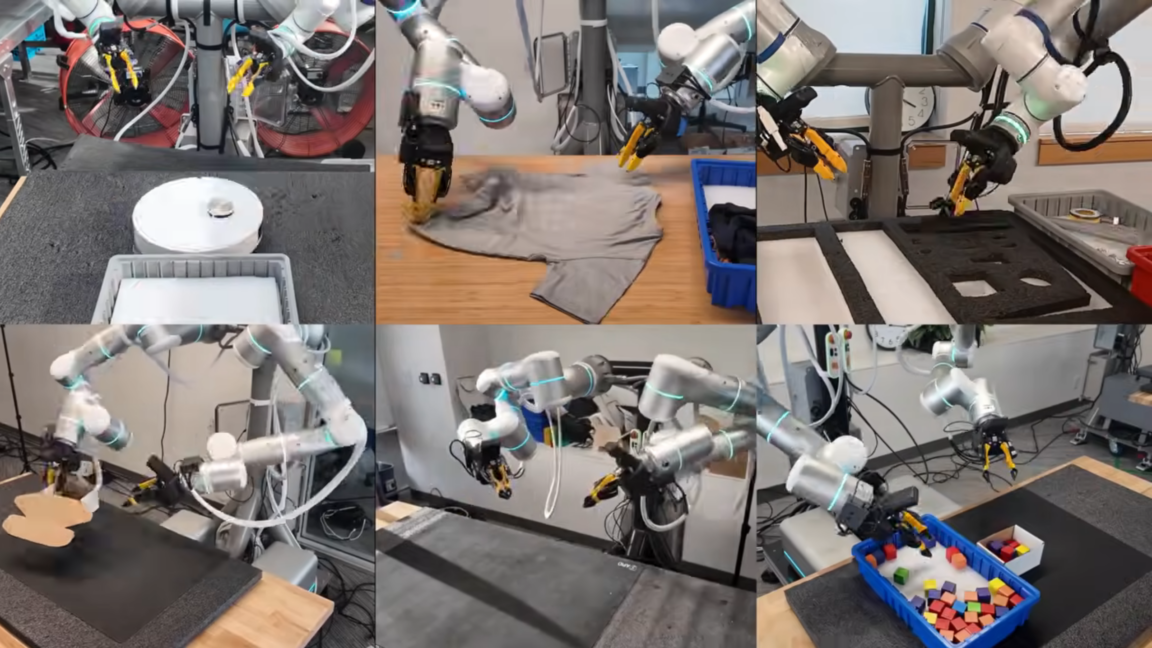

The result is an autonomous system that is precise enough to put money in a wallet and adaptable enough to do laundry or sort auto parts. According to Generalist, the model now reaches a 99 percent success rate on frequently repeated but delicate mechanical tasks such as folding boxes, packing phones, and servicing robot vacuums, and it completes them nearly three times as fast as the previous GEN-0 model. According to the company, GEN-1 can hit these marks after only an hour of adapting its pre-training with “robot data” specific to the robot embodiment it runs on.

Recovering from mistakes

In the past, complex robotic systems typically relied on carefully pre-programmed motions or were trained to focus exclusively on a single task with little variation. What sets GEN-1 apart, Generalist says, is the model’s ability to improvise based on its past experience and react naturally to perturbations, even if they are “well outside the training distribution.”
