The complaint states that her first instinct was to contact other victims she knew, then “eventually, local law enforcement was contacted, and a criminal investigation was initiated.”

Examining the Discord evidence, police quickly determined that the perpetrator had access to the first victim’s Instagram “because he had maintained a close and friendly relationship with her.” Upon searching his phone, police found a third-party app that had licensed or otherwise purchased access to Grok, which they concluded the culprit had used to morph photos of the girls.

From there, the bad actor uploaded the images to a file-sharing platform called Mega and used them as “barter tools in Telegram group chats with hundreds of other users,” trading the AI CSAM files “for sexually explicit material of other minors.”

The lawsuit says the victims have suffered extensive harm, including severe emotional and mental distress. Victims who knew the perpetrator remain unsure whether the Grok-generated CSAM was shared with classmates or distributed to others at their school, the complaint states. One girl fears the scandal will affect her college admissions, while another is too scared to attend her graduation.

However, even more worrying than the prospect of people they know seeing the AI CSAM is the fear that the girls will now be stalked because of Grok’s output. As the lawsuit states, “It appears that the victims’ real first names and the name of their school were linked to their online files, meaning that other online predators may also be able to identify them, creating a greater risk of stalking.”

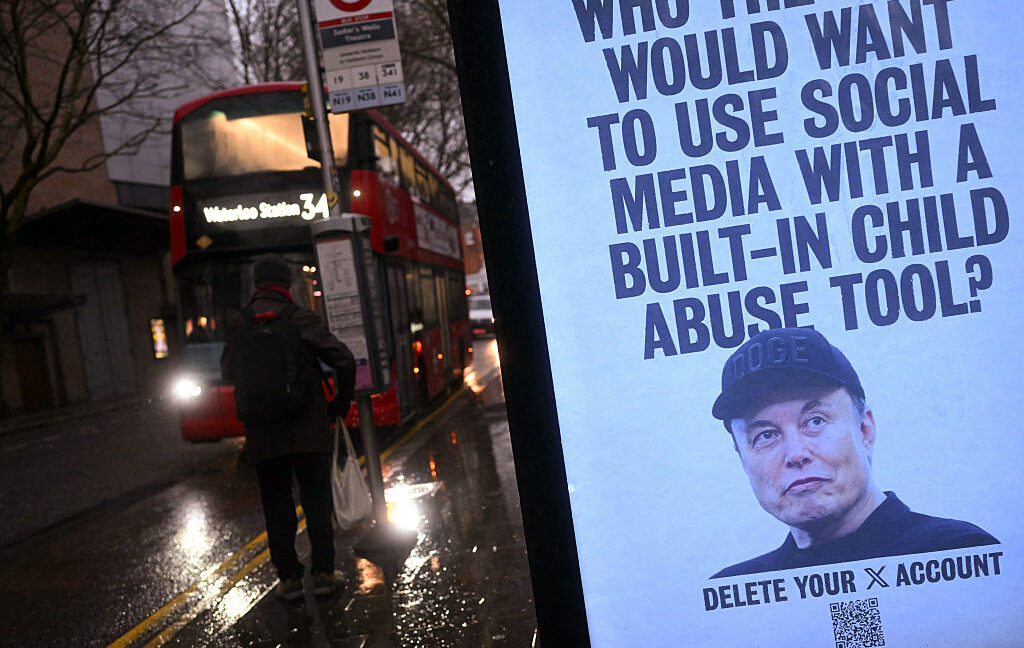

xAI reportedly hosts Grok CSAM

While it was previously reported that Grok Imagine’s paying customers were generating more graphic output than the Grok output that sparked outrage at xAI, the lawsuit alleges that xAI has also taken other steps to hide how it profits from explicit content that harms real people.
