But the use of AI has injured ArXiv and it is bleeding. And it’s not clear that the bleeding can ever be stopped.

As a recent story in The Atlantic notes, ArXiv creator and Cornell information science professor Paul Ginsparg has been concerned since the rise of ChatGPT that AI could be used to break down the minor but essential barriers that keep junk from being published on ArXiv. Last year, Ginsparg collaborated on an analysis that looked at probable AI use in ArXiv submissions. Rather frighteningly, scientists who used LLMs to produce plausible-looking papers were more prolific than those who did not use AI. Posters of AI-written or AI-augmented work put out 33 percent more papers.

The analysis said AI could legitimately be used for things like overcoming the language barrier. It goes on:

“However, traditional indicators of scientific quality such as language complexity are becoming unreliable indicators of merit as we experience growth in the volume of scientific work. As AI systems advance, they will challenge our fundamental assumptions about research quality, scholarly communication, and the nature of intellectual labor.”

It’s not just ArXiv. These are difficult times for the credibility of scholarship in general. A surprising first-person account published last week in Nature described the AI misadventures of a scientist in Germany named Marcel Bucher, who was using ChatGPT to generate emails, course information, lectures, and tests. As if that wasn’t bad enough, ChatGPT was also helping him analyze student responses, and he was incorporating it into the interactive parts of his teaching. Then one day, Bucher attempted to “temporarily” disable what he called the “data consent” option, and when ChatGPT suddenly deleted all the information it was storing exclusively in the app (i.e., on OpenAI’s servers), he complained in the pages of Nature that “two years of carefully structured academic work disappeared.”

Widespread, AI-induced laziness on display in exactly the area where rigor and attention to detail are expected is frustrating. It was safe to assume there was a problem when the number of publications skyrocketed just a few months after ChatGPT was first released, but now, as The Atlantic points out, we’re starting to get details on the true nature and scale of that problem: not so much Bucher-like, AI-pilled individuals feeling publish-or-perish anxiety and rushing out fake papers, but fraud on an industrial scale.

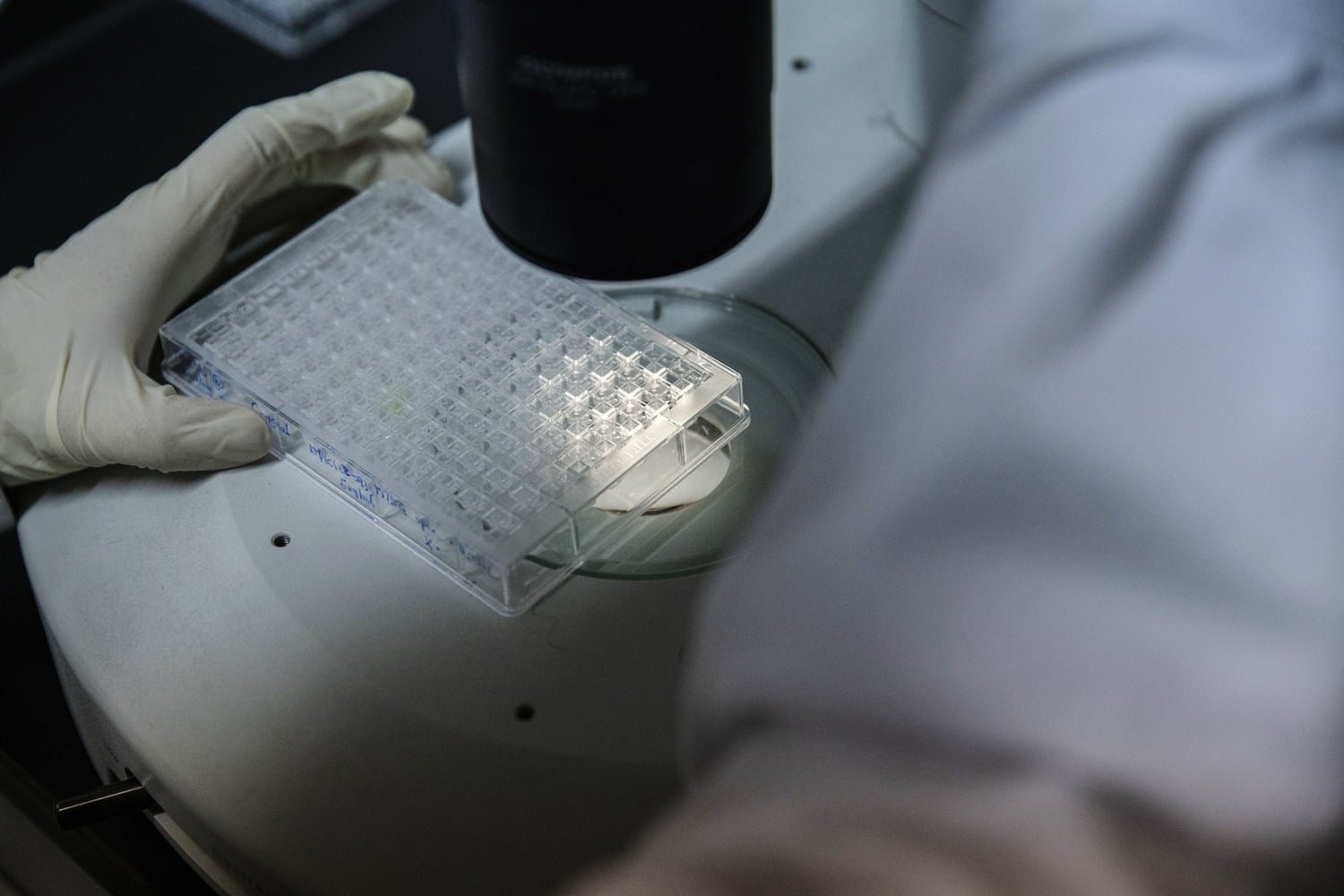

For example, in cancer research, bad actors can prompt for boring papers that claim to document “the interaction between a tumor cell and just one protein out of thousands that exist,” notes The Atlantic. If the paper claims to be groundbreaking, it will raise eyebrows, meaning the ploy is more likely to be noticed, but if the fake cancer experiment’s fake findings are ho-hum, the paper is more likely to see publication, even in a credible journal. Better still if it comes with AI-generated images of gel electrophoresis blobs that are also boring but add extra plausibility at first glance.

In short, there is a flood of laziness in science, and everyone from academics busy planning their lessons to peer reviewers and ArXiv moderators needs to be less lazy. Otherwise, the stores of knowledge that used to be among the few remaining reliable sources of information are about to be overwhelmed by the disease that has already infected them, possibly irreversibly. And does 2026 seem like a time when anyone, anywhere, is becoming less lazy?
