In computer graphics, color can be encoded in many different ways. A common encoding is sRGB (standard RGB), in which a color value is divided into red, green, and blue components. Each component can be re-stored in several different ways, the most common being an unsigned integer (usually 8 bits wide): for example, a value of zero in the red component means no color, while 255 means full red color. Using this encoding, a 160×160 bitmap requires 160*160*(8+8+8), or 76.800 bytes. This creates some problems for memory-constrained devices such as older PalmOS PDAs: such a bitmap will not fit in a single resource, limited to a scant 65.536 bytes. Another problem is memory alignment, since each pixel requires an odd number of bytes. The Motorola 68000-compatible processors powering those devices had a 16-bit data bus, making access to long streams of data aligned at 24 bits inefficient. Because of that, later PalmOS versions offered two different encodings for storing color bitmaps: 8 bits palette Bitmaps, capable of using up to 256 colors simultaneously and 16-bit straight color Bitmaps, capable of using 65.536 different colors (encoded using 5 bits for red, 6 for green and 5 bits for blue).

Our main concern here is with the first type: a palette stores 256 colors, which are indexed from 0 to 255. Each palette entry defines the red, green, and blue components as unsigned 8-bit integers. Given the palette index, retrieving the color components can be achieved in constant time, or O(1) Using Big O notation. However, the inverse operation is more interesting. Given an arbitrary RGB color triplet of all 256 palette entries, which one is nearest To the triplet? An RGB triplet can represent 16.777.216 different colors, but our palette only stores 256 unique colors. It is obviously impossible to provide an exact color for every triplet, but we can find a compromise and return an estimate. However, before we can answer this question, we must define nearestGiven two arbitrary colors in the palette, which best represents the target RGB triplet?

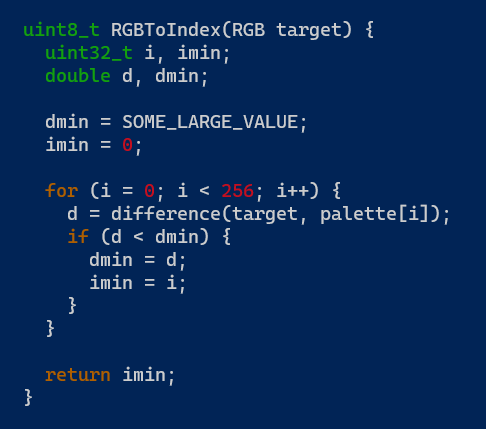

There are many ways to define A nearest The function, and the general algorithm, is outlined in this simplified C function called RGBToIndex. It accepts an RGB target color and returns the index of the color that best approximates the target:

The function iterates the palette, and for each entry, it calls a difference function that accepts two colors, the target and the palette entry. The value returned by the difference() function is compared to the current minimum (initialized to a larger value before the loop) and, if it is lower, the minimum is updated. Finally, the index of the entry that generated the minimum is returned. There is an obvious optimization that was omitted for simplicity: if any exact matchThe loop can be drawn out first, as any other color will not give better results.

Our goal is to compare different algorithms and see how they perform, analyzing the quality of the image produced and the time it takes to run. The test image is a 320×180 frame from the Blender Foundation’s Big Buck Bunny short film, which is licensed under CC BY 3.0. Here is the original 24-bit RGB image, scaled down to 320×180 pixels:

To make things similar, since we will be comparing images that use a color palette, here is the same image converted to a customized palette of 256 colors with Gimp:

Our test images will also have a color palette, but they will use the default palette found in PalmOS, which is obviously not optimized for a specific image. Now the hard part: how do we compare colors? We’ll start with a color space model that represents colors the way humans perceive colors. This shows that we do not see all shades of red, green and blue in the same way. To use this color space, called CIELAB, we need to convert the RGB values to L*a*b* values (the “*” here is part of the notation, not multiplication), where L* represents perceptual lightness and a* and b* represent the unique colors of human vision. This conversion relies on some complex formulas involving floating-point numbers that are beyond the scope of this article. After the transformation, the colors (L1*a1*b1*) and (L2*a2*b2*) are interpreted as points in 3D space and the Euclidean distance between the points is calculated:

Delta E*AB is the value returned by the function difference() in the above code. Using this algorithm, the RGBToIndex function was repeated 1.000.000 times with a random color value as input parameter, and the total time was measured. In my setup, the measured time was about 25 seconds, but since we are going to compare it to other methods, the absolute time is not relevant. We will interpret this time as 1,0 time units And measure other times accordingly. The image transformed with this algorithm is shown below.

It is important to use accurate color models if accuracy is required, but in practice, we can get away with simpler models that are much faster to compute. The first adaptation is to forget how we see colors differently and treat red, green and blue in a similar way. We can skip the initial transformation altogether and calculate the Euclidean distance directly on the RGB components:

Using the same random seed (so that the same color sequence is generated), RGBToIndex was repeated again 1.000.000 times. The total time is now 0.0260 time units, which is a dramatic improvement.

There are noticeable differences at the base of the tree trunk, where the CIELAB method performed better, and also on the grass, where apparently the sRGB method gave a nicer looking result.

Another improvement is possible if we realize it absolute The distance between two colors is not important, but only their Relative distance. If the square root of (A) is less than the square root of (B), it means that (A) is less than (B). This means we can skip an expensive floating-point square root operation and compare squared distances, now working only with integers. Using this small optimization, the total time is reduced to 0.0015 time units, which is another significant improvement.

The red line in the picture below shows the Euclidean distance between the black dot on the bottom left and the black dot on the top right (in 2D space, but the same principle applies to 3D space as well). The colors in the picture are arbitrary; They are not related to RGB values. The blue and green lines represent what is called the Manhattan distance: instead of finding the shortest path between two points, we are forced to walk along the gray lines, taking the vertices as we wish. Using this metric, the blue line is 18 units long (9 horizontal + 9 vertical). The green line is also 18 units (4 + 3 + 3 + 3 + 2 + 3), which shows that no matter which path we choose on the grid, the distance is the same.

The theoretical advantage of this method over the previous method is that it is not necessary to multiply, only to add. This no longer gives a direct representation of distance, but Relative Distances between points are preserved. The RGBToIndex run with this new distance function completes in 0,0015 time units, which is equivalent to the previous method. The image quality is also similar to the previous two in which Euclidean distances were used.

So far, all methods are called linear or sequential search. Iterating over all entries in the palette is a But) Operation. In theory, it is possible to find the closest color without examining each palette entry. But for that, we need a special data structure called a KD tree, or K-dimensional tree. In our case, K is 3, because it has three dimensions: red, green and blue. A KD tree is like a binary search tree, in which the dimensions are cycled at each level of the tree. Each no-leaf node divides the color space into two hyperplanes. For example, to the left of the root node are all colors whose red value is less than the root, and to the right are all colors whose red value is greater than the root. At the second level, the green dimension is used, at the third level, the blue dimension is used, and the cycle is repeated. However, in practice, the implementation of kd trees that I have tested performs worse than all methods using Euclidean or Manhattan distances.

PumpkinOS, a re-implementation of PalmOS that I’m developing, relies on an efficient implementation of RGBToIndex to perform complex bitmap rendering using multiple, incompatible color palettes. After much experimentation, the current distance function is based on the square Euclidean method.