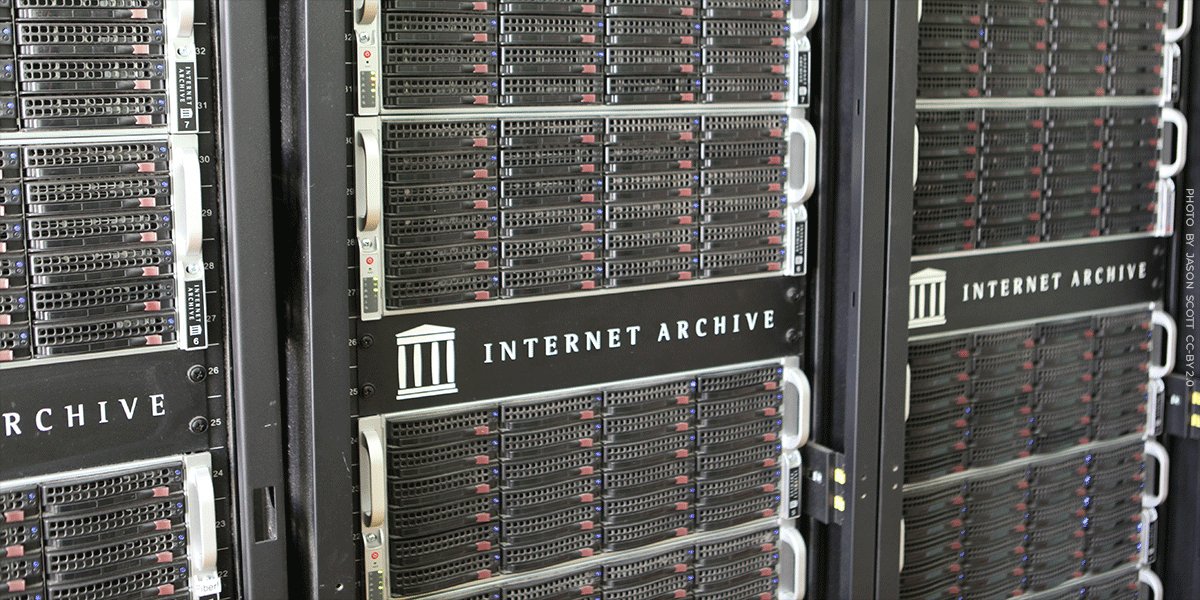

This is effectively what has started happening online in the last few months. The Internet Archive, the world's largest digital library, has preserved newspapers since they went online in the mid-1990s. The Archive's mission is to preserve the Web and make it accessible to the public. To that end, the organization operates the Wayback Machine, which now includes more than a trillion archived web pages and is used daily by journalists, researchers, and courts.

But in recent months The New York Times began blocking the Archive from crawling its website, using technical measures that go beyond the Web's traditional robots.txt rules. This risks destroying a record that historians and journalists have relied on for decades. Other newspapers, including The Guardian, seem to be following suit.

For nearly three decades, historians, journalists, and the public have relied on the Internet Archive to preserve news sites as they appeared online. Those archived pages are often the only reliable record of how stories were originally published. In many cases, articles are edited, changed, or deleted, sometimes openly, sometimes not. The Internet Archive often becomes the only place to see those changes. When major publishers block the Archive's crawlers, that historical record begins to disappear.

The Times says the move is motivated by concerns that AI companies are scraping news content. Publishers want control over how their work is used, and many, including the Times, are now suing AI companies over whether training models on copyrighted material violates the law. There is a strong case that such training is fair use.

Whatever the outcome of those lawsuits, blocking nonprofit archivists is the wrong response. Organizations like the Internet Archive are not building commercial AI systems. They are preserving the record of our history. Shutting down that preservation in an effort to control AI access would essentially destroy decades of historical documentation in a fight that libraries like the Archive did not start and did not ask for.

If publishers shut out the Archive, they aren't just limiting bots. They are erasing the historical record.

Archiving and search are legal

Making content searchable is a well-established fair use. Courts have long recognized that it is often impossible to create a searchable index without making copies of the underlying content. That's why, when Google copied entire books to create a searchable database, courts clearly recognized it as fair use. The copying served a transformative purpose: enabling discovery, research, and new insights about creative works.

The Internet Archive works on the same principle. Just as physical libraries preserve newspapers for future readers, the Archive preserves the historical record of the Web. Researchers and journalists rely on it every day. According to Archive staff, Wikipedia alone links to more than 2.6 million news articles preserved at the Archive, spanning 249 languages. And this is just one example. Countless bloggers, researchers, and journalists rely on the Archive as a stable, authoritative record of what was published online.

The same legal principles that protect search engines should also protect archives and libraries. Even if courts impose limits on AI training, the law protecting search and web archives is already well established.

The Internet Archive has preserved the historical record of the Web for nearly thirty years. If major publishers begin blocking that mission, future researchers may find that large parts of that historical record have disappeared. There are real disputes over AI training that should be resolved in the courts. But sacrificing the public record to fight those battles would be a grave and possibly irreversible mistake.
