But “that cannot be the case,” Goldberg argued.

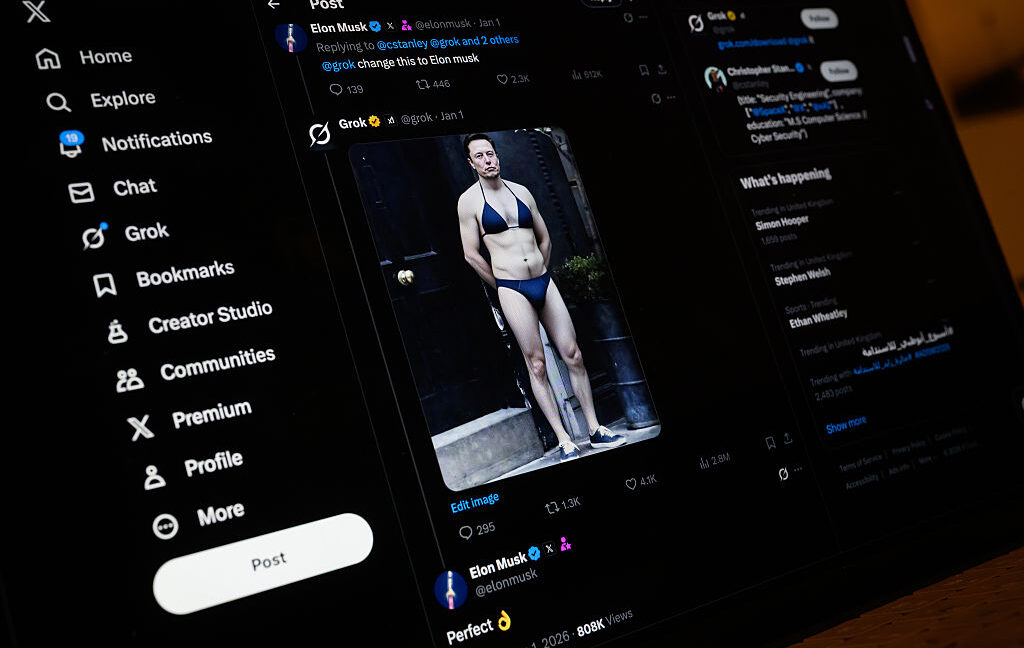

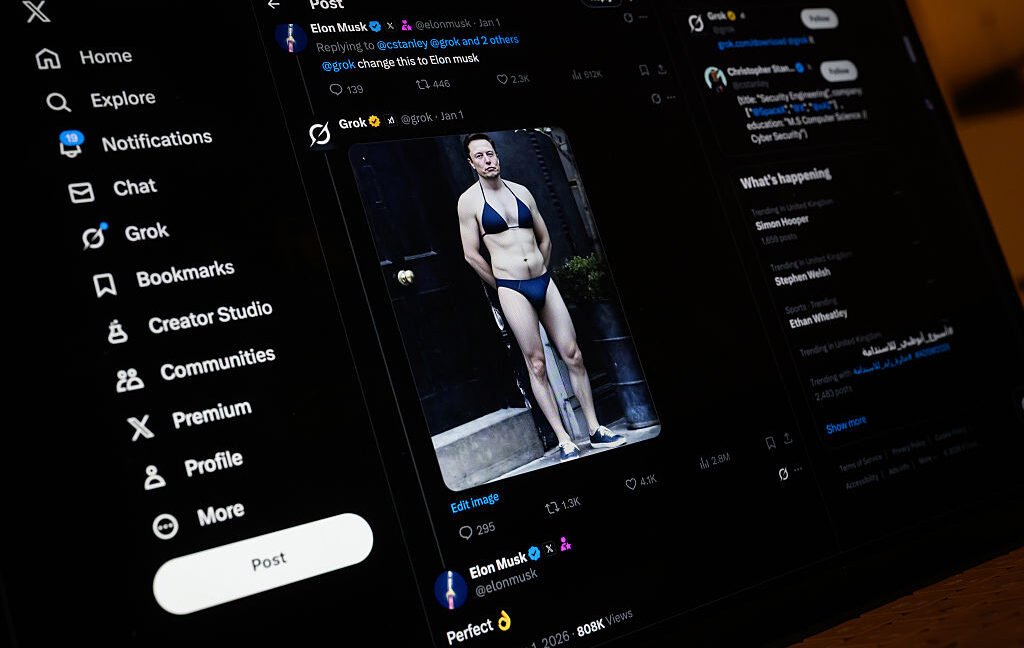

Facing “the implicit threat that Grok would put St. Clair’s images online and, presumably, make more of them,” St. Clair had no choice but to negotiate with Grok, Goldberg argued. That interaction should not dilute the protections St. Clair seeks to claim under New York law, Goldberg said, asking the court to void St. Clair’s xAI contract and reject xAI’s motion to change venues.

Should St. Clair win her battle to keep the lawsuit in New York, the case could help set a precedent for perhaps millions of other victims who are considering legal action but are afraid to face xAI in a court of Musk’s choosing.

Goldberg argued, “It would be unjust to expect St. Clair to prosecute in a state so far from her residence, and it may be that a trial in Texas would be so difficult and inconvenient that St. Clair would effectively be deprived of her day in court.”

Grok may continue to harm children

The estimated volume of sexualized images reported this week is worrying because it suggests that Grok, at the height of the scandal, may have been generating more child sexual abuse material (CSAM) each month than X reports across its entire platform.

In 2024, X Safety reported 686,176 instances of CSAM to the National Center for Missing and Exploited Children (NCMEC), an average of approximately 57,000 CSAM reports per month. If CCDH’s estimate of 23,000 Grok outputs that sexualized children over an 11-day period is accurate, the monthly total could exceed 62,000 if Grok were left unchecked.
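The comparison above can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in this article; the 30-day month and the assumption that Grok's output rate stays constant across the month are illustrative choices, not something CCDH or X has stated.

```python
# Figures quoted in the article.
X_CSAM_REPORTS_2024 = 686_176  # X Safety reports to NCMEC in 2024
GROK_ESTIMATE = 23_000         # CCDH estimate of Grok outputs sexualizing children
GROK_WINDOW_DAYS = 11          # length of the period CCDH's estimate covers

# Assumption (not from the article): a 30-day month and a constant daily rate.
DAYS_PER_MONTH = 30


def x_monthly_average() -> float:
    """Average number of CSAM reports X made per month in 2024."""
    return X_CSAM_REPORTS_2024 / 12


def grok_monthly_projection(days_per_month: int = DAYS_PER_MONTH) -> float:
    """Scale the 11-day estimate up to a full month at a constant daily rate."""
    return GROK_ESTIMATE / GROK_WINDOW_DAYS * days_per_month


if __name__ == "__main__":
    print(f"X monthly average:       {x_monthly_average():,.0f}")
    print(f"Grok monthly projection: {grok_monthly_projection():,.0f}")
```

Under those assumptions, the projection lands a little above 62,000 per month against roughly 57,000 for X, which matches the comparison drawn here. A shorter or longer burst of activity would change the projection proportionally.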

NCMEC did not immediately respond to Ars’ request for information on how Grok’s estimated CSAM volume compares to X’s average CSAM reporting. But NCMEC previously told Ars that “regardless of whether an image is real or computer-generated, the harm is real, and the content is illegal.” That suggests Grok may remain a thorn in NCMEC’s side. CCDH warned that even when X removes harmful Grok posts, “images may still be accessed through different URLs,” meaning Grok’s CSAM and other harmful outputs may continue to spread. CCDH also found examples of alleged CSAM that X had not removed by January 15.
