Engineers building browser agents today face a choice between closed APIs they can’t inspect and open-source frameworks that come with no trained model. Ai2 is now offering a third option.

The Seattle-based nonprofit behind the open-source OLMo language models and the Molmo vision-language family is today releasing MolmoWeb, an open-source visual web agent available in 4 billion and 8 billion parameter sizes. Until now, no open-source visual web agent had shipped with the training data and pipeline needed for audit or reproducibility. MolmoWeb does. The accompanying dataset, MolmoWebMix, contains 30,000 human task trajectories, 590,000 individual sub-task executions, and 2.2 million screenshot question-answer pairs across more than 1,100 websites, which Ai2 describes as the largest publicly released collection of human web-task executions ever assembled.

"Can you go from just passively understanding, describing, and captioning images to actually inspiring them to take action in an environment?" Ai2 senior research scientist Tanmay Gupta told VentureBeat. "That’s exactly what MolmoWeb is."

How it works: It sees what you see

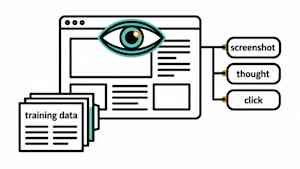

MolmoWeb operates entirely from browser screenshots. It does not parse HTML or rely on accessibility-tree representations of a page. At each step it receives a task instruction, the current screenshot, a text log of previous actions, and the current URL and page title. It generates a natural-language thought describing its reasoning, then executes the next browser action: clicking a screen coordinate, typing text, scrolling, navigating to a URL, or switching tabs.

The model is browser-agnostic. It requires only a screenshot, which means it can run against a local Chrome or Safari instance or a hosted browser service. The hosted demo uses Browserbase, a cloud browser infrastructure startup.
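The step loop and the browser-agnostic design described above can be pictured with a minimal sketch. Everything here is an illustrative assumption rather than Ai2's actual interface: the `Observation`, `Step`, and `Browser` names, the action strings, the `"stop"` action, and the stand-in `FakeBrowser` and `scripted_policy` are hypothetical. The point is only that the agent needs a screenshot plus a little text context, and that any backend able to supply a screenshot and carry out an action could sit behind it.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Protocol


@dataclass
class Observation:
    """What the agent receives at one step (field names are hypothetical)."""
    task: str
    screenshot: bytes       # image bytes of the current page
    history: List[str]      # text log of previous actions
    url: str
    title: str


@dataclass
class Step:
    """What the agent emits: a thought plus one browser action."""
    thought: str
    action: str             # e.g. "click", "type", "scroll", "goto", "switch_tab"
    args: dict = field(default_factory=dict)


class Browser(Protocol):
    """Any backend (local or hosted) that offers these four methods."""
    def screenshot(self) -> bytes: ...
    def url(self) -> str: ...
    def title(self) -> str: ...
    def execute(self, step: Step) -> None: ...


Policy = Callable[[Observation], Step]


def run_agent(task: str, browser: Browser, policy: Policy, max_steps: int = 20) -> List[str]:
    """Observe, think, act, and log until the policy stops or steps run out."""
    history: List[str] = []
    for _ in range(max_steps):
        obs = Observation(task, browser.screenshot(), list(history),
                          browser.url(), browser.title())
        step = policy(obs)
        if step.action == "stop":   # assumed termination signal
            break
        browser.execute(step)
        history.append(f"{step.action} {step.args}")
    return history


class FakeBrowser:
    """Stand-in backend so the sketch runs without a real browser."""
    def __init__(self) -> None:
        self._url = "about:blank"

    def screenshot(self) -> bytes:
        return b""

    def url(self) -> str:
        return self._url

    def title(self) -> str:
        return "stub page"

    def execute(self, step: Step) -> None:
        if step.action == "goto":
            self._url = step.args["url"]


def scripted_policy(obs: Observation) -> Step:
    """Placeholder for the model: navigate once, then stop."""
    if not obs.history:
        return Step("Open the site first.", "goto", {"url": "https://example.com"})
    return Step("Nothing left to do.", "stop")


browser = FakeBrowser()
log = run_agent("open example.com", browser, scripted_policy)
```

In a real deployment the scripted policy would be replaced by a call to the model, and `FakeBrowser` by a driver for a local or hosted browser; the loop itself would not need to change.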

The dataset behind it

The model weights are only part of what Ai2 is releasing. MolmoWebMix, the accompanying training dataset, is the main differentiator from every other open-weight agent available today.

"The data basically looks like a sequence of screenshots and actions, paired with instructions as to what the intention was behind that sequence of screenshots," Gupta said.

MolmoWebMix combines three components.

Human demonstrations. Human annotators completed browsing tasks using a custom Chrome extension that recorded actions and screenshots on more than 1,100 websites. The result is 30,000 task trajectories comprising more than 590,000 individual sub-task executions.

Synthetic trajectories. To go beyond what human annotation alone could provide, Ai2 generated additional trajectories using text-based accessibility-tree agents: single-agent runs filtered for task success, multi-agent pipelines that decompose tasks into sub-goals, and deterministic navigation paths across hundreds of websites. Critically, no proprietary vision agents were used. The synthetic data came from text-only systems, not from OpenAI Operator or Anthropic’s computer use API.

GUI perception data. The third component trains the model to read and reason about page content directly from images. It contains over 2.2 million screenshot question-answer pairs drawn from approximately 400 websites, covering element grounding and screenshot-based reasoning tasks.
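To make the three components concrete, here is a rough sketch of the record shapes they suggest. This is not the released MolmoWebMix schema: the field names, the `"human"` and `"synthetic"` labels, the action strings, and the point-in-box check for grounding are all assumptions chosen for illustration. It shows a trajectory as an instruction plus ordered screenshot-action steps, a success filter applied to synthetic runs, and a grounding example scored by whether a predicted click lands inside the target element.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Trajectory:
    """One recorded task: an instruction plus ordered (screenshot, action) steps."""
    instruction: str
    steps: List[Tuple[str, str]]      # (screenshot path, action text), hypothetical
    source: str                       # "human" or "synthetic" (assumed labels)
    success: Optional[bool] = None    # used here only for synthetic runs


@dataclass
class GroundingExample:
    """A screenshot question whose answer is a region of the page."""
    screenshot: str
    question: str
    target_box: Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def keep_for_training(trajs: List[Trajectory]) -> List[Trajectory]:
    """Keep human trajectories; keep synthetic ones only if they succeeded."""
    return [t for t in trajs if t.source == "human" or t.success is True]


def to_examples(traj: Trajectory) -> List[Tuple[str, str, List[str], str]]:
    """One example per step: (instruction, screenshot, prior actions, target action)."""
    out = []
    for i, (shot, action) in enumerate(traj.steps):
        prior = [a for _, a in traj.steps[:i]]
        out.append((traj.instruction, shot, prior, action))
    return out


def click_hits(example: GroundingExample, x: int, y: int) -> bool:
    """Assumed grounding check: does the click fall inside the target box?"""
    left, top, right, bottom = example.target_box
    return left <= x <= right and top <= y <= bottom


human = Trajectory(
    "Find the contact page",
    [("s0.png", "click(120, 340)"), ("s1.png", "scroll(down)")],
    source="human",
)
failed = Trajectory(
    "Add item to cart",
    [("t0.png", "click(10, 10)")],
    source="synthetic",
    success=False,
)

kept = keep_for_training([human, failed])   # the failed synthetic run is dropped
examples = to_examples(kept[0])             # two steps give two training examples
search_box = GroundingExample("s0.png", "Where is the search box?", (100, 40, 180, 72))
```

Pairing each step with the actions that preceded it mirrors the inputs the article lists for inference time (instruction, screenshot, action log), which is why the sketch builds one example per step rather than one per trajectory.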

"If you’re able to perform a task and you’re able to record a trajectory from it, you should be able to train a web agent on that trajectory to do exactly that task," Gupta said.

How MolmoWeb stands out from the competition

In Gupta’s view, there are two categories of technologies in the browser agent market.

The first is API-only systems, which are capable but closed, with no visibility into training or architecture. OpenAI Operator, Anthropic’s computer use API, and Google’s Gemini computer use fall into this group. The second is open-weight models, a much smaller category. Browser-use, the most widely adopted open alternative, is a framework rather than a trained model. It requires developers to supply their own LLM and build an agent layer on top.

MolmoWeb falls into the second category as a fully trained open-weight vision model. Ai2 reports that it leads that group on four live-website benchmarks: WebVoyager, Online-Mind2Web, DeepShop, and WebTailBench. According to Ai2, it even outperforms older API-based agents built on GPT-4o that use accessibility-tree plus screenshot inputs.

Ai2 documents several current limitations in the release. The model makes occasional errors when reading text from screenshots, drag-and-drop interactions remain unreliable, and performance degrades on ambiguous or heavily constrained instructions. The model was also not trained on tasks requiring login or financial transactions.

Enterprise teams evaluating browser agents aren’t just choosing a model. They’re deciding whether they can audit what’s going on, fine-tune it on internal workflows, and avoid per-call API dependencies.
